PowerColor AX6950 PCS++ Video Card


Written by Bruce Normann

Tuesday, 22 February 2011

PowerColor R6950 PCS++ Video Card

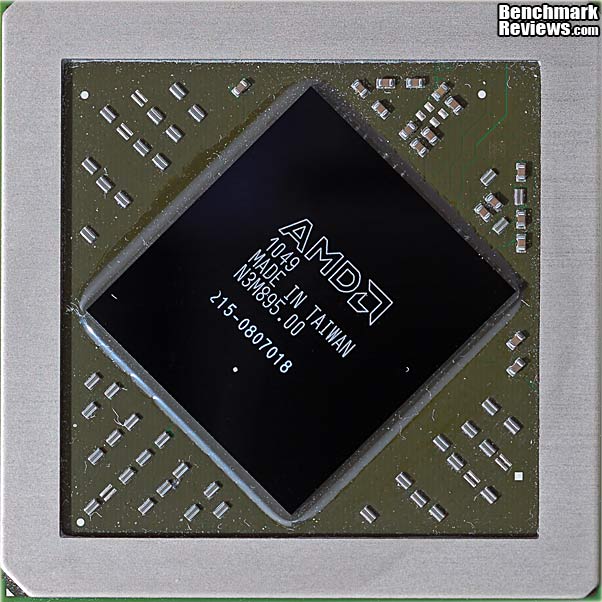

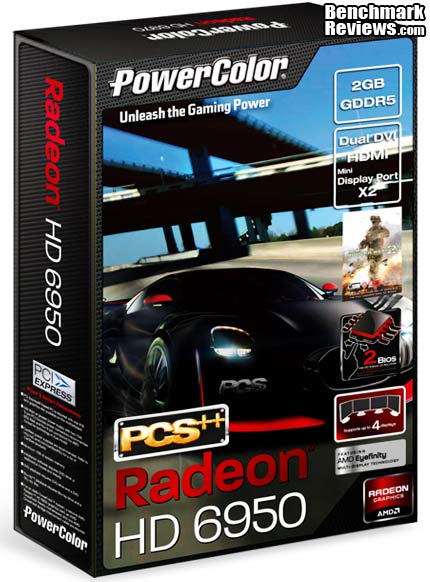

Manufacturer: PowerColor (TUL Corporation)

Full Disclosure: The product sample used in this article has been provided by PowerColor.

AMD's new Radeon HD 6900 series occupies the top position in their single-GPU product hierarchy. The two models, the HD 6950 and HD 6970, are very much like the HD 5850 and HD 5870 that they replace. The xx50 cards generally run at a lower clock rate and have a few sections of the GPU disabled, presumably because the vendor is trying to reclaim chips that have a small, isolated manufacturing defect. But what happens when your manufacturing process is so good that you're not producing enough "defective" chips to meet the market demand? When is a 6950 not a 6950? Well, quite often, as it turns out. In the case of the PowerColor PCS++ HD 6950 video card, it just depends on which way you flip the switch.

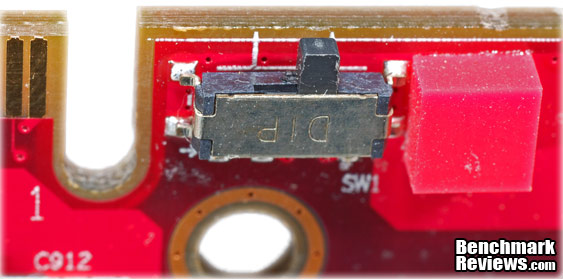

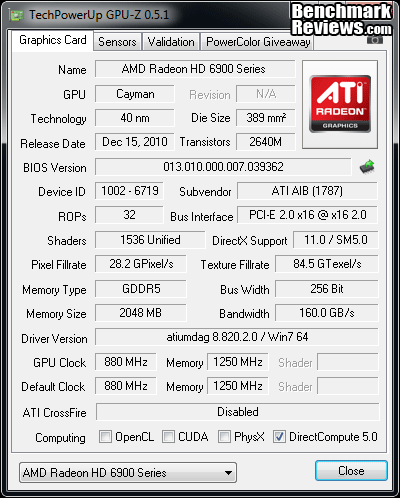

Overclocking has been a constant factor for PCs ever since Intel let the cat out of the bag with their E2180 and other members of the Conroe family. What was sort of an underground activity became mainstream overnight, with 50% overclocks almost guaranteed and 100% overclocks achievable by a great many enthusiasts, even with air cooling. Then AMD came along with their Phenom II CPUs and we got to try our luck at unlocking disabled cores. Now PowerColor has combined both methods into one video card, and they've made it as simple as flipping a switch. Push it one way and you have a standard Radeon HD 6950, with 1408 shaders running at 800 MHz. Push it the other way and you have 1536 shaders running at 880 MHz, which is the exact GPU configuration of the HD 6970. The only difference is that PowerColor kept the PCS++ memory at 1250 MHz instead of spending the extra money for the 1375 MHz memory that a real HD 6970 has. That's an easy fix with a little overclocking, because PowerColor has done the hard work of loading a second BIOS that unlocks the extra 128 shader processors.

This is a new feature for PowerColor and their PCS++ series. This segment of the product line has always been known for wringing the last drop of performance from whatever GPU and platform they used as a basis. But never before has a video card manufacturer been able to add shader cores at will, like this. It's a happy reflection on the maturity of AMD's 40nm design rules that they seem to have an endless supply of perfectly functional HD 6970 chips. Plus, stability has finally arrived in the manufacturing process, as performed by the world's largest semiconductor foundry operation, TSMC in Taiwan.

You may have seen some benchmarks for Radeon HD 6950 video cards already, and an equal number for the HD 6970, but let's take a complete look at the novel PowerColor PCS++ HD 6950 2GB GDDR5, which is a bit of both. Then we'll run it through Benchmark Reviews' full test suite, where we're going to look at how this card performs with both factory BIOS options.

Closer Look: PowerColor PCS++ HD 6950

The PowerColor PCS++ HD 6950 2GB GDDR5 is not based on the AMD reference card at all, but is a completely new design. The board layout is different, the VRM section is different, the cooling section is different, and the biggest difference is also the smallest: a tiny switch along the top edge that turns the whole AMD product line on its ear. Let's start at the top. The first thing you notice when you pick up this video card is that it's fairly light; it's not dense and solid like the reference card. The two axial fans are placed close to one another, at the center of the card. The fans push air down through the shroud and it spreads out through the fin assembly, then down to the component level on the PC board.

The fan shroud is the antithesis of a completely sealed-off design; there are vents all over the black plastic cover. The overall styling is modeled after the general layout of ultra-performance sports cars, but this twin-fan design has less surface area to work with, so the likeness is not quite as obvious as it is with the single-fan PowerColor cards. The fans are basic DC-powered designs with a 3-wire electrical connection for a tachometer output signal. Unfortunately, the third wire is not connected back at the PCB, so the RPM of the fan is not monitored or reported to any of the common monitoring and control utilities; they only show the open-loop percentage number. The axial design of the cooling fans and their location pretty much guarantee that the memory and voltage regulator chips are getting decent airflow in this design.

The heatpipe arrangement uses two long pipes and one short one, all exiting from the mounting block at the top of the card and providing for a fair amount of exposed area before they disappear into the aluminum fin array. We'll see later that there is room for an additional heatpipe in the design, but only three are fitted here. They are all 8mm diameter pipes, which aids their heat-carrying capacity. They are not plated; they are bare copper, but it looks like a clear coating has been applied to prevent oxidation. The PCS++ models from PowerColor have always sported decent overclocks and excellent cooling performance, so I'm expecting good results.

The layout of the various elements of the cooler design is a little easier to see in this view from the GPU's perspective. An oversized copper block mates with the GPU and transfers heat directly to the three copper heatpipes running just slightly offset from the center of the GPU. The fin assembly spans the entire length of the card and is sculpted in several areas to provide the proper clearance for the electrical parts on the printed circuit board. Of the two banks of memory chips, one receives a healthy dose of direct airflow from the rear fan, and the other is in a bit of a no-flow zone between the two fans. For that bank, PowerColor has provided a conductive heat path through the substantial aluminum mounting plate, via thermal interface tape. The copper heatsink for the VRM devices is just to the left of the row of enclosed filter chokes, and it covers a single row of the TI 59901M DrMOS devices, which were recently in short supply.

The three 8mm diameter heatpipes are soldered between the copper mounting plate and the aluminum fin assembly, with all three pipes closely spaced and passing directly over the center of the GPU die. Both ends of the "missing" fourth pipe are easy to see in this view; it looks like it would have been one more short pipe section. This cooler uses traditional assembly techniques, with solder firmly attaching the pipes to the fins and the GPU interface plate. The solder also acts as a reasonably good heat conductor, and electronics manufacturers are intimately familiar with soldering things together, so it's a tried and true assembly technique.

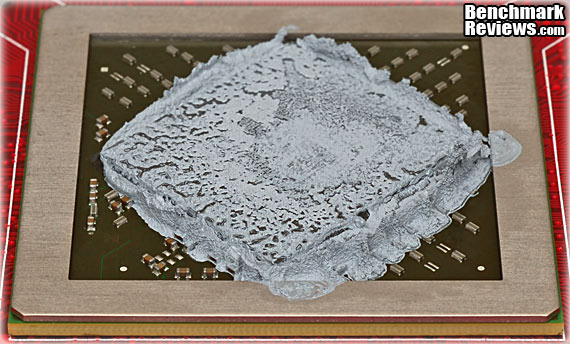

The thermal interface material (TIM) was very evenly distributed by the factory, but was applied way too thick. Take a look at all the excess material oozing off the sides of the GPU; this is literally two to three times as much TIM as necessary. One day, anxious manufacturing engineers are going to figure out that too little TIM is better than too much. For the rest of us who end up correcting these things, a thorough discussion of best practices for applying TIM is available here. I have never had the thermal performance of a video card degrade after I've taken it apart and reassembled it with a smaller amount of high quality TIM paste.

The layout on the front and back of the printed circuit board is very straightforward and it follows a pattern that is common practice for most video cards. The current paths are kept as short as possible by grouping the power distribution and voltage regulator module sections somewhere between the power input connectors and the major electrical loads, which are the GPU and the memory modules. The axial fans on this card are perfectly placed to offer substantial airflow over the critical VRM section. I've mentioned this before, but it bears repeating. For good heat transfer, all airflow is good, turbulent airflow is better, and impingement airflow is the best. The more friction, the better the heat transfer.

For a high-end graphics card, the PowerColor PCS++ HD 6950 is a relatively simple and straightforward product. It reminds me more of the GTX 460 design than it does the AMD HD 6950 reference design. It's not as compact as a GTX 460, because it has a 6+1 power supply design instead of a 3+1, and it's got 2-3 times the amount of memory, but it still looks like a design that's been reduced to its basic elements. It is also much simpler and less costly to produce than the GTX 570, which is also its near neighbor in terms of performance, if not price. The added complexity of the dual, selectable BIOS doesn't really jump out at you, unless you know what to look for. Even then, the overall impact is minimal.

In the next section, let's take a more detailed look at some of the new components on this non-reference board. I did a full tear-down, so we could see everything there is to see...

PowerColor PCS++ HD 6950 Detailed Features

The full PWM-based voltage regulator section that supplies power to the HD 6950 GPU is shown here. It is a 6+1 phase design that is controlled by a relatively new chip: the CHL8228 from CHiL Semiconductor Corporation. It is a dual-loop digital multi-phase buck controller specifically designed for GPU voltage regulation. Dynamic voltage control is programmable through the I2C protocol. CHiL's first big design win in the graphics market was with a slightly simpler 6-phase chip in the GTX 480 Fermi card, a power monster if there ever was one.

The CHL8228 has 8 phases available for use, and the phases can be grouped any way the designer wants. In this case, six are used for the GPU and one of the two remaining is used to supply power to the memory. That leaves one phase unused, which is very common once you get above four phases. Designers tend not to use odd numbers, because they rarely use more than four steps to ramp the current delivery up and down as the load varies. The last phase could have been used to supply 2-phase power to the memory, but the benefit would likely be mostly psychological.

The CHL8228 is fully compatible with the I2C communication protocol, which makes software controlled voltage adjustments a walk in the park. PWM controllers without this capability require more work from the BIOS designer to provide voltage control, and the methods are almost always specific to one or two cards, and cannot be accessed by the most common monitoring and control utilities, such as MSI Afterburner.

The VRM section also features another relatively new chip in this application space: a DrMOS design from Texas Instruments that includes both the driver transistors and the High-Low MOSFET pair in one tightly integrated package. They are positioned right above the R19 chokes in the image above. It's a very small device, with markings of 59901M, and it's so new I can't find any specs for it. It has a bit of a reputation already though, as it was initially being blamed for the production delays of the AMD 6900 series cards. Apparently, it was in short supply for some unexpected reason, so AMD and their AIB partners had to do a fast workaround to get the first Radeon HD 6970 and HD 6950 cards to market. It saves a huge amount of board space; a full complement of discrete MOSFETs and drivers for the low side and high side circuits would not have fit so easily in this area of the board.

This new DrMOS chip is considerably smaller than previous parts. It's only 6mm x 5mm, where many of the recent DrMOS designs were 8mm x 8mm. It doesn't sound like such a big change, but the new part has less than half the surface area (30 mm² vs. 64 mm²). There is a single copper heatsink for the DrMOS chips, which is a custom design for this card. Unfortunately, the design allows the board assembly technicians to place it on the board in either orientation, and if they get it wrong, it looks OK but doesn't contact the IC package nearly as well. I had to flip mine around to get it placed correctly. The Japanese quality mavens have a quality management technique they call poka-yoke that could have been used to prevent this. The part design should only allow it to fit in one orientation, thereby mistake-proofing the assembly.
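As a quick check on the footprint comparison, the arithmetic can be sketched in a few lines of Python. The package dimensions are the ones quoted in the text; the variable and function names are purely illustrative.

```python
# Package footprints quoted in the text, in millimetres (width, length).
new_drmos_mm = (6, 5)   # the TI part used on this card
old_drmos_mm = (8, 8)   # typical of many recent DrMOS designs


def footprint_area(dims_mm):
    """Return the board area of a rectangular package in square millimetres."""
    width, length = dims_mm
    return width * length


new_area = footprint_area(new_drmos_mm)
old_area = footprint_area(old_drmos_mm)
area_ratio = new_area / old_area   # below 0.5 means "less than half"

print(f"{new_area} mm^2 vs. {old_area} mm^2 "
      f"({area_ratio:.0%} of the older footprint)")
```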

It's hard to believe, but this little switch is what this video card is all about. While this isn't the first card to utilize a dual BIOS arrangement, it is the first one to offer a "free" upgrade to the next level of GPU specification, right out of the box. I know several of our readers are proficient at flashing the BIOS of their graphics card, and I've done it a couple of times for a variety of reasons, but it's still not a frequent thing for most enthusiasts to do. In my years of reading various forums, it's also the single most common cause that I see of the condition known as bricking, as in "I bricked my video card...!" Oddly enough, the last big occurrence of a BIOS Flash Mob was when the ATI HD 4830 cards were widely tapped to receive an instant upgrade to HD 4850 status. Even though the success rate was well over 50%, a large number of people learned the hard way that nothing in life is guaranteed. Now for the very first time, PowerColor is offering the general user that guaranteed boost. Through testing, binning, or some other selection method, they are offering an HD 6950 GPU that WILL unlock to HD6970 specs with the flip of a switch.

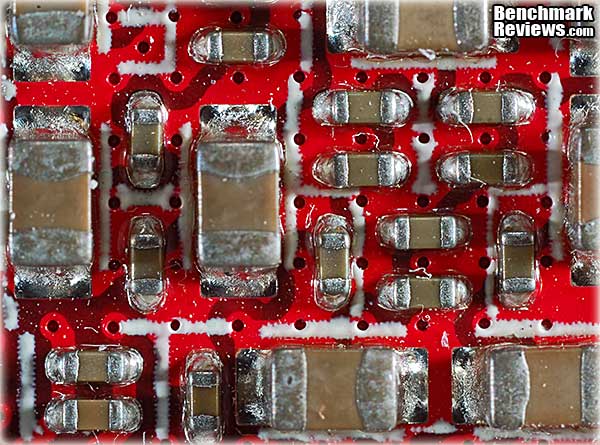

The PC board had some of the best solder quality and precision component placement that I've seen recently, as you can also see above. This is the area on the back side of the board, directly below the GPU, and it's one of the most crowded sections of any graphics card. On my LCD screen, this image is magnified 20X, compared to what the naked eye sees. The smallest SMD capacitors located in this view are placed on 1mm centers. This board was also well above average for cleanliness, compared to some of the samples I've looked at recently. There were some traces of residue across different sections of the board, but they were minor. All manufacturers are under intense pressure to minimize the environmental impact of their operations, and cleaning processes have historically produced some of the most prolific and toxic industrial waste streams. The combination of eco-friendly solvents, lead-free solder, and smaller SMD components have made cleaning of electronic assemblies much more difficult than it used to be.

The memory choice for the PowerColor PCS++ HD 6950 2GB GDDR5 is consistent with the AMD reference design for the HD 6950. The basic Radeon HD 6950 specs require 1250 MHz chips for the memory, which is exactly what these Hynix H5GQ2H24MFR-T2C GDDR5 parts are designed for. They need 1.5V to do it; at 1.35V they are only good for 900 MHz. The stock Radeon HD 6970 cards have a 1375 MHz memory clock, and require the "-ROC" version of this chip to run at that speed. The lower spec memory chips on this graphics card are the only thing keeping it from running at settings identical to the HD 6970. Because there's 2 GB of RAM, the cost for the upgrade would probably be substantial, and PowerColor is trying to keep the cost low for this board, so 1250 MHz it is.

Before we move into the testing phase of the review, let's take a detailed look at the features and specifications for the new AMD Radeon HD 6950 GPU. AMD and PowerColor have supplied us with a ton of information, so let's go....

AMD Radeon HD 6950 GPU Features

The AMD Radeon HD 6950 GPU contained in the PowerColor PCS++ HD 6950 video card has all of the major technologies that the Radeon 5xxx cards have had since September 2009. AMD has added several new features, however. The most important ones are: the new Morphological Anti-aliasing, the two DisplayPort 1.2 connections that support four monitors between them, 3rd generation UVD video acceleration, and AMD HD3D technology. In case you are just starting your research for a new graphics card, here is the complete list of standard GPU features, as supplied by AMD:

AMD Radeon™ HD 6950 GPU Feature Summary:

Now, here are the usual disclaimers: © 2010 Advanced Micro Devices, Inc. All rights reserved. AMD, the AMD Arrow logo, Catalyst, CrossFireX, PowerPlay, Radeon and combinations thereof are trademarks of Advanced Micro Devices, Inc. Microsoft, Windows, Windows Vista, and DirectX are registered trademarks of Microsoft Corporation in the U.S. and/or other jurisdictions. PCI Express is a registered trademark of PCI-SIG. Other names are for informational purposes only and may be trademarks of their respective owners. Additional hardware (e.g. Blu-ray drive, HD or 10-bit monitor, TV tuner) and/or software (e.g. multimedia applications) are required for the full enablement of some features. Not all features may be supported on all components or systems - check with your component or system manufacturer for specific model capabilities and supported technologies.

AMD Radeon HD 6950 GPU Detail Specifications

| Graphics Card | Cores | Core Clock (MHz) | Shader Clock (MHz) | Memory Clock (MHz) | Memory | Interface |
|---|---|---|---|---|---|---|
| MSI GeForce GTX 460 (N460GTX Cyclone 1GD5/OC) | 336 | 725 | 1450 | 900 | 1.0 GB GDDR5 | 256-bit |
| MSI Radeon HD 6870 (R6870-2PM2D1GD5) | 1120 | 900 | N/A | 1050 | 1.0 GB GDDR5 | 256-bit |
| MSI GeForce GTX 560 Ti (N560GTX-Ti Twin Frozr II/OC) | 384 | 880 | 1760 | 1050 | 1.0 GB GDDR5 | 256-bit |
| PowerColor Radeon HD 5870 (PCS+ AX5870 1GBD5-PPDHG2) | 1600 | 875 | N/A | 1250 | 1.0 GB GDDR5 | 256-bit |
| PowerColor PCS++ Radeon HD6950 (AX6950 2GBD5-P22DHG) | 1408 | 800 | N/A | 1250 | 2.0 GB GDDR5 | 256-bit |
| Gigabyte GeForce GTX 480 (GV-N480SO-15I Super Over Clock) | 480 | 820 | 1640 | 950 | 1536 MB GDDR5 | 384-bit |

- MSI GeForce GTX 460 (N460GTX Cyclone 1GD5/OC - Forceware v266.58)
- MSI Radeon HD 6870 (R6870-2PM2D1GD5 - Catalyst 8.820.2.0)
- MSI GeForce GTX 560 Ti (N560GTX-Ti Twin Frozr II/OC - Forceware v266.56)
- PowerColor PCS+ Radeon HD 5870 (AX5870 1GBD5-PPDHG2 - Catalyst 8.820.2.0)
- PowerColor PCS++ Radeon HD 6950 (AX6950 2GBD5-P22DHG - Catalyst 8.820.2.0)
- Gigabyte GeForce GTX 480 (GV-N480SO-15I - Forceware v266.58)
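The memory clock and interface width listed for each test card together set its theoretical peak memory bandwidth. The sketch below works that out in Python for the six cards. It rests on one assumption that is not stated in the review: that the quoted figures are GDDR5 command clocks, with four bits transferred per pin per clock. The shortened card names and the function name are illustrative only.

```python
# (card, memory clock in MHz, bus width in bits), taken from the test card list.
CARDS = [
    ("MSI GeForce GTX 460", 900, 256),
    ("MSI Radeon HD 6870", 1050, 256),
    ("MSI GeForce GTX 560 Ti", 1050, 256),
    ("PowerColor PCS+ Radeon HD 5870", 1250, 256),
    ("PowerColor PCS++ Radeon HD 6950", 1250, 256),
    ("Gigabyte GeForce GTX 480", 950, 384),
]

# Assumption: GDDR5 moves four bits per pin for every cycle of the quoted clock.
TRANSFERS_PER_CLOCK = 4


def peak_bandwidth_gbs(memory_clock_mhz, bus_width_bits):
    """Theoretical peak memory bandwidth in GB/s (1 GB = 10^9 bytes)."""
    transfers_per_second = memory_clock_mhz * 1e6 * TRANSFERS_PER_CLOCK
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_second * bytes_per_transfer / 1e9


for name, clock_mhz, bus_bits in CARDS:
    bandwidth = peak_bandwidth_gbs(clock_mhz, bus_bits)
    print(f"{name:34s} {bandwidth:6.1f} GB/s")
```

Under these assumptions the HD 6950's 1250 MHz memory works out to 160 GB/s, while the 1375 MHz clock of a stock HD 6970 on the same 256-bit bus would give 176 GB/s. These are ceilings, not measured throughput.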

3DMark Vantage Performance Tests

3DMark Vantage is a computer benchmark by Futuremark (formerly named Mad Onion) that measures the DirectX 10 3D gaming performance of graphics cards. A 3DMark score is an overall measure of your system's 3D gaming capabilities, based on comprehensive real-time 3D graphics and processor tests. By comparing your score with those submitted by millions of other gamers you can see how your gaming rig performs, making it easier to choose the most effective upgrades or find other ways to optimize your system.

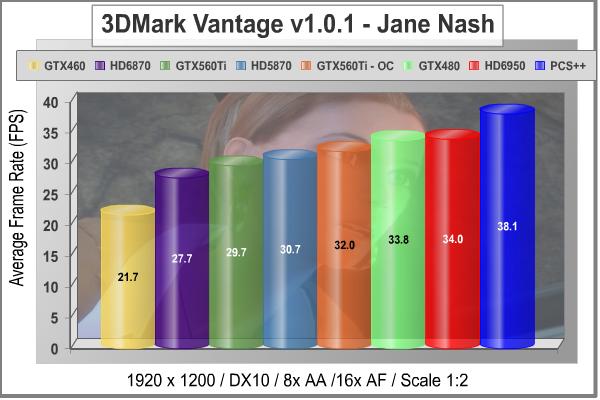

There are two graphics tests in 3DMark Vantage: Jane Nash (Graphics Test 1) and New Calico (Graphics Test 2). The Jane Nash test scene represents a large indoor game scene with complex character rigs, physical GPU simulations, multiple dynamic lights, and complex surface lighting models. It uses several hierarchical rendering steps, including for water reflection and refraction, and physics simulation collision map rendering. The New Calico test scene represents a vast space scene with lots of moving but rigid objects and special content like a huge planet and a dense asteroid belt.

At Benchmark Reviews, we believe that synthetic benchmark tools are just as valuable as video games, but only so long as you're comparing apples to apples. Since the same test is applied in the same controlled method with each test run, 3DMark is a reliable tool for comparing graphics cards against one another.

1680x1050 is rapidly becoming the new 1280x1024. More and more widescreen monitors are being sold with new systems or as upgrades to existing ones. Even in tough economic times, the tide cannot be turned back; screen resolution and size will continue to creep up. Using this resolution as a starting point, the maximum settings applied to 3DMark Vantage include 8x Anti-Aliasing, 16x Anisotropic Filtering, all quality levels at Extreme, and Post Processing Scale at 1:2.

3DMark Vantage GPU Test: Jane Nash

Our first synthetic test shows the base HD 6950 configuration going toe-to-toe with the wildly overclocked Gigabyte GTX480SOC and standing comfortably above the GTX 560 Ti, even in its highly overclocked form. The PowerColor PCS++ BIOS settings allow the Cayman GPU to stretch its legs and jump to the top rank. Both the increase in the number of shaders and the core clock bump contribute to this higher performance by this enhanced Radeon card. There's even more performance where that came from, as these are factory BIOS clocks, and they can be improved on with additional overclocking by the user.
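To put a rough number on how much the shader count and the core clock each contribute, here is a minimal Python sketch using the two switch positions described earlier in this review (1408 shaders at 800 MHz, and 1536 shaders at 880 MHz). It assumes shader throughput scales linearly with both values, which real workloads only approximate; the names are illustrative.

```python
# Switch positions from the review: (shader count, core clock in MHz).
BIOS_STANDARD = (1408, 800)   # stock Radeon HD 6950 configuration
BIOS_UNLOCKED = (1536, 880)   # PCS++ configuration


def relative_shader_throughput(config, baseline):
    """Ratio of (shaders x clock) between two configurations.

    Assumption: throughput scales linearly with both shader count and
    core clock, which is an upper bound rather than a prediction.
    """
    shaders, clock_mhz = config
    base_shaders, base_clock_mhz = baseline
    return (shaders * clock_mhz) / (base_shaders * base_clock_mhz)


shader_gain = BIOS_UNLOCKED[0] / BIOS_STANDARD[0]   # about 1.09
clock_gain = BIOS_UNLOCKED[1] / BIOS_STANDARD[1]    # 1.10
combined_gain = relative_shader_throughput(BIOS_UNLOCKED, BIOS_STANDARD)

print(f"shaders: +{shader_gain - 1:.1%}, clock: +{clock_gain - 1:.1%}, "
      f"combined: +{combined_gain - 1:.1%}")
```

The combined figure of roughly 20% is a ceiling for shader-bound work only. The memory clock is the same 1250 MHz in both switch positions, so measured gains will usually be smaller.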

At 1920x1200 native resolution, things are much the same as the lower screen size; just the absolute values are lower, the ranking stays the same. Once again, the PCS++ settings really jumpstart this GPU and provide a very large increase in performance. All for free.....

Let's take a look at test #2 now, which has a lot more surfaces to render, with all those asteroids flying around the doomed planet New Calico.

3DMark Vantage GPU Test: New Calico

In the medium resolution New Calico test, the GeForce cards show a little extra muscle, particularly the newest GPU in the game, the GTX 560 Ti. The stock Radeon HD 6950 gives up 15% in performance compared to the stock GTX 560 Ti. The PowerColor PCS++ version cranks up the shaders and clock and reduces that gap to less than 1% at factory settings. The HD 6870 takes a real hit in frame rates in this test, for some reason. Probably all those asteroids. The two insanely overclocked Fermi cards take the number one and two slots and the GTX 480 hangs on to the number one spot despite being one generation removed from the current graphics chips.

At the higher screen resolution of 1920x1200, the HD 6950 once again lags behind the new GTX 560 Ti card. Changing up the BIOS to the PCS++ configuration puts the PowerColor card back into the fray and it ties the performance of the base 560 Ti. The two overclocked Fermi cards pick up the first and second slots again, demonstrating good scaling with increasing core clocks. This benchmark suite may have recently been replaced with DX11-based tests, but in the fading days of DX10 it has been a very reliable and challenging benchmark for high-end video cards.

We need to look at some actual gaming performance to verify these results, so let's take a look in the next section, at how these cards stack up in the standard bearer for DX10 gaming benchmarks, Crysis.


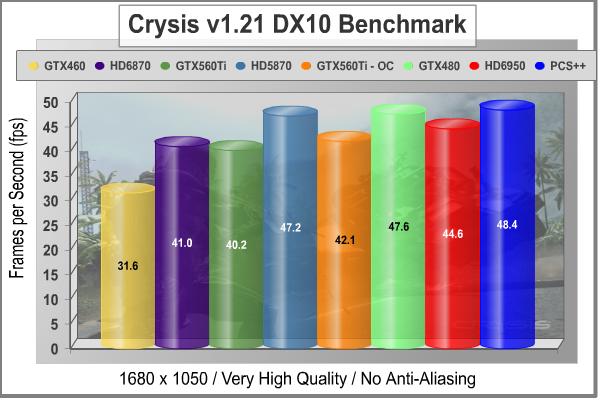

Crysis Performance Tests

Crysis uses a new graphics engine: the CryENGINE2, which is the successor to Far Cry's CryENGINE. CryENGINE2 is among the first engines to use the Direct3D 10 (DirectX 10) framework, but can also run using DirectX 9, on Vista, Windows XP and the new Windows 7. As we'll see, there are significant frame rate reductions when running Crysis in DX10. It's not an operating system issue; DX9 works fine in Windows 7, but DX10 cuts the frame rates in half.

Roy Taylor, Vice President of Content Relations at NVIDIA, has spoken on the subject of the engine's complexity, stating that Crysis has over a million lines of code, 1GB of texture data, and 85,000 shaders. To get the most out of modern multicore processor architectures, CPU intensive subsystems of CryENGINE2 such as physics, networking and sound, have been re-written to support multi-threading.

Crysis offers an in-game benchmark tool, which is similar to World in Conflict. This short test does place some high amounts of stress on a graphics card, since there are so many landscape features rendered. For benchmarking purposes, Crysis can mean trouble as it places a high demand on both GPU and CPU resources. Benchmark Reviews uses the Crysis Benchmark Tool by Mad Boris to test frame rates in batches, which allows the results of many tests to be averaged.

Low-resolution testing allows the graphics processor to plateau its maximum output performance, and shifts demand onto the other system components. At the lower resolutions Crysis will reflect the GPU's top-end speed in the composite score, indicating full-throttle performance with little load. This makes for a less GPU-dependent test environment, but it is sometimes helpful in creating a baseline for measuring maximum output performance. At the 1280x1024 resolution used by 17" and 19" monitors, the CPU and memory have too much influence on the results to be used in a video card test. At the widescreen resolutions of 1680x1050 and 1920x1200, the performance differences between video cards under test are mostly down to the cards themselves, but there is still some influence by the rest of the system components.
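One simple way to see why the higher resolutions shift the load onto the graphics card is to count the pixels rendered per frame. The Python sketch below does this for 1280x1024 and for the two widescreen test resolutions, 1680x1050 and 1920x1200 (the resolution used in the 3DMark tests). Pixel count is only a rough proxy for GPU load, and the names are illustrative.

```python
# Resolutions discussed in this review, as (width, height) in pixels.
RESOLUTIONS = {
    "1280x1024": (1280, 1024),
    "1680x1050": (1680, 1050),
    "1920x1200": (1920, 1200),
}


def pixels_per_frame(width, height):
    """Number of pixels the GPU has to fill for one full frame."""
    return width * height


# Use the medium test resolution as the reference point.
baseline = pixels_per_frame(*RESOLUTIONS["1680x1050"])

for label, (width, height) in RESOLUTIONS.items():
    pixels = pixels_per_frame(width, height)
    print(f"{label}: {pixels:>9,} pixels per frame "
          f"({pixels / baseline:.2f}x the 1680x1050 load)")
```

By this measure the step from 1680x1050 to 1920x1200 adds roughly 30% more pixels per frame, before any anti-aliasing is applied.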

With medium screen resolution and no MSAA dialed in, Crysis shows a completely different picture than 3DMark. Unlike many so-called TWIMTBP titles, Crysis has always run quite well on the ATI architecture, and the PowerColor Radeon HD 6950 PCS++ takes top honors away from the Green Team. Note that the HD 5870 and the GTX 480 are roughly equal here, which should tell you a little bit about how well AMD does in Crysis.

Crysis is one of those few games that stress the CPU almost as much as the GPU. As we increase the load on the graphics card, with higher resolution and AA processing, the situation may change. Remember all the test results in this article are with maximum allowable image quality settings, plus all the performance numbers in Crysis took a major hit when Benchmark Reviews switched over to the DirectX 10 API for all our testing. None of the cards are struggling at these low settings, except the GTX 460/1GB which is running an average frame rate that's too close to 30 FPS.

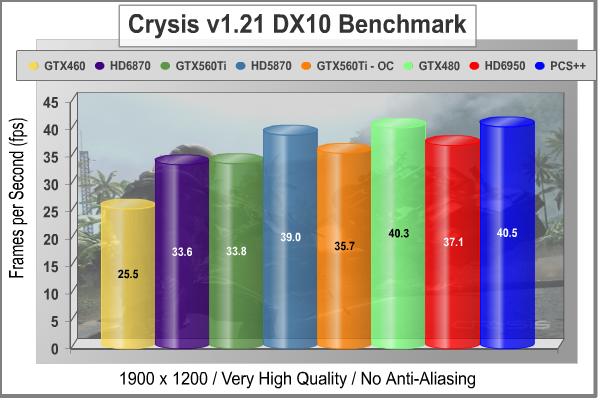

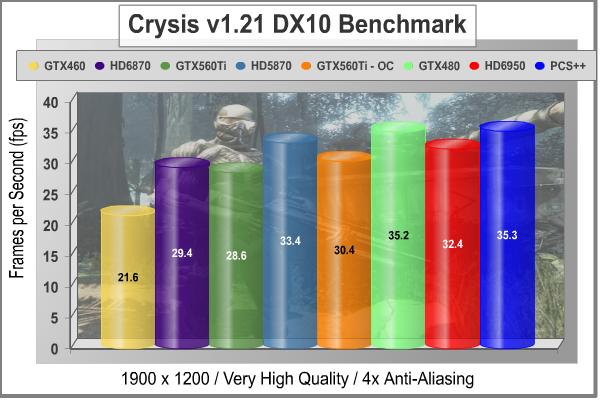

At 1920 x 1200 resolution, the relative rankings stay the same; the raw numbers just go down. Even with the increased load on the GPU, every card from the HD 6870 on up still gets over the 30 FPS hump, at least for average frame rates. Almost all of these high-end GPUs can muster up the muscle to play Crysis at high resolution with most of the bells and whistles turned on, much to everyone's relief. Can it play Crysis? Yes. The PowerColor HD 6950 PCS++ pulls top rank again, with the Radeon HD 5870 and the GeForce GTX 480 SOC not too far behind.

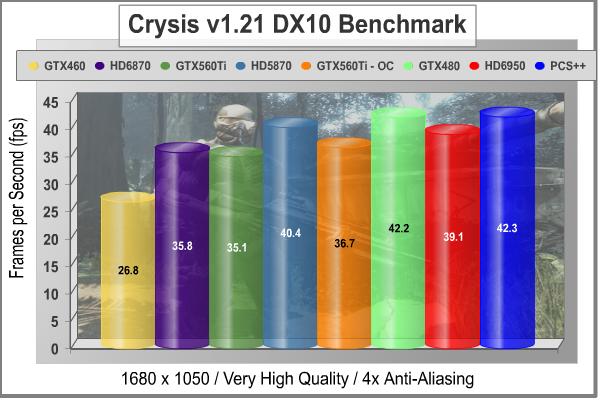

Now let's turn up the heat a bit on the ROP units, and add some Multi-Sample Anti-Aliasing. With 4x MSAA cranked in, the top cards lose about 5 FPS at 1680x1050 screen resolution but they manage to stay well above the 30 FPS line. The PowerColor PCS+ HD 6870 1GB GDDR5 just nips past the base GTX 560 Ti and stays within hailing distance of the same card overclocked to within an inch of its life, at 975 MHz. The Gigabyte GTX 480 SOC has also managed to do quite well with the additional anti-aliasing load. It's the only card that keeps up with the PowerColor HD 6950 PCS++, which has the advantage of the second BIOS settings driving it to near HD 6970 performance levels.

This is one of our toughest tests, at 1920 x 1200, maximum quality levels, and 4x AA. In the middle ranges, the HD 6870 hangs close to the performance leaders, and matches up quite well with the GeForce GTX 560 Ti, both in base form and with enhanced clocks. On this, the toughest of the four benchmark configurations, the Gigabyte GTX 480 SOC and the PowerColor Radeon HD 6950 PCS++ share the top spot in the 35 FPS range. The extra shaders in the PCS++ version give a 9% boost to the Radeon HD 6950 GPU.

Our next test is a relatively new one for Benchmark Reviews. It's a DirectX 10 game with all the stops pulled out. Just Cause 2 uses a brand new game engine called Avalanche Engine 2.0, which enabled the developers to create games of epic scale and with great variation across genres and artistic styles, for the next generation of gaming experiences. Sounds like fun, let's take a look...

| Graphics Card | Cores | Core Clock (MHz) | Shader Clock (MHz) | Memory Clock (MHz) | Memory | Interface |
|---|---|---|---|---|---|---|
| MSI GeForce GTX 460 (N460GTX Cyclone 1GD5/OC) | 336 | 725 | 1450 | 900 | 1.0 GB GDDR5 | 256-bit |
| MSI Radeon HD 6870 (R6870-2PM2D1GD5) | 1120 | 900 | N/A | 1050 | 1.0 GB GDDR5 | 256-bit |
| MSI GeForce GTX 560 Ti (N560GTX-Ti Twin Frozr II/OC) | 384 | 880 | 1760 | 1050 | 1.0 GB GDDR5 | 256-bit |
| PowerColor Radeon HD 5870 (PCS+ AX5870 1GBD5-PPDHG2) | 1600 | 875 | N/A | 1250 | 1.0 GB GDDR5 | 256-bit |
| PowerColor PCS++ Radeon HD6950 (AX6950 2GBD5-P22DHG) | 1408 | 800 | N/A | 1250 | 2.0 GB GDDR5 | 256-bit |
| Gigabyte GeForce GTX 480 (GV-N480SO-15I Super Over Clock) | 480 | 820 | 1640 | 950 | 1536 MB GDDR5 | 384-bit |

Just Cause 2 Performance Tests

"Just Cause 2 sets a new benchmark in free-roaming games with one of the most fun and entertaining sandboxes ever created," said Lee Singleton, General Manager of Square Enix London Studios. "It's the largest free-roaming action game yet with over 400 square miles of Panaun paradise to explore, and its 'go anywhere, do anything' attitude is unparalleled in the genre." In his interview with IGN, Peter Johansson, the lead designer on Just Cause 2 said, "The Avalanche Engine 2.0 is no longer held back by having to be compatible with last generation hardware. There are improvements all over - higher resolution textures, more detailed characters and vehicles, a new animation system and so on. Moving seamlessly between these different environments, without any delay for loading, is quite a unique feeling."

Just Cause 2 is one of those rare instances where the real game play looks even better than the benchmark scenes. It's amazing to me how well the graphics engine copes with the demands of an open world style of play. One minute you are driving through the jungles, the next you're diving off a cliff, hooking yourself to a passing airplane, and parasailing onto the roof of a high-rise building. The ability of the Avalanche Engine 2.0 to respond seamlessly to these kinds of dramatic switches is quite impressive. It's not DX11 and there's no tessellation, but the scenery goes by so fast there's no chance to study it in much detail anyway.

Although we didn't use the feature in our testing, in order to equalize the graphics environment between NVIDIA and ATI, the GPU water simulation is a standout visual feature that rivals DirectX 11 techniques for realism. There's a lot of water in the environment, which is based around an imaginary Southeast Asian island nation, and it always looks right. The simulation routines use the CUDA functions in the Fermi architecture to calculate all the water displacements, and those functions are obviously not available when using an ATI-based video card. The same goes for the Bokeh setting, which is an obscure Japanese term for out-of-focus rendering. Neither of these techniques uses PhysX, but they do use specific computing functions that are only supported by NVIDIA's proprietary CUDA architecture.

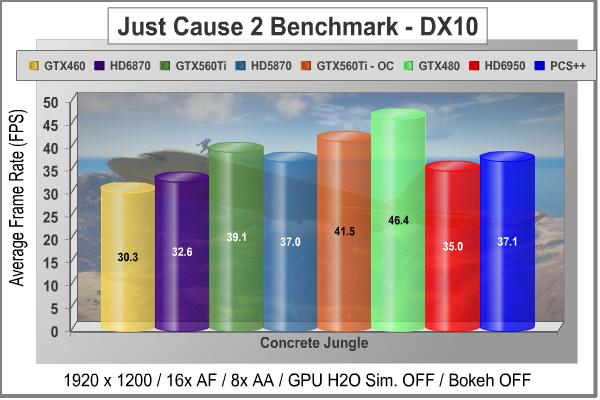

There are three scenes available for the in-game benchmark, and I used the last one, "Concrete Jungle", because it was the toughest and it also produced the most consistent results. That combination made it an easy choice for the test environment. All Advanced Display Settings were set to their highest level, and Motion Blur was turned on as well.

The results for the Just Cause 2 benchmark show a bit of a mixed bag for the typical Red v. Green competition. They look similar to the ones we saw for the New Calico test on 3DMark Vantage, just compressed a bit. Obviously, they use completely different rendering engines, but both tests have massive amounts of environment to render. The Gigabyte GTX 480 SOC takes the top spot, and the GTX 560 Ti hangs a little closer than the rest of the cards. The Radeon HD 6950 in its base configuration is almost 5 FPS behind the base GeForce GTX 560 Ti; the 35-40 FPS range is not an area where you can give up that type of advantage and not have it show up in real game play. On the whole, I'd call this a pretty well-behaved benchmark, and the game's a blast, too. It's a shame the HD 6900 series doesn't get along with this game; perhaps it's a driver issue.

Let's take a look at one more popular gaming benchmark, which was released recently with PhysX support, yet it relies on DirectX 9 features. It's a wonderful blend of modern graphics technology and classic crime scenes, called Mafia II.

Mafia II DX9+SSAO Benchmark Results

Mafia II is a single-player third-person action shooter developed by 2K Czech for 2K Games, and is the sequel to Mafia: The City of Lost Heaven, released in 2002. Players assume the life of World War II veteran Vito Scaletta, the son of a small Sicilian family that immigrates to Empire Bay. Growing up in the slums of Empire Bay teaches Vito about crime, and he's forced to join the Army in lieu of jail time. After sustaining wounds in the war, Vito returns home and quickly finds trouble as he again partners with his childhood friend and accomplice Joe Barbaro. Vito and Joe combine their passion for fame and riches to take on the city, and work their way to the top in Mafia II.

Mafia II is a DirectX 9 PC video game built on 2K Czech's proprietary Illusion game engine, which succeeds the LS3D game engine used in Mafia: The City of Lost Heaven. In our Mafia-II Video Game Performance article, Benchmark Reviews explored characters and gameplay while illustrating how well this game delivers APEX PhysX features on both AMD and NVIDIA products. Thanks to APEX PhysX extensions that can be processed by the system's CPU, Mafia II offers gamers equal access to high-detail physics regardless of video card manufacturer. Equal access is not the same thing as equal performance, though.

With PhysX technology turned off, both AMD and NVIDIA are on a level playing field in this test. In contrast to many gaming scenes, where other-worldly characters and environments allow the designers to amp up the detail, Mafia II uses human beings wearing ordinary period-correct clothes and natural scenery. Just as high-end audio equipment is easiest to judge using that most familiar of sounds, the human voice, graphics hardware is really put to the test when rendering things that we have real experience with. The drape of a woolen overcoat is a deceptively simple construct; easy to understand and implement, but very difficult to get perfect.

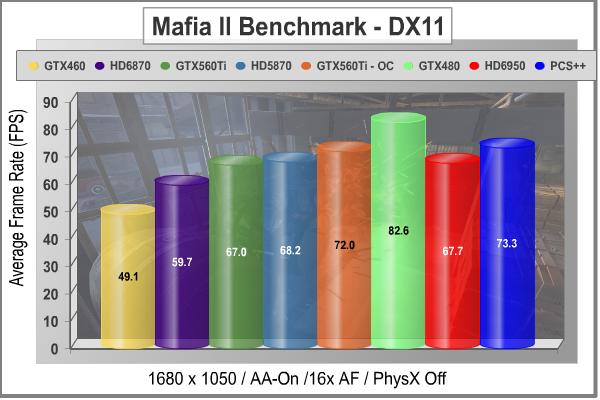

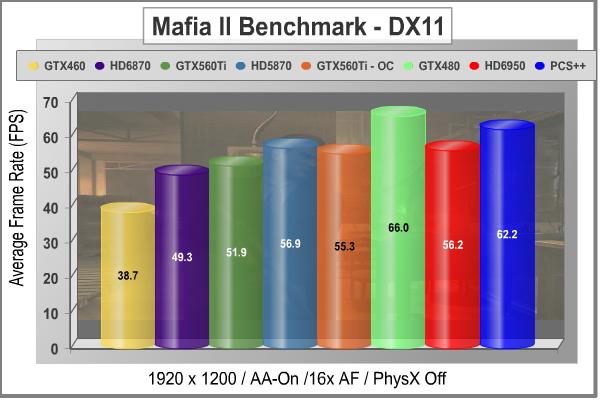

Despite the fact that Mafia II makes excellent use of PhysX and 3D as described in our NVIDIA APEX PhysX Efficiency: CPU vs GPU article, both areas where NVIDIA has an edge, this test seems equally suited to either AMD or NVIDIA solutions. Some of you are probably howling at that statement, because it's so difficult to imagine turning PhysX off once you've experienced it. The Radeon HD 6950 has a very slight edge on the stock GeForce GTX 560 Ti, and when it gets a shot in the arm from the enhanced BIOS, it even wins out over the same GTX 560 Ti card with a huge overclock of 975 MHz. The older HD 5870 still does well in this test, which is not completely surprising since this benchmark is limited to DX9 function calls.

At the higher screen resolution of 1920x1200, the NVIDIA cards start to lose some ground relative to the ATI clan. For a game clearly developed using NVIDIA hardware, it surprises me a bit to see the Radeon series doing so well. Of course, I DO miss the PhysX features, which are always turned off during comparison testing. Since Mafia II can't rely on tessellation for enhancing realism, it leans heavily on PhysX. If tessellation were in the mix, the new and improved tessellation engines in the HD 6870 and the GTX 560 Ti would be pushing those numbers up. Here is a game where brute force, meaning the number of shader processors, pays off and you can see that in two places. The strong performance by the good old HD 5870 is one, and the 11% bump in average frame rates when the extra shaders are enabled on the PCS++ HD 6950 is the second.

Our next benchmark of the series is not for the faint of heart. Lions and tigers - OK, fine. Guys with guns - I can deal with that. But those nasty little spiders......NOOOOOO! How did I get stuck in the middle of a deadly fight between Aliens vs. Predator anyway? Check out the results from one of our toughest new DirectX 11 benchmarks in the next section.

Aliens vs. Predator Test Results

Rebellion, SEGA and Twentieth Century FOX have released the Aliens vs. Predator DirectX 11 Benchmark to the public. As with many of the already released DirectX 11 benchmarks, the Aliens vs. Predator DirectX 11 benchmark leverages your DirectX 11 hardware to provide an immersive game play experience through the use of DirectX 11 Tessellation and DirectX 11 Advanced Shadow features.

In Aliens vs. Predator, DirectX 11 Geometry Tessellation is applied in an effective manner to enhance and more accurately depict HR Giger's famous Alien design. Through the use of a variety of adaptive schemes, applying tessellation when and where it is necessary, the perfect blend of performance and visual fidelity is achieved with at most a 4% change in performance.

DirectX 11 hardware also allows for higher quality, smoother and more natural looking shadows as well. DirectX 11 Advanced Shadows allow for the rendering of high-quality shadows, with smoother, artifact-free penumbra regions, which otherwise could not be realized, again providing for a higher quality, more immersive gaming experience.

Benchmark Reviews is committed to pushing the PC graphics envelope, and whenever possible we configure benchmark software to its maximum settings for our tests. In the case of Aliens vs. Predator, all cards were tested with the following settings: Texture Quality-Very High, Shadow Quality-High, HW Tessellation & Advanced Shadow Sampling-ON, Multi Sample Anti-Aliasing-4x, Anisotropic Filtering-16x, Screen Space Ambient Occlusion (SSAO)-ON. You will see that this is a challenging benchmark, with all the settings turned up and a screen resolution of 1920 x 1200; it takes an HD 5870 card to achieve an average frame rate higher than 30 FPS.

Now we get into the full DirectX 11 only benchmarks, so we're looking at the full potential for graphics rendering that's available on only the latest generation of video cards. AvP is a tough benchmark, but it has been a fair one so far, and it's very useful for testing the newest graphics hardware. The relatively high frame rates you see above are a testament to the very high performance of the latest and greatest cards, especially when paired up in SLI or CrossFireX.

The Radeon HD 6950 and its enhanced brother, the PCS++ Radeon HD 6950, both do yeoman's duty in Aliens vs. Predator. They beat both the standard and overclocked versions of the new GTX 560 Ti, and the PCS++ version comes within 3 FPS of the highly overclocked Gigabyte GTX 480 SOC. This is definitely one of those games that need the right blend of hardware elements to come together for peak performance, and the Radeon HD 6950 seems to have the right mix. On this test, when using anything less than the top hardware, some scenes have a jumpy quality to them. This was evident on all the cards below the overclocked MSI N560GTX-Ti. This game needs shaders more than tessellation, as the big jump in performance for the PCS++ BIOS settings on the PowerColor card proves, with 1156 Cores on the job.

In our next section, Benchmark Reviews looks at one of the newest and most popular games, Battlefield: Bad Company 2. The game lacks a dedicated benchmarking tool, so we'll be using FRAPS to measure frame rates within portions of the game itself.

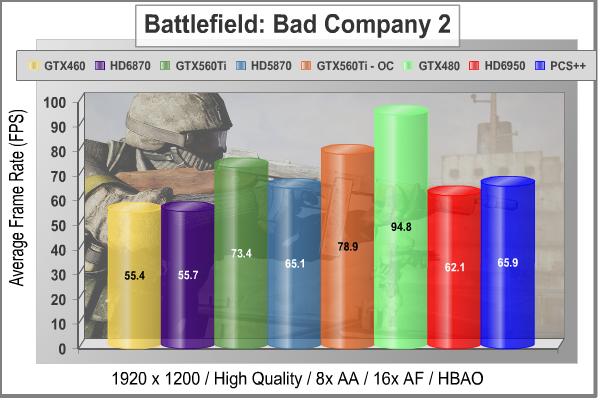

Battlefield: Bad Company 2 Test Results

The Battlefield franchise has been known to demand a lot from PC graphics hardware. DICE (Digital Illusions CE) built Battlefield: Bad Company 2 on its Frostbite-1.5 game engine with the Destruction-2.0 feature set. The game features destructible environments using Frostbite Destruction-2.0, and adds gravitational bullet drop effects for projectiles shot from weapons at a long distance. The Frostbite-1.5 game engine used in Battlefield: Bad Company 2 consists of DirectX-10 primary graphics, with improved performance and softened dynamic shadows added for DirectX-11 users. At the time Battlefield: Bad Company 2 was published, DICE was also working on the Frostbite-2.0 game engine. This upcoming engine will include native support for DirectX-10.1 and DirectX-11, as well as parallelized processing support for 2-8 parallel threads. This will improve performance for users with an Intel Core-i7 processor.

In our benchmark tests of Battlefield: Bad Company 2, the first three minutes of action in the single-player raft night scene are captured with FRAPS. Relative to the online multiplayer action, these frame rate results are nearly identical to daytime maps with the same video settings.
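For readers who want to reduce their own timed captures to a single number, here is a minimal Python sketch of one way to do it. It assumes the capture is available as a list of cumulative frame timestamps in milliseconds; the function names and the sample numbers are hypothetical, not taken from our test data, and this is not a description of how FRAPS computes its own figures.

```python
def average_fps(timestamps_ms):
    """Average FPS over a capture, from cumulative frame timestamps in ms."""
    if len(timestamps_ms) < 2:
        raise ValueError("need at least two frame timestamps")
    elapsed_s = (timestamps_ms[-1] - timestamps_ms[0]) / 1000.0
    if elapsed_s <= 0:
        raise ValueError("timestamps must be increasing")
    # N timestamps bound N-1 frame intervals.
    return (len(timestamps_ms) - 1) / elapsed_s


def min_fps(timestamps_ms):
    """Slowest instantaneous frame rate, taken from the longest frame interval."""
    intervals = [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]
    return 1000.0 / max(intervals)


# Hypothetical capture: four frame intervals, one of them twice as long.
sample = [0.0, 16.0, 32.0, 64.0, 80.0]
print(f"average: {average_fps(sample):.1f} FPS")
print(f"minimum: {min_fps(sample):.2f} FPS")
```

The average here is frames divided by elapsed time, so a single long frame drags the minimum down far more than it moves the average, which is why an average above 60 FPS can still hide the occasional stutter.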

This is a game that favors the Green Team, without a doubt. Across the board, you can see competitive matchups where the NVIDIA card puts up better numbers. The Radeon HD 6950 and its PCS++ big brother lose out to the NVIDIA GTX 560 Ti by more than 10 FPS. The gameplay doesn't suffer, because the average is above 60 FPS for even the base HD 6950. This is not as tough a benchmark as some others; the developers trod a fine line between juicing up the visuals and keeping the performance levels up. This benchmark does not utilize tessellation, so as in our DX10 testing, the strength of the newest GPUs in this area is not having an impact here. Don't worry; we'll see some results later that will show clear differences between the generations with some tessellation-heavy titles.

The little-documented feature in the basic game setup, which allows the application to choose which DirectX API it uses during the session, is not a factor here. All of the tested cards here are DX11-capable, and the game was running in DX11 mode for all the test results reported here. Even though this is primarily developed as a DX10 game, there are DX11 features incorporated in BF:BC2, like softened shadows. That one visual enhancement takes a small but measurable toll on frame rates. It doesn't have as big an impact as aggressive use of tessellation would, either from the visuals standpoint or the computing perspective.

In the next section we use one of my favorite games, DiRT-2, to look at DX11 performance. Life isn't ALL about shooting aliens; sometimes you just need to get out of the city and drive...!

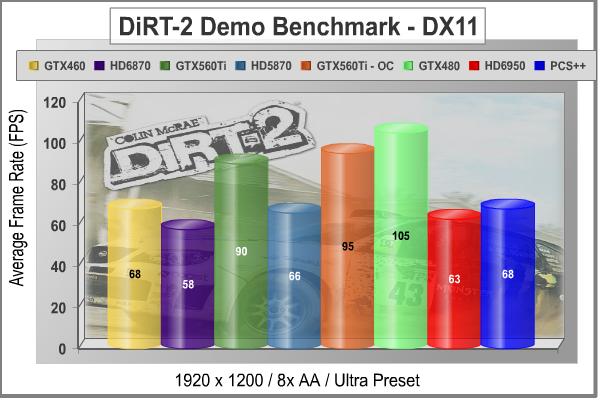

DiRT-2 Demo DX11 Benchmark Results

DiRT-2 features a roster of contemporary off-road events, taking players to diverse and challenging real-world environments. This World Tour has players competing in aggressive multi-car, and intense solo races at extraordinary new locations. Everything from canyon racing and jungle trails to city stadium-based events. Span the globe as players unlock tours in stunning locations spread across the face of the world. USA, Japan, Malaysia, Baja Mexico, Croatia, London, and more venues await, as players climb to the pinnacle of modern competitive off-road racing.

Multiple disciplines are featured, encompassing the very best that modern off-roading has to offer. Powered by the third generation of the EGO™ Engine's award-winning racing game technology, DiRT-2 benefits from tuned-up car-handling physics and new damaged engine effects. It showcases a spectacular new level of visual fidelity, with cars and tracks twice as detailed as those seen in GRID. The DiRT-2 garage houses a collection of officially licensed rally cars and off-road vehicles, specifically selected to deliver aggressive and fast-paced racing. Covering seven vehicle classes, players are given the keys to powerful vehicles right away. In DiRT-2 the opening drive is the Group N Subaru, essentially making the ultimate car from the original game the starting point in the sequel, and the rides just get even more impressive as you rack up points.

The primary contribution that DirectX-11 makes to the DiRT-2 Demo benchmark is in the way water is displayed when a car is passing through it, and in the way cloth items are rendered. The water graphics are pretty obvious, and there are several places in the Moroccan race scene where cars are plowing through large and small puddles. Each one is unique, and they are all believable, especially when more than one car is in the scene. The cloth effects are not as obvious, except in the slower-moving menu screens; when there is a race on, there's precious little time to notice the realistic furls in a course-side flag. I should also note that the flags are much more noticeable in the actual game than in the demo, so they add a little more realism there that is absent from the benchmark.

On a side note, I appreciate the fact that the demo's built-in benchmark has variable game play. I know it's lame, but I almost always watch it intently, just to see how well "my" car is being driven. So far, my finest telekinetic efforts have yielded a best finish of second place!

The race winner is the GTX 480 SOC, by a good 10 frames per second, on average. For a title that was developed on AMD hardware, this is a somewhat surprising result, or it would be if I hadn't already seen the GTX 460 pick a fight with every high-end card it encountered. The entire Radeon lineup suffers by comparison in this benchmark; the HD 6870 and HD 5870 results look pretty lackluster and the HD 6950 doesn't put much distance between it and the lower-cost cards. The extra shaders definitely help, as the HD 5870 demonstrates, but the GTX 560 Ti really steals the show here, in terms of performance vs. cost. Fortunately, every setup I tested with here did a great job rendering all of the various scenes. As I said above, this is one of my favorite games, and I can confirm that the results above are not far off from real gameplay. There has been some concern in the community about the veracity of the Demo Benchmark compared to the in-game one, and/or FRAPS results. Despite that, I like to use the Demo version because everyone has access to it, and can easily compare results obtained with their own hardware.

In the next section we'll take a look at one of the newest benchmarking tools, H.A.W.X. 2. It's a high flying aerial adventure filled with lots of tessellated terrain, blown-up airplane bits, and masses of blue sky as a background.

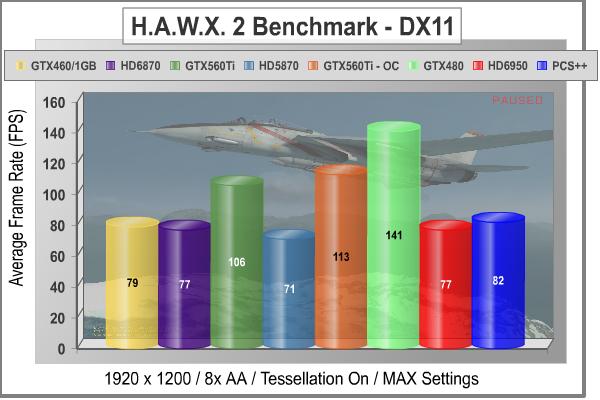

H.A.W.X. 2 DX11 Benchmark Results

H.A.W.X. 2 has been optimized for DX11 enabled GPUs and has a number of enhancements to not only improve performance with DX11 enabled GPUs but also greatly improve the visual experience while taking to the skies.

- Level maps are 128 km per dimension, creating a level area of 16384 km². All of the terrain in this area is rendered using a powerful tessellation implementation.
- The game uses a hardware terrain tessellation method that allows a high number of detailed triangles to be rendered entirely on the GPU when near the terrain in question. This allows for a very low memory footprint and relies on the GPU power alone to expand the low res data to highly realistic detail.
- Quad patches with multiple displacement maps aim to render 6-pixel-wide triangles typically creating 1.5 Million triangles per frame not including planes, trees, and buildings!
- The game uses bi-cubic height filtering and fractal noise to give realistic detail at this grand scale. The wavelength and amplitude of the fractal noise is carefully tuned for maximum realism on each level working with the complex tessellation shaders to ensure highest level detail without cracks in the terrain surface.
- These factors make H.A.W.X. 2 the perfect title for benchmarking the current and future generation of DX11 enabled GPUs.

The H.A.W.X. 2 benchmark test is not quite the tessellation monster that Unigine Heaven is. It is supposed to represent an actual game, after all. However, the developers have taken full advantage of the DirectX 11 technology to pump up the realism in this new title. The scenery on the ground in particular is very detailed and vividly portrayed, and there's a lot of it that goes by the window of the F-35 Lightning that is your point of view. The blue sky, not so much....

The enhanced ability of the NVIDIA GPU designs to handle tessellation is quite evident here. This benchmark was launched by NVIDIA and AMD had limited access during game development, so they were pretty far behind with regard to drivers. This test was run with the latest v11.1 Hotfix A drivers and each of the Radeon cards got about a 10 FPS boost compared to their earlier performance. The GTX 480 ends up on the top of the pile in this test, because it has the most shaders and the GTX 4xx designs were heavily focused on tessellation performance. The HD 6950 doesn't handle this game any better than the HD 6870, which surprised me.

Let's take a look at another DX11 benchmark, a fast-paced scenario on a Lost Planet called E.D.N. III. The dense vegetation in "Test A" is almost as challenging as it was in Crysis, and now we have tessellation and soft shadows thrown into the mix via DirectX 11.

Lost Planet 2 DX11 Benchmark Results

A decade has passed since the first game, and the face of E.D.N. III has changed dramatically. Terraforming efforts have been successful and the ice has begun to melt, giving way to lush tropical jungles and harsh unforgiving deserts. Players will enter this new environment and follow the exploits of their own customized snow pirate on their quest to seize control of the changing planet.

- 4-player co-op action: Team up to battle the giant Akrid in explosive 4-player co-operative play. Teamwork is the player's key to victory as the team is dependent on each other to succeed and survive.
- Single-player game evolves based on players' decisions and actions
- Deep level of character customization: Players will have hundreds of different ways to customize their look to truly help them define their character on the battlefield both on- and offline. Certain weapons can also be customized to suit individual player style.
- Beautiful massive environments: Capcom's advanced graphics engine, MT Framework 2.0, will bring the game to life with the next step in 3D fidelity and performance.
- Massive scale of enemies: Players' skill on the battlefield and work as a team will be tested like never before against the giant Akrid. Players will utilize teamwork tactics, new weapons and a variety of vital suits (VS) to fight these larger-than-life bosses.
- Rewards System: Players will receive rewards for assisting teammates and contributing to the team's success
- Multiplayer modes and online ranking system
- Exciting new VS features: Based on fan feedback, the team has implemented an unbelievable variety of Vital Suits and new ways to combat VS overall. The new VS system will have a powerful impact on the way the player takes to the war zone in Lost Planet 2

Test A:

The primary purpose of Test A is to give an indication of typical game play performance of the PC running Lost Planet 2 (i.e. if you can run Mode A smoothly, the game will be playable at a similar condition). In this test, the character's motion is randomized to give a slightly different outcome each time.

In Test A of Lost Planet 2, we see a familiar pattern. That is, the newest games are implementing the latest software technology and the newest graphics cards are optimized to handle exactly that. The HD 6950 does a bit better here, compared to the HD 6870 and HD 5870, than it did with the H.A.W.X. 2 benchmark. I did not see the usual one or two "slowdowns" during the test with the PowerColor PCS++ HD 6950 that I have seen before, with lesser AMD cards. They've always remained during the second and third runs of the benchmark, so it wasn't a map loading issue. It occurs at the beginning of scene two which is the most demanding, no matter what card is installed. In fact it's usually tougher than Test B. For simplicity's sake, we are reporting the average result, as calculated by the benchmark application. It is not an average of the individual scores reported for the three scenes.
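To see why an overall average can differ from the average of the three scene scores, here is a minimal Python sketch. It assumes the overall figure is total frames divided by total time, which is only one plausible way an application might calculate it; we don't know how the Lost Planet 2 benchmark does it internally. The frame counts and scene lengths are hypothetical, chosen only to make the arithmetic easy to follow.

```python
def overall_fps(scenes):
    """Total frames over total time. scenes is a list of (frames, seconds)."""
    total_frames = sum(frames for frames, _ in scenes)
    total_seconds = sum(seconds for _, seconds in scenes)
    return total_frames / total_seconds


def mean_of_scene_fps(scenes):
    """Plain arithmetic mean of the per-scene frame rates."""
    return sum(frames / seconds for frames, seconds in scenes) / len(scenes)


# Hypothetical run: the slow middle scene also lasts twice as long.
scenes = [(1800, 30.0), (1200, 60.0), (1500, 30.0)]
print(f"overall:       {overall_fps(scenes):.1f} FPS")
print(f"mean of three: {mean_of_scene_fps(scenes):.1f} FPS")
```

With these made-up numbers the per-scene rates are 60, 20 and 50 FPS, so their mean is about 43.3 FPS, while the time-weighted figure is 37.5 FPS. A long, demanding scene pulls the overall number down more than a simple mean of the scene scores would suggest.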

The new GeForce GTX 560 Ti is the most impressive performer in this challenging test, providing the best frame rates for the money. The results for the Radeon HD 5870 show why you don't want to use anything but the most recent DX11-capable hardware for these new games. The developers are really warming up to the enhanced visual tools that are available in DirectX 11, and hopefully we'll see more titles like this that make the unreal, real. As long as you are happy with the story lines, characters, scoring systems, etc. of these new games, you can enjoy a level of realism and performance that was only hinted at with the first generation of DX11 software and hardware. I keep thinking of some of the early titles as "tweeners", as they were primarily developed using the DirectX 10 graphics API, and then some DX11 features were added right before the product was released. It was a nice glimpse into the technology, but the future is now.

Test B:

The primary purpose of Test B is to push the PC to its limits and to evaluate the maximum performance of the PC. It utilizes many functions of DirectX 11 resulting in a very performance-orientated, very demanding benchmark mode.

Test B shows a broadly similar ranking to Test A, but the Radeon HD 5870 makes a bit of a comeback. The extra shaders in the PCS++ configuration help the HD 6950 sneak past the 30 FPS line with a 25% boost compared to the performance of the HD 6870. Don't forget that the HD 6870 is not "half" of a 6890, like the HD 5xxx series was, where every step up in the product line was a doubling of the die size and transistor count. The 6870 has 1120 shader cores, compared to the 1408 in the 6950, not even close to a 1:2 ratio. So don't expect massive performance gains by moving up to the HD 69xx series.
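The shader-count comparison is easy to check with a few lines of Python. The sketch below uses the core counts and core clocks listed in our graphics card table; the "shaders times clock" figure is a crude proxy that we are assuming purely for illustration, and it ignores architecture, memory bandwidth and driver differences.

```python
# Core counts and core clocks (MHz) as listed in the graphics card table.
cards = {
    "HD 6870": {"shaders": 1120, "core_mhz": 900},
    "HD 6950": {"shaders": 1408, "core_mhz": 800},
}


def shader_ratio(a, b):
    """Ratio of shader counts, card a relative to card b."""
    return cards[a]["shaders"] / cards[b]["shaders"]


def throughput_ratio(a, b):
    """Crude proxy (an assumption): shader count multiplied by core clock."""
    def proxy(name):
        return cards[name]["shaders"] * cards[name]["core_mhz"]
    return proxy(a) / proxy(b)


print(f"shader ratio:    {shader_ratio('HD 6950', 'HD 6870'):.2f}x")
print(f"shaders x clock: {throughput_ratio('HD 6950', 'HD 6870'):.2f}x")
```

The shader ratio works out to roughly 1.26x, a long way from the 2x steps of the previous generation, and the gap narrows further on this rough proxy once the lower listed core clock of the HD 6950 is taken into account.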

The sea monster (I can't quite say "River Monster" for some reason...it reminds me of River Dance) is a prime candidate for tessellation, and given the fact that it is in the foreground for most of the scene, the full level of detail is usually being displayed. The water effects also contribute to the graphics load in this test, making it just a little bit tougher than Test A, overall.

In our next section, we are going to continue our DirectX 11 testing with a look at our most demanding DX11 benchmarks, straight from the depths of Moscow's underground rail system and the studios of 4A Games in Ukraine. Let's take a peek at what post-apocalyptic Moscow looks like in the year 2033.

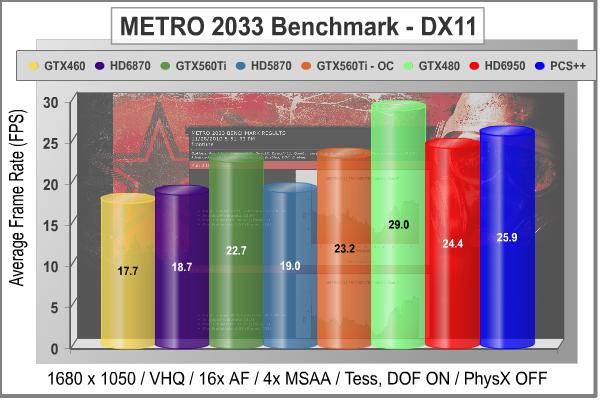

METRO 2033 DX11 Benchmark Results

Metro 2033 is an action-oriented video game that combines survival horror and first-person shooter elements. The game is based on the novel Metro 2033 by Russian author Dmitry Glukhovsky. It was developed by 4A Games in Ukraine and released in March 2010 for Microsoft Windows. Metro 2033 uses the 4A game engine, developed by 4A Games. The 4A Engine supports DirectX 9, 10, and 11, along with NVIDIA PhysX and GeForce 3D Vision.

The 4A engine is multi-threaded: only PhysX has a dedicated thread, and everything else uses a task model without any pre-conditioning or pre/post-synchronizing, which allows tasks to run in parallel. The engine can use a deferred shading pipeline, uses tessellation for greater performance, and offers HDR (complete with blue shift), real-time reflections, color correction, film grain and noise, and multi-core rendering.

Metro 2033 features superior volumetric fog, double PhysX precision, object blur, sub-surface scattering for skin shaders, parallax mapping on all surfaces, and greater geometric detail with less aggressive LODs. Through PhysX, the engine supports destructible environments, cloth and water simulations, and particles that can be fully affected by environmental factors.

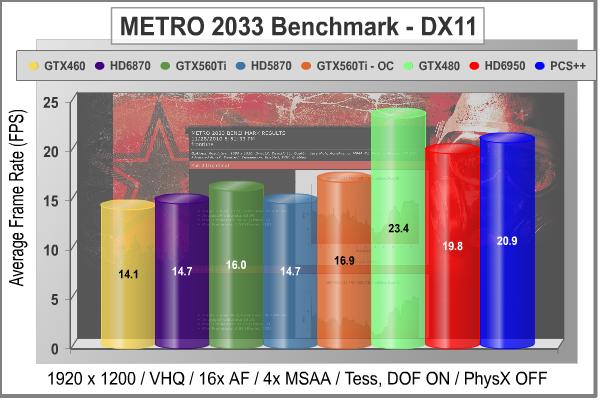

NVIDIA has been diligently working to promote Metro 2033, and for good reason: it is the most demanding PC video game we've ever tested. When an overclocked GeForce GTX 480 struggles to produce 29 FPS, you know that only the strongest graphics processors will generate playable frame rates. All of my tests use the in-game benchmark that was added to the game as DLC earlier this year. Advanced Depth of Field and Tessellation effects are enabled, but the advanced PhysX option is disabled to provide equal load to both AMD and NVIDIA cards. All tests are run with 4x MSAA, which produces the higher load of the two anti-aliasing choices.

The GTX 480 SOC from Gigabyte gets the top spot again with a respectable frame rate of 29 FPS, and the HD 6950 PCS++ pair hold down second and third place. That may sound low, but METRO 2033 is a punishing graphics load, and these are very good results for a single card. The PowerColor Radeon HD 6950 PCS++ does very well in this benchmark, especially compared to the GTX 560 Ti, which only gained 0.5 FPS on a very substantial overclock. Once again, PhysX is disabled for all testing, although it only exacted about a 2 FPS penalty when it was enabled with an NVIDIA card installed. IMHO, the minor hit in frame rates is fully justified in terms of the additional realism that PhysX imparts to the gameplay. It adds a lot more credibility to the graphics than any amount of anti-aliasing, no matter what type...

At the higher screen resolution of 1920x1200, the PowerColor HD 6950 PCS++ really pulls away from the GTX 560 Ti card. The extra shaders and the 2 GB of memory really help out with this game. It's a strange coincidence that the Radeon HD 6950 puts in its best performance on the toughest benchmark we have in the whole test suite. These are all barely playable frame rates, however. It takes a bigger card than we have in the mix today to play this game with all the stops pulled out, or a multi-GPU setup.

In our next section, we complete our DirectX 11 testing with a look at an unusual DX11 benchmark, straight from mother Russia and the studios of Unigine. Their latest benchmark is called "Heaven", and it has some very interesting and non-typical graphics. So, let's take a peek at what Heaven v2.1 looks like.


Unigine Heaven 2.1 Benchmark Results

The Unigine "Heaven 2.1" benchmark is a free, publicly available tool that showcases DirectX 11 graphics capabilities on Windows 7 or updated Vista operating systems. It reveals the enchanting magic of floating islands with a tiny village hidden in the cloudy skies. In interactive mode, you can explore this intricate world yourself. With its advanced renderer, Unigine is one of the first to showcase art assets with tessellation, using the technology to its full extent and demonstrating how it can enrich 3D gaming.

The distinguishing feature of the Unigine Heaven benchmark is hardware tessellation, a scalable technology that automatically subdivides polygons into smaller and finer pieces, so that developers can give their games a more detailed look almost free of charge in terms of performance. Thanks to this procedure, the detail of the rendered image finally approaches the boundary of veridical visual perception. The "Heaven" benchmark offers the following key features:

- Native support of OpenGL, DirectX 9, DirectX 10, and DirectX 11

- Comprehensive use of tessellation technology

- Advanced SSAO (screen-space ambient occlusion)

- Volumetric cumulonimbus clouds generated by a physically accurate algorithm

- Dynamic simulation of changing environment with high physical fidelity

- Interactive experience with fly/walk-through modes

- ATI Eyefinity support
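To give a feel for the "subdivision of polygons into smaller and finer pieces" described above, here is a minimal CPU-side sketch in Python. It is purely illustrative: the function names are made up for this example, it uses simple midpoint subdivision of a single triangle, and it is not how Heaven or DirectX 11 tessellation hardware actually works.

```python
from typing import List, Tuple

Point = Tuple[float, ...]
Triangle = Tuple[Point, Point, Point]


def midpoint(a: Point, b: Point) -> Point:
    """Return the point halfway between a and b."""
    return tuple((x + y) / 2 for x, y in zip(a, b))


def subdivide(tri: Triangle) -> List[Triangle]:
    """Split one triangle into four smaller ones using edge midpoints."""
    a, b, c = tri
    ab, bc, ca = midpoint(a, b), midpoint(b, c), midpoint(c, a)
    return [(a, ab, ca), (ab, b, bc), (ca, bc, c), (ab, bc, ca)]


def tessellate(tris: List[Triangle], levels: int) -> List[Triangle]:
    """Apply midpoint subdivision 'levels' times to every triangle."""
    for _ in range(levels):
        tris = [small for t in tris for small in subdivide(t)]
    return tris


# One hypothetical base triangle; each level quadruples the triangle count.
base = [((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))]
for level in range(4):
    print(level, len(tessellate(base, level)))
```

Each extra level multiplies the triangle count by four, which is why heavier tessellation settings load the GPU so quickly.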

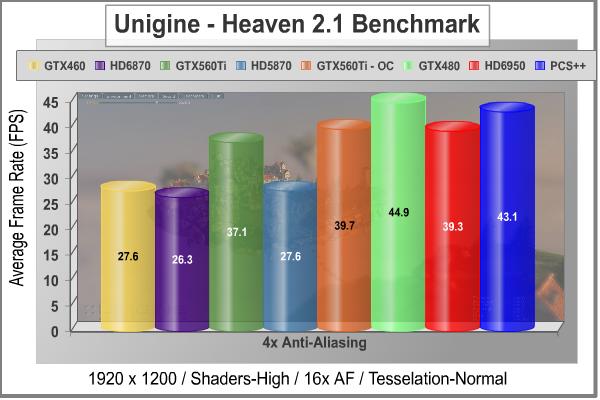

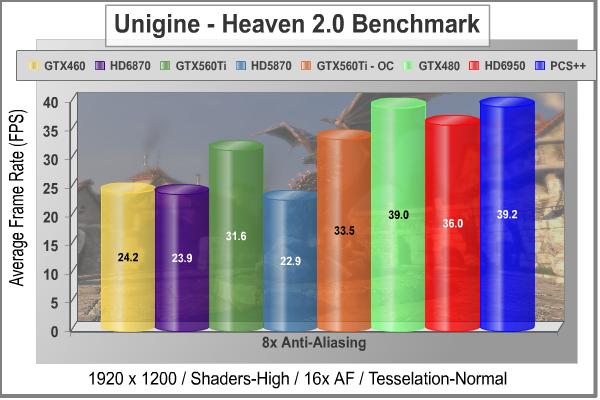

Starting off with the lighter load of 4x MSAA, we see the Gigabyte GTX 480 SOC taking the single-GPU crown, with the Radeon HD 6950 and GeForce GTX 560 Ti trading blows for second and third place. Even in the "normal" tessellation mode, this is a graphics test that really shows off the full impact of this DirectX 11 technology. The first-generation Fermi architecture has so much computing power available for tessellation that it's no surprise to see the card doing so well here. The same goes for the GTX 560 Ti, but the HD 6950 PCS++, with its special BIOS settings and a revamped tessellation engine, gets much closer to the performance of the GTX 480 in single-GPU mode. There is no jerkiness to the display at this resolution with the top GPUs represented here; now that I've seen the landscape go by a couple hundred times, I can spot the small stutters pretty easily. This test was run with 4x anti-aliasing; let's see how the cards stack up when we increase MSAA to the maximum level of 8x.

Increasing the anti-aliasing just improved the excellent performance of the PowerColor Radeon HD 6950 PCS++, relative to all of the other cards in this test. There's no denying that the Fermi chip is a killer when called upon for tessellation duty, but the HD 6950 does surprisingly well in this benchmark. The GTX 560 Ti comes in third place, even with its excellent overclocking performance; the HD 6950 beats it by several FPS in stock configuration, and the PCS++ variant pulls out a slightly bigger lead. I honestly never thought that AMD could beat out NVIDIA on this test, because the Fermi chips are so aggressive in their tessellation performance.

In our next section, we investigate the thermal performance of the PowerColor PCS++ HD6950 2GB GDDR5 video card, and see how well this non-reference cooler works on this new top class of GPU.


PowerColor PCS++ HD 6950 Temperatures

It's hard to know exactly when the first video card got overclocked, and by whom. What we do know is that it's hard to imagine a computer enthusiast or gamer today that doesn't overclock their hardware. Of course, not every video card has the head room. Some products run so hot that they can't suffer any higher temperatures than they generate straight from the factory. This is why we measure the operating temperature of the video card products we test.

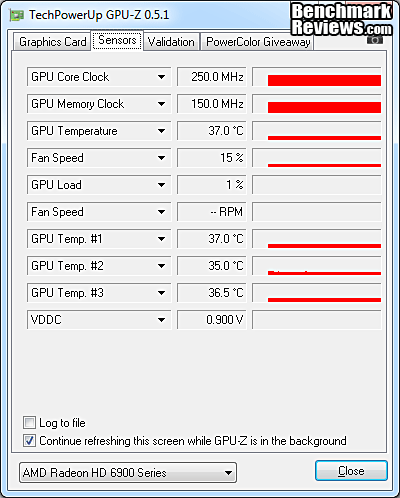

To begin testing, I use GPU-Z to measure the temperature at idle as reported by the GPU. Next I use FurMark 1.8.2 to generate maximum thermal load and record GPU temperatures at high-power 3D mode. The ambient room temperature remained stable at 25C throughout testing. I have a ton of airflow into the video card section of my benchmarking case, with a 200mm side fan blowing directly inward, so that helps alleviate any high ambient temps.

I tested the PowerColor PCS++ Radeon HD6950 2GB GDDR5 video card with both BIOS settings, figuring that adding more shader cores and a higher clock would have a definite effect on the GPU temperature. I was right: there was a measurable difference, but you'll see that it wasn't a major one. With just the basic Windows Aero desktop running I recorded 37C in idle mode, with a very minimal idle fan speed of 15%, as dialed up by the internal fan controller. The GPU temperature increased to 66C after 30 minutes of stability testing in full 3D mode, at 1920x1200 resolution, and the maximum MSAA setting of 8X. With the fan set on Automatic, the speed rose to a very meager 22% under full load, which is so low that I would ordinarily complain, but with the temp only getting up to 66C I feel I can't, really. I then did a run with manual fan control and 100% fan speed. I was rewarded by a modest increase in fan noise and a nice reduction in load temperature to 57C.

| Load | Fan Speed | GPU Temperature |
|---|---|---|
| Idle | 15% - AUTO | 37C |
| FurMark | 22% - AUTO | 66C |
| FurMark | 100% - Manual | 57C |

66C is a very good result for temperature stress testing, and it's impressive that PowerColor does it with a fan speed of only 22%. I'm used to seeing video card manufacturers keep the fan speeds low, especially with radial blower wheels that make a racket at higher speeds, but with axial fans there's usually no point to doing that. I was able to knock 9 degrees off the load temps by running the fan at either 80% or 100%, which is what I recommend for sustained gaming. Heat kills electronic components, and there's no joy in assisted suicide for your video card, plus the increase in noise is not too bad at full tilt. I would probably end up making a custom software profile to optimize the fan speeds for this non-reference design, but that's only because I am obsessive about heat. Honestly, the stock settings keep the GPU well within reasonable temperature limits.
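A custom fan profile of the kind mentioned above is essentially a temperature-to-fan-speed curve. The sketch below shows the idea with simple linear interpolation between breakpoints. The breakpoints are hypothetical values chosen for illustration (only the 15% floor echoes the idle reading above). This is not PowerColor's fan table or the format used by any particular tuning utility.

```python
# Hypothetical (temperature in C, fan speed in %) breakpoints, lowest first.
# These values are assumptions for illustration, not measured or vendor data.
FAN_CURVE = [(40, 15), (55, 30), (65, 50), (75, 80), (85, 100)]


def fan_speed(temp_c, curve=FAN_CURVE):
    """Return the fan speed (%) for a GPU temperature, interpolating linearly."""
    # Below the first breakpoint, hold the minimum fan speed.
    if temp_c <= curve[0][0]:
        return float(curve[0][1])
    # Above the last breakpoint, hold the maximum fan speed.
    if temp_c >= curve[-1][0]:
        return float(curve[-1][1])
    # Otherwise interpolate between the two surrounding breakpoints.
    for (t0, f0), (t1, f1) in zip(curve, curve[1:]):
        if t0 <= temp_c <= t1:
            return f0 + (f1 - f0) * (temp_c - t0) / (t1 - t0)


for temp in (37, 60, 66, 90):
    print(f"{temp}C -> {fan_speed(temp):.0f}% fan")
```

A curve like this ramps the fan earlier than the stock automatic setting described above, trading a little noise for lower load temperatures.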

| Load | Fan Speed | GPU Temperature |
|---|---|---|
| Idle | 15% - AUTO | 38C |
| FurMark | 26% - AUTO | 73C |
| FurMark | 100% - Manual | 63C |