Radeon HD5770 CrossFireX Performance

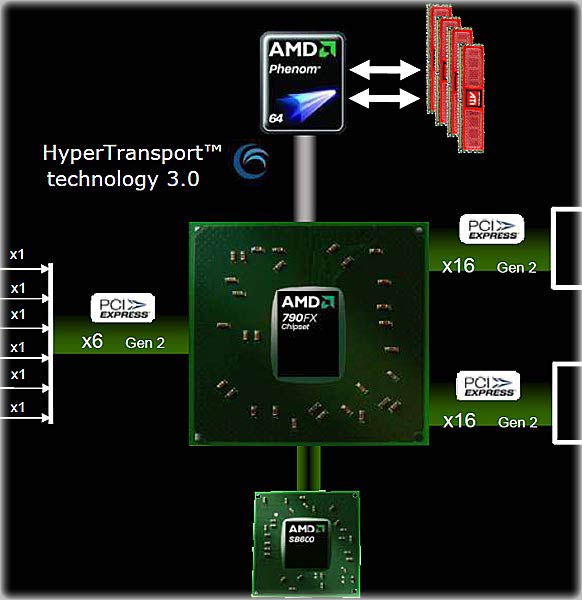

This article is all about answering one question: how well does the Radeon HD 5770 scale in CrossFireX? Benchmark Reviews has already investigated and published reviews of two video cards based on the HD5770 "Juniper" chip: an engineering sample from ATI and a production card from XFX. Both acquitted themselves quite well, and we included some CrossFireX test results in the XFX review, using two cards strapped together on an Intel P45 platform. This time we look at how one, two, and three cards work together on our new Windows 7 test suite, with an AMD 790FX motherboard.

Looking at our earlier reviews of the Radeon HD5770, we already know that two of them in CrossFireX soundly beat a single NVIDIA GTX285. So, this article is not about comparing the 5770 to other cards; we've already done that. What we're interested in is how well CrossFireX works once you get past the initial surge of dual cards. Is adding a third card a waste of time, or is it just as effective as adding the second card?

Stick around, as we test out all three configurations on a series of eight challenging benchmarking tools. I can promise you a few surprises.

About the company: ATI

Over the course of AMD's four decades in business, silicon and software have become the steel and plastic of the worldwide digital economy. Technology companies have become global pacesetters, making technical advances at a prodigious rate - always driving the industry to deliver more and more, faster and faster.

However, "technology for technology's sake" is not the way we do business at AMD. Our history is marked by a commitment to innovation that's truly useful for customers - putting the real needs of people ahead of technical one-upmanship. AMD founder Jerry Sanders has always maintained that "customers should come first, at every stage of a company's activities."

We believe our company history bears that out.

ATI Radeon HD5770 Features

At first I had a hard time thinking of CrossFireX as something that actually had features. I tend to think of it as just the act of hooking two or more cards together with a jumper cable. But the more I thought about it, the clearer it became that there are hardware and software components to it. Someone had to design those things, the design had to fulfill certain requirements, and another word for requirements is "features." Once I had that sorted out in my head, I started to look around for what features might be included in CrossFireX. Here's what ATI has to say:

What Is ATI CrossFireX

ATI CrossFireX™ is the ultimate multi-GPU performance gaming platform. Enabling game-dominating power, ATI CrossFireX technology enables two or more discrete graphics processors to work together to improve system performance. For The Ultimate Visual Experience™, be sure to select ATI CrossFireX ready motherboards for AMD and Intel processors and multiple ATI Radeon™ HD graphics cards.

ATI CrossFireX technology allows you to expand your system's graphics capabilities. It gives you the ability to scale your system's graphics horsepower as you need it, supporting up to four ATI Radeon™ HD graphics cards, making this the most scalable gaming platform ever.

Extreme High-Definition Gaming

Arm yourself with up to four ATI Radeon HD graphics cards to move faster, aim quicker, and see more clearly than all who stand between you and victory. With an ATI CrossFireX gaming rig, you can break through traditional graphics limitations to achieve higher performance and The Ultimate Visual Experience™.

Products featuring ATI CrossFireX technology offer a range of solutions for a whole new gaming experience. Whether you're an up-and-comer or a pro-tournament master, ATI CrossFireX raises the bar for competitive performance and visual thrills.

What Are The Benefits Of ATI CrossFireX?

With an ATI CrossFireX gaming rig, you can break through traditional graphics limitations, achieving higher performance and a better visual experience.

Does ATI CrossFireX Only Benefit The High-End Cards?

No. ATI CrossFireX technology brings a whole new level of gaming experience throughout our product line-up. Whether you have an entry level or top end solution, ATI CrossFireX will raise the bar in bringing the ultimate performance and visual experience.

Just to refresh your memory, here are the basic features of the Radeon HD5770.

ATI Radeon HD 5770 GPU Feature Summary

- 1.04 billion 40nm transistors
- TeraScale 2 Unified Processing Architecture
- GDDR5 memory interface
- PCI Express 2.1 x16 bus interface
- DirectX 11 support
- Shader Model 5.0
- DirectCompute 11
- Programmable hardware tessellation unit
- Accelerated multi-threading
- HDR texture compression
- Order-independent transparency
- OpenGL 3.2 support [1]
- Image quality enhancement technology
- Up to 24x multi-sample and super-sample anti-aliasing modes
- Adaptive anti-aliasing
- 16x angle independent anisotropic texture filtering
- 128-bit floating point HDR rendering
- ATI Eyefinity multi-display technology [2,3]
- Three independent display controllers: drive three displays simultaneously with independent resolutions, refresh rates, color controls, and video overlays
- Display grouping: combine multiple displays to behave like a single large display
- ATI Stream acceleration technology
- OpenCL 1.0 compliant
- DirectCompute 11
- Accelerated video encoding, transcoding, and upscaling [4,5]
- Native support for common video encoding instructions
- ATI CrossFireX™ multi-GPU technology [6]
- ATI Avivo HD Video & Display technology [7]
- UVD 2 dedicated video playback accelerator
- Advanced post-processing and scaling [8]
- Dynamic contrast enhancement and color correction
- Brighter whites processing (blue stretch)
- Independent video gamma control
- Dynamic video range control
- Support for H.264, VC-1, and MPEG-2
- Dual-stream 1080p playback support [9,10]
- DXVA 1.0 & 2.0 support
- Integrated dual-link DVI output with HDCP [11]
- Integrated DisplayPort output
- Integrated HDMI 1.3 output with Deep Color, xvYCC wide gamut support, and high bit-rate audio
- Integrated VGA output
- 3D stereoscopic display/glasses support [13]
- Integrated HD audio controller
- Output protected high bit rate 7.1 channel surround sound over HDMI with no additional cables required
- Supports AC-3, AAC, Dolby TrueHD and DTS Master Audio formats
- ATI PowerPlay™ power management technology [7]
- Certified drivers for Windows 7, Windows Vista, and Windows XP

1. Driver support scheduled for release in 2010
2. Driver version 8.66 (Catalyst 9.10) or above is required to support ATI Eyefinity technology, and to enable a third display you require one panel with a DisplayPort connector
3. ATI Eyefinity technology works with games that support non-standard aspect ratios, which is required for panning across three displays
4. Requires application support for ATI Stream technology
5. Digital rights management restrictions may apply
6. ATI CrossFireX™ technology requires an ATI CrossFireX Ready motherboard, an ATI CrossFireX™ Bridge Interconnect for each additional graphics card, and may require a specialized power supply
7. ATI PowerPlay™, ATI Avivo™ and ATI Stream are technology platforms that include a broad set of capabilities offered by certain ATI Radeon™ HD GPUs. Not all products have all features, and full enablement of some capabilities may require complementary products
8. Upscaling subject to available monitor resolution
9. Blu-ray or HD DVD drive and HD monitor required
10. Requires Blu-ray movie disc supporting dual 1080p streams
11. Playing HDCP content requires additional HDCP ready components, including but not limited to an HDCP ready monitor, Blu-ray or HD DVD disc drive, multimedia application and computer operating system.
12. Some custom resolutions require user configuration
13. Requires 3D Stereo drivers, glasses, and display

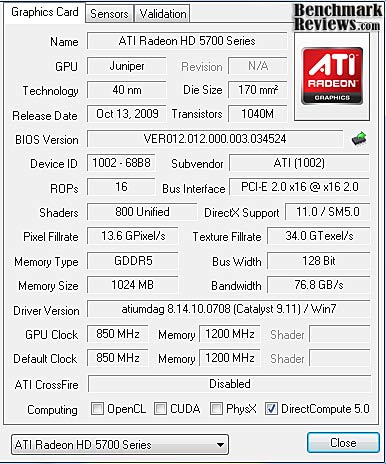

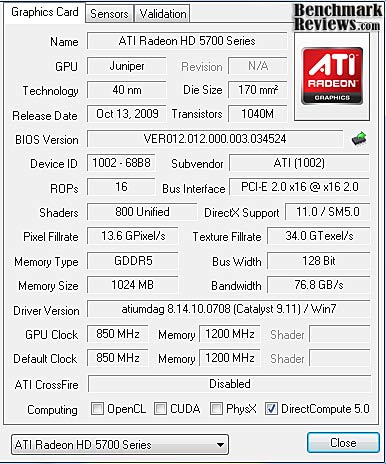

Radeon HD5770 Specifications

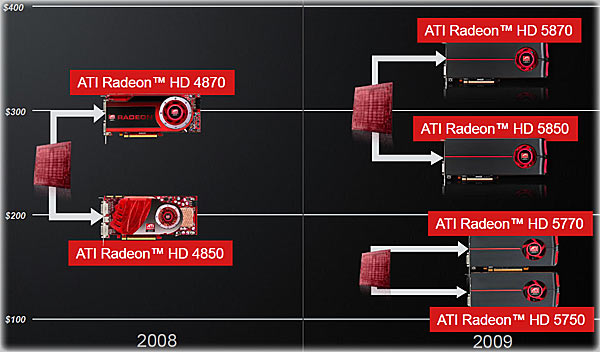

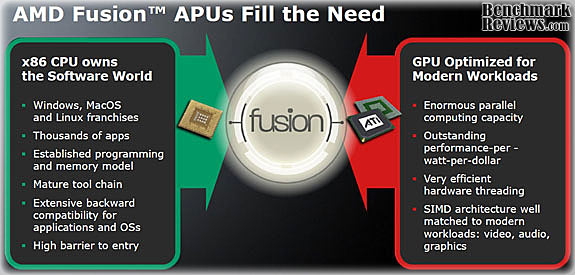

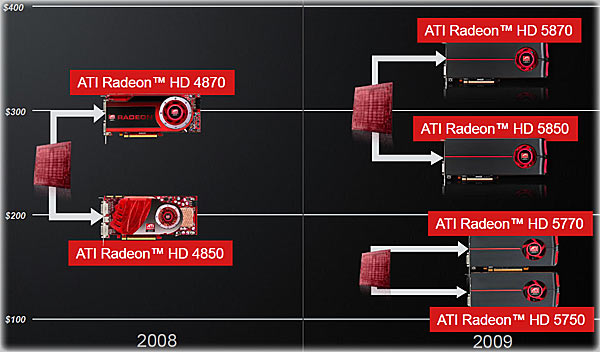

The ATI Radeon HD5770 specifications don't fit neatly between two or more competing or legacy models. It's tempting to compare the card to the HD4870 and HD4890, but the HD5770 has only half the memory bus width of the entire HD48xx series (128-bit versus 256-bit); however, every 5xxx card runs GDDR5 memory at very high clock rates. The memory bandwidth of the HD5770 compares favorably to the HD4850, at 76.8 GB/s versus 63.5 GB/s, but falls well behind the HD4890, which reaches 124.8 GB/s, as well as all the NVIDIA G200-based cards. It's the penalty ATI paid for slicing the "Cypress" directly in half in order to get the "Juniper". You can see the complete specs in detail a little further below on this page. The real story is how ATI has been able to reduce the cost of the HD5700 platform to below the HD4850. Take a look at where the four versions of the HD5xxx series end up relative to their forefathers. And remember, this is all based on launch pricing...
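The bandwidth figures in this paragraph all follow from one formula: bus width times per-pin data rate, divided by eight bits per byte. The sketch below reproduces them. The HD5770 inputs come from the spec list on this page; the HD4850 and HD4890 data rates are assumptions based on those cards' commonly published memory clocks (993 MHz GDDR3 and 975 MHz GDDR5).

```python
# Sketch: peak memory bandwidth = bus width (bits) x per-pin data rate (Gbps) / 8.
def bandwidth_gb_per_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Return peak memory bandwidth in GB/s."""
    return bus_width_bits * data_rate_gbps / 8


# (card, bus width in bits, per-pin data rate in Gbps)
# HD5770 values come from this article's spec list; the HD4850 and HD4890
# data rates are ASSUMPTIONS derived from their published memory clocks.
CARDS = [
    ("HD5770", 128, 4.8),    # 1200 MHz GDDR5, four transfers per clock
    ("HD4850", 256, 1.986),  # 993 MHz GDDR3, two transfers per clock
    ("HD4890", 256, 3.9),    # 975 MHz GDDR5, four transfers per clock
]

for name, bus_bits, rate in CARDS:
    print(f"{name}: {bandwidth_gb_per_s(bus_bits, rate):.2f} GB/s")
```

At two decimal places the HD4850 works out to 63.55 GB/s, which the paragraph above rounds to 63.5. The HD5770 gets within reach of it only because its GDDR5 moves data more than twice as fast per pin over half as many pins.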

Now let's look at the actual HD5770 specs in detail:

Radeon HD5770 Speeds & Feeds

-

Engine clock speed: 850 MHz

-

Processing power (single precision): 1.36 TeraFLOPS

-

Polygon throughput: 850M polygons/sec

-

Data fetch rate (32-bit): 136 billion fetches/sec

-

Texel fill rate (bilinear filtered): 34 Gigatexels/sec

-

Pixel fill rate: 13.6 Gigapixels/sec

-

Anti-aliased pixel fill rate: 54.4 Gigasamples/sec

-

Memory clock speed: 1.2 GHz

-

Memory data rate: 4.8 Gbps

-

Memory bandwidth: 76.8 GB/sec

-

Maximum board power: 108 Watts

-

Idle board power: 18 Watts
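Most of the throughput figures in this list are simply a per-clock unit count multiplied by the 850 MHz engine clock. The unit counts in the sketch below (800 stream processors, 40 texture units with four 32-bit fetches each, 16 ROPs handling four anti-aliased samples per clock, one polygon per clock) are not stated in this article. They are the commonly published Juniper figures and should be read as assumptions.

```python
# Sketch: derive the HD5770 throughput figures from per-clock unit counts.
CLOCK_HZ = 850e6  # engine clock from the spec list above

# ASSUMPTION: these unit counts are not stated in this article; they are the
# commonly published Juniper (HD5770) figures.
STREAM_PROCESSORS = 800
FLOPS_PER_SP_PER_CLOCK = 2     # one multiply-add counts as two FLOPs
TEXTURE_UNITS = 40
FETCHES_PER_TEXTURE_UNIT = 4   # 32-bit fetches per texture unit per clock
ROPS = 16
AA_SAMPLES_PER_ROP = 4         # anti-aliased samples per ROP per clock
POLYGONS_PER_CLOCK = 1

derived = {
    "TeraFLOPS": STREAM_PROCESSORS * FLOPS_PER_SP_PER_CLOCK * CLOCK_HZ / 1e12,
    "Mpolygons/sec": POLYGONS_PER_CLOCK * CLOCK_HZ / 1e6,
    "Gfetches/sec": TEXTURE_UNITS * FETCHES_PER_TEXTURE_UNIT * CLOCK_HZ / 1e9,
    "Gtexels/sec": TEXTURE_UNITS * CLOCK_HZ / 1e9,
    "Gpixels/sec": ROPS * CLOCK_HZ / 1e9,
    "Gsamples/sec": ROPS * AA_SAMPLES_PER_ROP * CLOCK_HZ / 1e9,
}

for label, value in derived.items():
    print(f"{label}: {value:.4g}")
```

Each result lines up with the corresponding spec line, which is a useful sanity check: a factory-overclocked card should scale all of these figures by the same ratio as its engine clock.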

As impressive as these specs are, the answer to today's question, "How well do these cards work in CrossFireX?", isn't contained here. Sure, there are some clues, like memory becoming a possible limit when all 2400 cores (800 per card) are pumping out pixels. It wasn't a problem with one card on its own, but CrossFireX doesn't pool memory: each GPU mirrors the same data in its own 1GB, so the effective frame buffer is still that of a single card. Power consumption is important as well. The extremely low power requirements of these cards are a real boon when running a multi-GPU arrangement. I was able to run the test setup comfortably with a 750 watt PSU; the maximum power draw (measured at the wall socket) was 507 watts. Add in the fact that each card requires only one 6-pin PCI-E power connection, and you have a lot more freedom in your power supply selection.
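A rough power budget for the three-card rig can be worked out from the numbers in this paragraph and the spec list. The PSU efficiency figure is an assumption, because the review measured wall draw only. The 75 W slot plus 75 W 6-pin allowance is the PCI Express limit for a card with a single 6-pin connector.

```python
# Sketch: rough power budget for the three-card test rig.
WALL_PEAK_W = 507   # measured at the wall socket (from this review)
PSU_RATED_W = 750   # Corsair 750 W unit used for testing
CARD_MAX_W = 108    # maximum board power per HD5770 (spec list)
NUM_CARDS = 3

# ASSUMPTION: ~85% PSU efficiency at this load; not measured in this review.
ASSUMED_EFFICIENCY = 0.85

dc_load_w = WALL_PEAK_W * ASSUMED_EFFICIENCY   # estimated power delivered to components
psu_utilisation = dc_load_w / PSU_RATED_W
gpu_worst_case_w = NUM_CARDS * CARD_MAX_W      # all three cards at rated maximum

# PCI Express power limits: 75 W from the x16 slot plus 75 W per 6-pin connector.
per_card_budget_w = 75 + 75
per_card_headroom_w = per_card_budget_w - CARD_MAX_W

print(f"Estimated DC load: {dc_load_w:.0f} W ({psu_utilisation:.0%} of the PSU rating)")
print(f"Three cards at maximum board power: {gpu_worst_case_w} W")
print(f"Per-card headroom under the slot + 6-pin limit: {per_card_headroom_w} W")
```

Under that efficiency assumption, the supply is running at a little under 60% of its rating, which is consistent with the "comfortably" in the text.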

Now that we're through with all the numbers, let's take a closer look at the card voted most likely to be CrossFired in 2010.

Closer Look: Radeon HD5770

The HD5770 follows the general design of the HD5850, only on a slightly smaller scale. The card is only 220 mm (8.66") long, which means it will fit into almost any case without an issue. The signature red blower wheel, sourced from Delta, is there, pushing air through a finned heatsink block that sits on top of the GPU and out the back of the card through the small set of vents on the I/O plate. The reference design cooler also provides a large expanse of real estate for ATI's retail partners to display their branding.

The collection of I/O ports on the dual-width rear panel of the card is consistent across the entire HD5xxx family at this point: two DVI, one HDMI and one DisplayPort connector. This doesn't leave much room for the exhaust vents, but if ATI can keep the HD5800 series cool with the same design, the half-size HD5700 series GPU should be fine. The housing is a one-piece plastic affair and removes easily. The external appearance hints at a simple design; it looks like a cover and nothing more. Once we look inside, that impression will be laid to rest.

The far end of the card showcases the new "hood scoop" design carried over from the high-end ATI cards. The scoops don't feed a lot of air into the blower, but if you look closely at the back side of the fan housing in the image after next, you should see some vents that do feed air into the center of the squirrel cage blower wheel. So the HD5770 has an extra trick up its sleeve compared to the HD58xx series, which uses a different blower housing. This provides some ventilation for most of the power supply components located at the far end of the card, and power components plus ventilation is always a good combination. The red racing graphics on the top edge are both decorative and functional, as there are some additional vents molded in there.

Popping off the cover reveals a deceptively simple, ducted heat sink with a copper base and tightly spaced aluminum fins. The blower is thinner than the units in the HD5800 series, but follows the same format. A portion of the duct opens up to the case by way of some vents in the top rail, molded here in red. Clearly, the majority of the air is meant to exhaust through the I/O plate, but it never hurts to have a backup plan.

For most high-end video cards, the cooling system is an integral part of the performance envelope. "Make it run cooler, and you can make it run faster" is the byword for achieving gaming-class performance from the latest and greatest GPUs. Even though the HD5770 is a mid-range card with a small GPU die size, it's still a gaming product and will be pushed to maximum performance levels by most potential customers. We'll be looking at cooling performance later on, to see how well the cooler design holds up under the strain of GPU overclocks.

The back of the Radeon HD5770 is bare, which is normal for a card in this market segment. The main features to be seen here are the metal cross-brace for the GPU heatsink screws, which are spring loaded, and the four Hynix GDDR5 memory chips on the back side. They are mounted back-to-back with four companion chips on the top side of the board. Together, they make up the full 1GB of memory contained on this card.

Now, let's peek under the covers and have a good look at what's inside the Radeon HD5770.

Radeon HD5770 Detailed Features

The main attraction of ATI's new line of video cards is the brand new GPU with its 40nm transistors and an improved architecture. The chip in the 5700 series is called "Juniper" and is essentially half of the "Cypress", the high-end chip in the HD5800 series that was introduced in September, 2009.

The Juniper die is very small, as can be seen with this comparison to a well known dimensional standard. ATI still managed to cram over a billion transistors on there, and the small size is critical to the pricing strategy that ATI is pursuing with these new releases.

The card carries 1 GB of GDDR5 memory on a 128-bit bus; with a 4.8 Gbps memory interface, that offers a maximum memory bandwidth of 76.8 GB/s. Cutting the Cypress GPU in half limited the bus to 128 bits, but ATI has bumped up the memory clock rate on all their new boards. With GDDR5 running at 1200 MHz, the memory chips themselves won't be a bottleneck on this card, but the narrower bus width does have a major performance impact. There is some room for memory overclocking via the Overdrive tool distributed by AMD.

The H5GQ1H24AFR-T2C chip from Hynix is rated for 5.0 Gbps, and is one of the higher rated chips in the series, as you can see in the table below. An overclock to the 1250-1300 MHz range is not unthinkable, especially if utilities become available to modify memory voltage.
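Converting memory clock to bandwidth shows what the suggested 1250-1300 MHz overclock would be worth. This sketch assumes GDDR5's four data transfers per pin per memory clock (which is how 1200 MHz becomes 4.8 Gbps), and it reuses the 128-bit bus and the 5.0 Gbps chip rating quoted above. It says nothing about whether a given card will actually reach those clocks.

```python
# Sketch: GDDR5 memory clock -> per-pin data rate -> bandwidth on a 128-bit bus.
BUS_WIDTH_BITS = 128
RATED_GBPS = 5.0  # Hynix H5GQ1H24AFR-T2C rating quoted in the text


def gddr5_bandwidth(memory_clock_mhz: float) -> tuple[float, float]:
    """Return (per-pin data rate in Gbps, peak bandwidth in GB/s).

    ASSUMPTION: GDDR5 moves 4 bits per pin per memory clock, so 1200 MHz -> 4.8 Gbps.
    """
    data_rate_gbps = memory_clock_mhz * 4 / 1000
    return data_rate_gbps, BUS_WIDTH_BITS * data_rate_gbps / 8


for clock_mhz in (1200, 1250, 1300):
    rate, bw = gddr5_bandwidth(clock_mhz)
    status = "within" if rate <= RATED_GBPS else "above"
    print(f"{clock_mhz} MHz: {rate:.1f} Gbps, {bw:.1f} GB/s ({status} the {RATED_GBPS} Gbps rating)")
```

The 1250 MHz point is exactly the chip's rated 5.0 Gbps; 1300 MHz pushes past the rating, which is where extra memory voltage would likely come into play.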

The power section provides 3-phase power to the GPU, which is about average for a mid-range graphics card. Increasing the number of power phases achieves better voltage regulation, improves efficiency, and reduces heat, but ATI has instead relied on the inherently lower power requirements of the Juniper GPU and some fancy footwork in the power supply control chip to reduce power draw to very low levels.

Where the HD5800 series used a number of Volterra regulators and controllers, the HD5770 makes do with one L6788A controller chip from ST. It's still a relatively sophisticated controller, and the combination of a lower power GPU, low power GDDR5 memory, and smart power supply design yields an incredibly low power consumption of 18W at idle and 108W under duress. These numbers are derived from testing with 3DMark03, which ATI says pulls higher current than more recent versions of the synthetic benchmark. Another cost-cutting measure can be seen here: the use of standard electrolytic capacitors in a few locations.

Before we dive into the testing portion of the review, let's look at one of the most exciting new features available on every Radeon HD5xxx series product, Eyefinity.

ATI Eyefinity Multi-Monitors

ATI Eyefinity advanced multiple-display technology launches a new era of panoramic computing, helping to boost productivity and multitasking with innovative graphics display capabilities supporting massive desktop workspaces, creating ultra-immersive computing environments with superhigh resolution gaming and entertainment, and enabling easy configuration. High end editions will support up to six independent display outputs simultaneously.

In the past, multi-display systems catered to professionals in specific industries. Financial, energy, and medical are just some industries where multi-display systems are a necessity. Today, more and more graphic designers, CAD engineers and programmers are attaching more than one display to their workstation. A major benefit of a multi-display system is simple and universal: it enables increased productivity. This has been confirmed in industry studies which show that attaching more than one display device to a PC can significantly increase user productivity.

Early multi-display solutions were non-ideal. Bulky CRT monitors claimed too much desk space, thinner LCD monitors were very expensive, and external multi-display hardware was inconvenient and also very expensive. These issues are much less of a concern today. LCD monitors are very affordable, and current generation GPUs can drive multiple display devices independently and simultaneously, without the need for external hardware. Despite the advancements in multi-display technology, AMD engineers still felt there was room for improvement, especially regarding the display interfaces. VGA carries analog signals and needs a dedicated DAC per display output, which consumes power and ASIC space. Dual-Link DVI is digital, but requires a dedicated clock source per display output and uses too many I/O pins from the GPU. It was clear that a superior display interface was needed.

In 2004, a group of PC companies collaborated to define and develop DisplayPort, a powerful and robust digital display interface. At that time, engineers working for the former ATI Technologies Inc. were already thinking about a more elegant solution to drive more than two display devices per GPU, and it was clear that DisplayPort was the interface of choice for this task. In contrast to other digital display interfaces, DisplayPort does not require a dedicated clock signal for each display output. In fact, the data link is fixed at 1.62Gbps or 2.7Gbps per lane, irrespective of the timing of the attached display device. The benefit of this design is that one reference clock source provides the clock signal needed to drive as many DisplayPort display devices as there are display pipelines in the GPU. In addition, with the same number of I/O pins used for Single-Link DVI, a full speed DisplayPort link can be driven which provides more bandwidth and translates to higher resolutions, refresh rates and color depths. All these benefits perfectly complement ATI Eyefinity Multi-Display Technology.
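The point about DisplayPort's fixed link rate can be made concrete by comparing the link's payload to what a display needs. This sketch assumes a four-lane link and DisplayPort 1.1's 8b/10b line coding. It picks 2560x1600 at 60 Hz with 24-bit color as an illustrative monitor, and it counts only active pixels and ignores blanking, so real requirements are somewhat higher.

```python
# Sketch: DisplayPort link payload vs. the bandwidth a display actually needs.
LANES = 4                  # ASSUMPTION: full four-lane main link
ENCODING_EFFICIENCY = 0.8  # 8b/10b line coding: 8 payload bits per 10 bits sent


def dp_payload_gbps(lane_rate_gbps: float, lanes: int = LANES) -> float:
    """Usable payload of a DisplayPort main link at a fixed per-lane rate."""
    return lane_rate_gbps * lanes * ENCODING_EFFICIENCY


def active_video_gbps(width: int, height: int, refresh_hz: int, bits_per_pixel: int = 24) -> float:
    """Raw active-pixel bandwidth; ignores blanking, so real needs are a bit higher."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


# ASSUMPTION: 2560x1600 at 60 Hz is an illustrative monitor, not one from this review.
need = active_video_gbps(2560, 1600, 60)
for lane_rate in (1.62, 2.7):  # the two fixed DisplayPort link rates named in the text
    have = dp_payload_gbps(lane_rate)
    verdict = "fits" if have >= need else "does not fit"
    print(f"{lane_rate} Gbps/lane: {have:.2f} Gbps payload vs {need:.2f} Gbps needed -> {verdict}")
```

Because the link rate is one of two fixed values rather than being derived from each monitor's pixel clock, a single reference clock can serve every DisplayPort output, which is the property the paragraph credits for making Eyefinity practical.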

ATI Eyefinity Technology from AMD provides advanced multiple monitor technology delivering an immersive graphics and computing experience, supporting massive virtual workspaces and super-high resolution gaming environments. Legacy GPUs have supported up to two display outputs simultaneously and independently for more than a decade. Until now, graphics solutions have supported more than two monitors by combining multiple GPUs on a single graphics card. With the introduction of AMD's next-generation graphics product series supporting DirectX 11, a single GPU now has the advanced capability of simultaneously supporting up to six independent display outputs.

ATI Eyefinity Technology is closely aligned with AMD's DisplayPort implementation, providing the flexibility and upgradability modern users demand. Up to two DVI, HDMI, or VGA display outputs can be combined with DisplayPort outputs for a total of up to six monitors, depending on the graphics card configuration. The initial AMD graphics products with ATI Eyefinity technology will support a maximum of three independent display outputs via a combination of two DVI, HDMI or VGA with one DisplayPort monitor. AMD has a future product planned to support up to six DisplayPort outputs. Wider display connectivity is possible by using display output adapters that support active translation from DisplayPort to DVI or VGA.

The DisplayPort 1.2 specification is currently being developed by the same group of companies who designed the original DisplayPort specification. Its feature set includes higher bandwidth, enhanced audio and multi-stream support. Multi-stream, commonly referred to as daisy-chaining, is the ability to address and drive multiple display devices through one connector. This technology, coupled with ATI Eyefinity Technology, will be a key enabler for multi-display technology, and AMD will be at the forefront of this transition.

Video Card Testing Methodology

This is the beginning of a new era for testing at Benchmark Reviews. With the imminent release of Windows 7 to the marketplace, and given the prolonged and extensive pre-release testing that occurred on a global scale, there are compelling reasons to switch all testing to this new, and highly anticipated, operating system. Overall performance levels of Windows 7 have been favorably compared to Windows XP, and there is solid support for the 64-bit version, something enthusiasts have been anxiously awaiting for several years. Windows 7 also brings a new version of DirectX, DX11. The combination of the two provides a solid leap forward in graphics processing.

Our site polls and statistics indicate that over 90% of our visitors use their PC for playing video games, and practically all of you are using one of the screen resolutions discussed here. Since all of the benchmarks we use for testing represent different game engine technology and graphic rendering processes, this battery of tests will provide a diverse range of results for you to gauge performance on your own computer system. All of the benchmark applications are capable of utilizing DirectX 10, and that is how they were tested. Some of these benchmarks have been used widely for DirectX 9 testing in the XP environment, and it is critically important to differentiate between results obtained with different versions. Each game behaves differently in DX9 and DX10 formats. Crysis is an extreme example, with frame rates in DirectX 10 only about half what was available in DirectX 9.

At the start of all tests, the previous display adapter driver is uninstalled and trace components are removed using Driver Cleaner Pro. We then restart the computer system to establish our display settings and define the monitor. Once the hardware is prepared, we begin our testing. According to the Steam Hardware Survey published at the time of Windows 7 launch, the most popular gaming resolution is 1280x1024 (17-19" standard LCD monitors), closely followed by 1024x768 (15-17" standard LCD). However, because these resolutions are considered 'low' by most standards, our benchmark performance tests concentrate on the up-and-coming higher-demand resolutions: 1680x1050 (22-24" widescreen LCD) and 1920x1200 (24-28" widescreen LCD monitors).

Each benchmark test program begins after a system restart, and the very first result for every test is ignored, since that run often serves mainly to cache data. This process proved extremely important in the World in Conflict benchmarks, as the first run served to cache maps, allowing subsequent tests to perform much better than the first. Each test is then completed five times, the high and low results are discarded, and the average of the three remaining results is displayed in our article.
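The scoring procedure in this paragraph (one discarded warm-up run, five timed runs, drop the high and low, average the middle three) is a trimmed mean. A minimal sketch, assuming results are frame rates collected in run order; the function name and input format are illustrative and not part of any tool used in this review.

```python
from statistics import mean


def benchmark_score(runs: list[float]) -> float:
    """Score one benchmark using the warm-up plus trimmed-mean procedure.

    runs[0] is the warm-up run, discarded because it mostly caches data.
    runs[1:] must hold exactly five timed results; the highest and lowest
    of those are dropped and the remaining three are averaged.
    """
    if len(runs) != 6:
        raise ValueError("expected one warm-up run followed by five timed runs")
    timed = sorted(runs[1:])
    return mean(timed[1:-1])  # drop the lowest and highest timed results


# HYPOTHETICAL frame rates: a slow warm-up run, then five timed runs.
print(benchmark_score([40.0, 50.0, 53.0, 51.0, 52.0, 60.0]))  # prints 52.0
```

Dropping the extremes keeps a single hiccup (or a single unusually lucky run) from moving the reported number, at the cost of needing a few extra passes per setting.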

Test System

- System Memory: 2X 2GB OCZ Reaper HPC DDR3 1600MHz (7-7-7-24)
- Optical Drive: Sony NEC Optiarc AD-7190A-OB 20X IDE DVD Burner
- PSU: Corsair CMPSU-750TX ATX12V V2.2 750Watt
- Operating System: Windows 7 Ultimate Version 6.1 (Build 7600)

Benchmark Applications

- 3DMark Vantage v1.0.1 (8x Anti Aliasing & 16x Anisotropic Filtering)
- Crysis v1.21 Benchmark (High Settings, 0x and 4x Anti-Aliasing)
- Devil May Cry 4 Benchmark Demo (Ultra Quality, 8x-MSAA)
- Far Cry 2 v1.02 (Very High Performance, Ultra-High Quality, 8x-AA)
- World in Conflict v1.0.0.9 Performance Test (Very High Setting: 4x AA/4x-AF)
- Battleforge Renegade v1.1 (Max Quality, 8x Anti-Aliasing, MT Rendering)
- Resident Evil 5 (8x Anti-Aliasing, Motion Blur ON, Quality Levels-High)
- Unigine Heaven v1.0 (DX11, Shaders-High, Tessellation-On, 16x-AF, 8x-AA)

CrossFireX and the Radeon HD5770

Leaving well enough alone is NOT the way most gamers and computer enthusiasts think. A few hours after every enthusiast installs their new graphics card, the itch for even more performance starts to take hold. It's like eating carbs; the more you have, the more you want. Of course, no one wants to return their new pride and joy; that would be too much like admitting to some sort of error in strategy. So, what's any self-respecting gamer to do? Well, buy another one, that's what! But, you want to know that the second (or third, if you have it bad...) card is going to make a substantial difference. That's what we're here to find out.

CrossFireX and three Radeon HD5770 cards just happened to be in the same house last week, and the installation and setup could not have been any easier. Once the system was running with one HD5770, I shut it down, plugged the second card in, attached the flexi-bridge, and restarted. Once Windows 7 started up, Catalyst Control Center popped open, informed me that I had two GPUs running in CrossFireX, and asked if that was alright. I said yes, and from that point on, it was a seamless transition from one GPU to two. The additional performance was substantial, even with stock, reference design clocks.

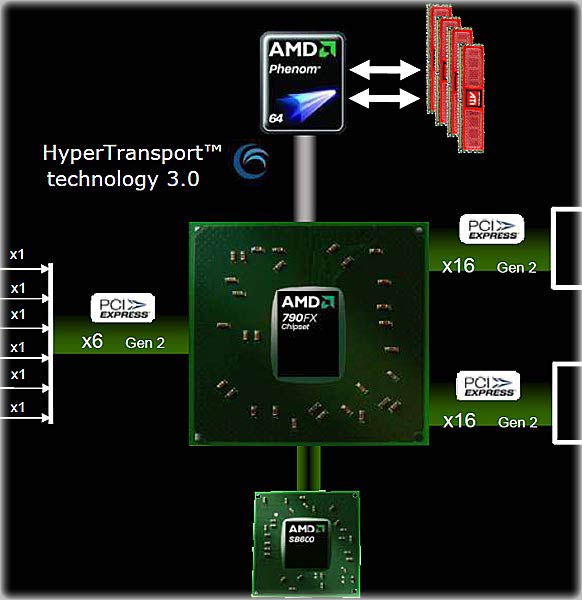

Adding a third card was almost as easy. Physically, there was no problem, as the AMD 790FX northbridge offers 42 PCI-e lanes. If you use more than two graphics cards, two of them have to throttle back to 8X bandwidth, but the 57xx series shouldn't be held back by 8X bandwidth. Of the 42 lanes, 32 are allocated to the graphics slots and four are consumed by the NB-SB interconnect, leaving 6 lanes available for onboard peripherals.
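The lane arithmetic for the 790FX can be checked in a few lines. This is a sketch under stated assumptions: the x16/x8/x8 layout for three cards and the PCI Express 2.0 figure of roughly 500 MB/s per lane per direction are general values, not measurements from this test system, and actual slot wiring varies by motherboard.

```python
# Sketch: AMD 790FX PCI Express lane budget with three graphics cards.
TOTAL_LANES = 42
NB_SB_LINK = 4        # lanes consumed by the northbridge-southbridge interconnect
GENERAL_PURPOSE = 6   # lanes left for onboard peripherals
GRAPHICS = TOTAL_LANES - NB_SB_LINK - GENERAL_PURPOSE  # lanes left for graphics slots

# ASSUMPTION: three cards run x16/x8/x8, matching "two of them have to
# throttle back to 8X"; real layouts depend on the motherboard.
three_card_layout = [16, 8, 8]
assert sum(three_card_layout) <= GRAPHICS, "layout exceeds the graphics lane budget"

# ASSUMPTION: PCI Express 2.0 carries roughly 500 MB/s per lane in each direction.
MB_PER_LANE = 500
for width in three_card_layout:
    print(f"x{width}: about {width * MB_PER_LANE / 1000:.0f} GB/s per direction")
```

An x8 PCI Express 2.0 slot still offers about as much bandwidth as a full x16 slot of the previous generation, which is why a mid-range card like the HD5770 is unlikely to be held back by it.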

Once all three cards were installed, I had a little trouble upon restart. The ATI Catalyst Control Center gave me an error message saying the driver did not support the current CrossFireX configuration. Windows told me my new hardware was installed and ready for use. So I said OK. OK. (How come these dialogue boxes never have the option of "No, you're wrong"...?) Then I did what any self-respecting early adopter does: I rebooted. No messages on startup, everything looked good in Device Manager, CCC still had Enable CrossFireX checked, and ATI Overdrive was able to address all three cards. See, I knew that message box was wrong.

Now we're ready to begin testing video game performance on these video cards. In all of the testing, I'm going to use the single card numbers as the reference for the two and three card results. That takes one more variable out of the equation, compared to the case where 3x results are compared to 2x results. So, for example, the theoretical maximum performance gain from adding a second card is 100% (twice the single-card performance). Similarly, the maximum gain from adding two cards is 200%. Due to hardware and software limitations, you're never going to see a 100% increase; in fact, the practical maximum gain is about 85%. Many CrossFire and SLI implementations in the past performed well below this level, providing only somewhere between 30% and 60% performance improvement.
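Because every multi-card result in this review is measured against the single-card number, scaling can be expressed both as a percentage gain and as a fraction of the ideal gain for that card count. The helper below is a hypothetical illustration of that arithmetic, and the frame rates in the usage lines are made-up placeholders, not results from these tests.

```python
def scaling(single_fps: float, multi_fps: float, cards: int) -> tuple[float, float]:
    """Return (percent gain over one card, fraction of the ideal gain).

    The ideal gain is 100% per added card: 100% for two cards, 200% for three.
    """
    if cards < 2:
        raise ValueError("scaling needs at least two cards")
    gain_pct = (multi_fps / single_fps - 1) * 100
    ideal_pct = (cards - 1) * 100
    return gain_pct, gain_pct / ideal_pct


# HYPOTHETICAL frame rates for illustration only; not results from this review.
SINGLE_CARD_FPS = 20.0
for cards, fps in [(2, 37.0), (3, 50.0)]:
    gain, efficiency = scaling(SINGLE_CARD_FPS, fps, cards)
    print(f"{cards} cards: +{gain:.0f}% over one card, {efficiency:.0%} of ideal")
```

Expressing the result as a fraction of the ideal makes two-card and three-card numbers directly comparable: a +150% three-card result sounds bigger than a +85% two-card result, yet it represents less efficient use of each added card.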

So, we have our work cut out for us, to see how well this latest generation of ATI Radeon chips perform when yoked together. Please continue to the next page as we start off with our 3DMark Vantage test results.

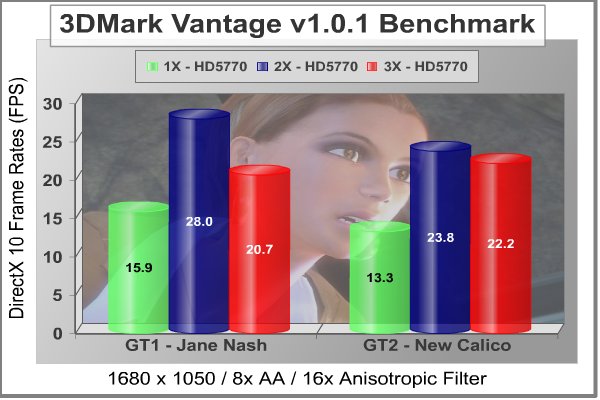

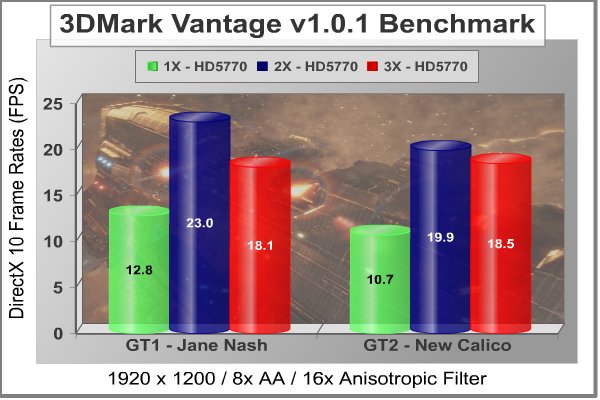

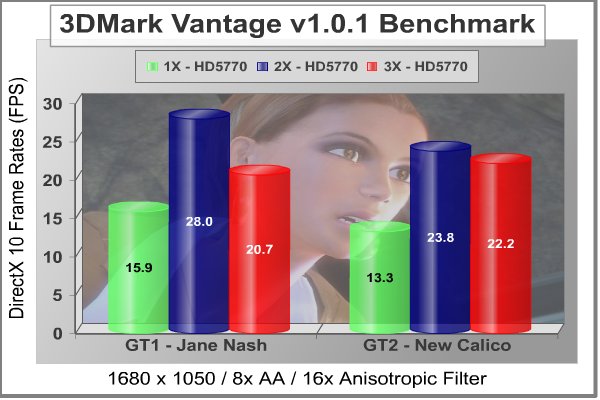

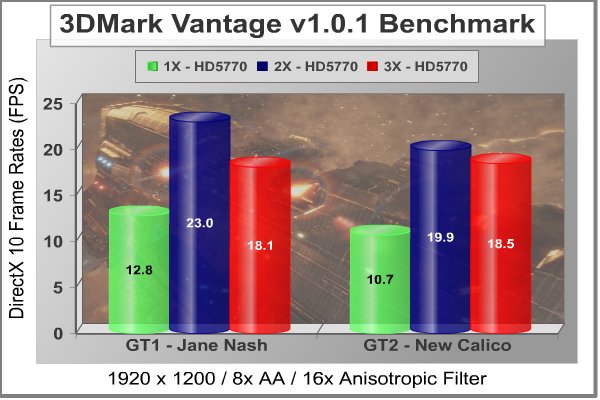

3DMark Vantage Benchmark Results

3DMark Vantage is a computer benchmark by Futuremark (formerly named Mad Onion) that measures the DirectX 10 3D gaming performance of graphics cards. A 3DMark score is an overall measure of your system's 3D gaming capabilities, based on comprehensive real-time 3D graphics and processor tests. By comparing your score with those submitted by millions of other gamers, you can see how your gaming rig performs, making it easier to choose the most effective upgrades or find other ways to optimize your system.

There are two graphics tests in 3DMark Vantage: Jane Nash (Graphics Test 1) and New Calico (Graphics Test 2). The Jane Nash test scene represents a large indoor game scene with complex character rigs, physical GPU simulations, multiple dynamic lights, and complex surface lighting models. It uses several hierarchical rendering steps, including for water reflection and refraction, and physics simulation collision map rendering. The New Calico test scene represents a vast space scene with lots of moving but rigid objects and special content like a huge planet and a dense asteroid belt.

At Benchmark Reviews, we believe that synthetic benchmark tools are just as valuable as video games, but only so long as you're comparing apples to apples. Since the same test is applied in the same controlled method with each test run, 3DMark is a reliable tool for comparing graphics cards against one another.

1680x1050 is rapidly becoming the new 1280x1024. More and more widescreen monitors are being sold with new systems or as upgrades to existing ones. Even in tough economic times, the tide cannot be turned back; screen resolution and size will continue to creep up. Using this resolution as a starting point, the maximum settings applied to 3DMark Vantage include 8x Anti-Aliasing, 16x Anisotropic Filtering, all quality levels at Extreme, and Post Processing Scale at 1:2.

I'm tempted to call the 3DMark Vantage results a disaster, but the truth is the only disaster in testing is when you don't learn anything. In this case, we learn that there is something interfering with the proper operation of three video cards, hooked together in CrossFireX. Now, it happens that in addition to being the first benchmark that appears in most of our reviews, it's also the first benchmark I usually run. You can imagine my trepidation after seeing these results. Luckily, this is not representative of what we can expect from the rest of our tests.

The two test scenes in 3DMark Vantage provide a varied and modern set of challenges for the video cards and their subsystems, as described above. The results always produced higher frame rates for GT1, and so far I haven't seen any curveball results like I used to see with 3DMark06. The twin Radeon HD5770 cards pull impressive numbers, as we saw in our earlier comparison. To be blunt, they smoke the GTX285. Performance scaling is quite good, with a 76% increase for Jane Nash and 79% for New Calico. There were no issues at all with twin cards while running this benchmark. Unfortunately, the combination of three cards gave us terrible results. It's not even worth calculating the gains, as something is so obviously wrong with this combination of hardware and software.

At a higher screen resolution of 1920x1200, the story remains essentially the same. Jane gets an 80% increase for twin cards and New Calico gets an even smarter 86%. Truly, there is very little holding CrossFireX™ back in this synthetic benchmark, at least with two cards. We'll have to wait and see what is wrong with either the drivers or the benchmark when three cards are put into play. Catalyst 9.11 drivers are due to be released soon, so that might fix it.

We need to look at actual gaming performance to verify these results, especially in light of the troubles with TriFire, so let's take a look in the next section at how these cards stack up in the standard bearer for gaming benchmarks, Crysis.

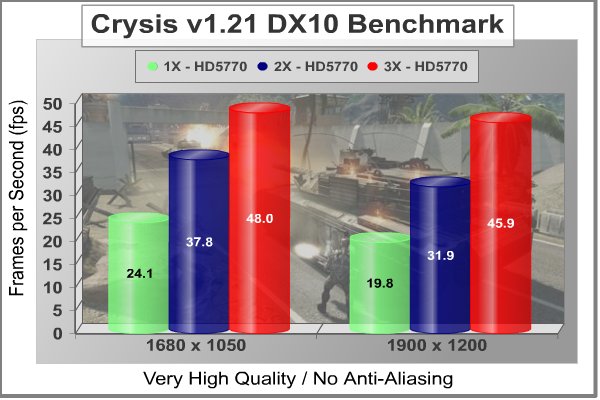

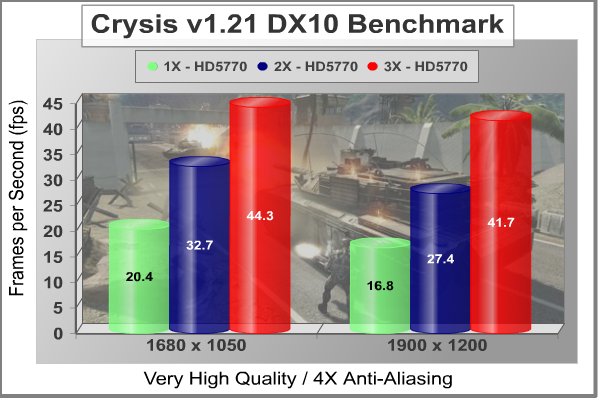

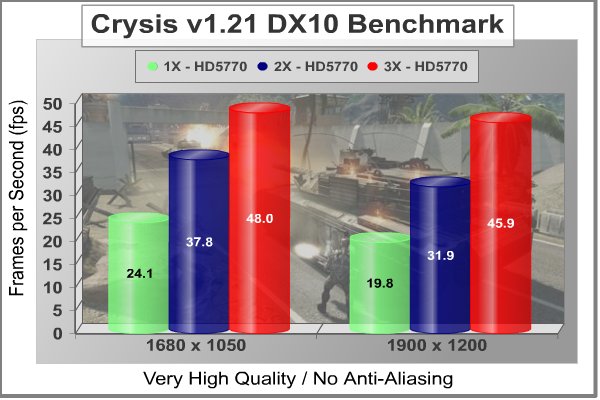

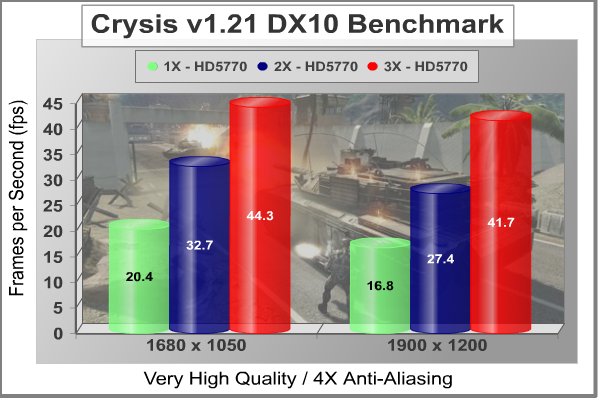

Crysis Benchmark Results

Crysis uses a new graphics engine: the CryENGINE2, which is the successor to Far Cry's CryENGINE. CryENGINE2 is among the first engines to use the Direct3D 10 (DirectX 10) framework, but it can also run using DirectX 9 on Windows XP, Vista, and the new Windows 7. As we'll see, there are significant frame rate reductions when running Crysis in DX10. It's not an operating system issue: DX9 works fine in Windows 7, but DX10 cuts the frame rates in half.

Roy Taylor, Vice President of Content Relations at NVIDIA, has spoken on the subject of the engine's complexity, stating that Crysis has over a million lines of code, 1GB of texture data, and 85,000 shaders. To get the most out of modern multicore processor architectures, CPU-intensive subsystems of CryENGINE 2 such as physics, networking and sound have been re-written to support multi-threading.

Crysis offers an in-game benchmark tool, which is similar to World in Conflict. This short test places a high amount of stress on a graphics card, since so many landscape features are rendered. For benchmarking purposes, Crysis can mean trouble, as it places a high demand on both GPU and CPU resources. Benchmark Reviews uses the Crysis Benchmark Tool by Mad Boris to test frame rates in batches, which allows the results of many tests to be averaged.
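The batch approach above is described only as averaging many runs. Here is a minimal sketch of that kind of summary, assuming a plain arithmetic mean plus the sample standard deviation as a measure of run-to-run spread. This is not the Mad Boris tool's actual code, and `summarize_runs` is a hypothetical name.

```python
from statistics import mean, stdev


def summarize_runs(fps_runs: list[float]) -> tuple[float, float]:
    """Mean FPS and sample standard deviation across repeated benchmark runs."""
    if len(fps_runs) < 2:
        raise ValueError("need at least two runs to estimate run-to-run spread")
    return mean(fps_runs), stdev(fps_runs)


# Hypothetical run results for one resolution/setting, for illustration only.
runs = [31.2, 30.8, 31.5, 30.9]
avg, spread = summarize_runs(runs)
print(f"average {avg:.1f} FPS, +/- {spread:.1f} FPS between runs")
```

If the spread is large compared with the gap between two configurations, that suggests more runs are needed before calling a winner.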

Low-resolution testing allows the graphics processor to plateau at its maximum output performance, and shifts demand onto the other system components. At the lower resolutions, Crysis will reflect the GPU's top-end speed in the composite score, indicating full-throttle performance with little load. This makes for a less GPU-dependent test environment, but it is sometimes helpful in creating a baseline for measuring maximum output performance. At the 1280x1024 resolution used by 17" and 19" monitors, the CPU and memory have too much influence on the results to be used in a video card test. At the widescreen resolutions of 1680x1050 and 1920x1200, the performance differences between the video cards under test are mostly down to the cards themselves.
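The resolution argument above largely comes down to pixel count. Here is a minimal sketch that compares the three resolutions mentioned, assuming per-frame GPU work scales roughly with the number of pixels shaded. That is a simplification that ignores geometry, CPU-side work, and anti-aliasing, and the helper names are hypothetical.

```python
RESOLUTIONS = {
    "1280x1024": (1280, 1024),
    "1680x1050": (1680, 1050),
    "1920x1200": (1920, 1200),
}


def pixel_count(width: int, height: int) -> int:
    """Pixels shaded per frame at a given resolution."""
    return width * height


def relative_load(name: str, baseline: str = "1280x1024") -> float:
    """Pixel count of `name` relative to `baseline` (a rough GPU-load proxy)."""
    return pixel_count(*RESOLUTIONS[name]) / pixel_count(*RESOLUTIONS[baseline])


for name, (w, h) in RESOLUTIONS.items():
    print(f"{name}: {pixel_count(w, h):,} pixels, {relative_load(name):.2f}x 1280x1024")
```

1680x1050 pushes about 35% more pixels than 1280x1024, and 1920x1200 pushes about 76% more. This helps explain why the CPU's influence fades as resolution rises.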

In my two prior reviews of the Radeon HD5770, I voiced my concerns about the DirectX 10 benchmarks for Crysis. They just seem unnaturally low, for the increase in visual quality that you get by moving up from DirectX 9. In DX10 only the highest performing boards get close to an average frame rate of 30FPS. It seems like we've gone back in time, back to when only two or three very expensive video cards could run Crysis with all the eye candy turned on. I guess we'll have to wait until CryEngine3 comes out, and is optimized for the current generation of graphics APIs.

Putting two 5770s in CrossFireX starts to get this game moving, and here we get to see three cards performing together as they should. The scaling isn't quite as impressive without anti-aliasing turned on; at 1920x1200, 2x nets you a 61% increase, and 3x gets you a 132% increase over a single card.

Add in some anti-aliasing, 4X to be exact, and the scaling improves a bit. This makes sense, as more of the processing load is transferred from the CPU to the GPU. Even so, Crysis is one of those games that really stresses the CPU, and I was able to observe additional gains by bumping my CPU clock up a notch or two. I couldn't leave it there and still get the full stability that a series of benchmark runs requires, but I wanted to satisfy my curiosity about whether more CPU power would improve the results, and it did. At 1920x1200, two cards give a 63% advantage over the lone card, and three bring it all the way up to 148%. Note to NVIDIA: a three-card HD5770 setup is basically double the performance of a GTX285. Two-way and three-way CrossFireX worked without a hitch in this benchmark.

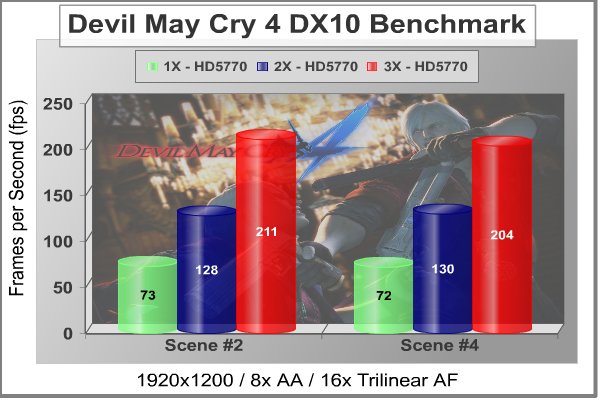

In our next section, Benchmark Reviews tests with Devil May Cry 4 Benchmark. Read on to see how a blended high-demand GPU test with low video frame buffer demand will impact our test products.

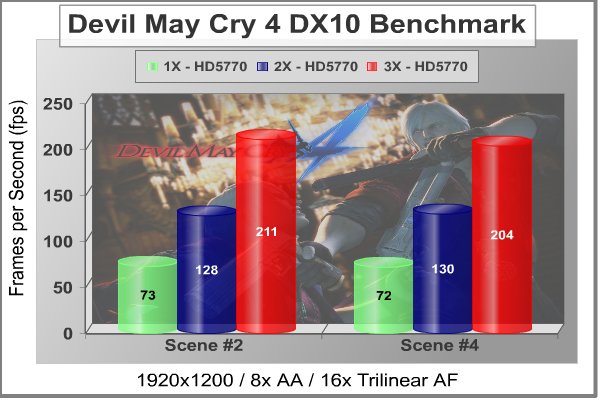

Devil May Cry 4 Benchmark

Devil May Cry 4 was released for the PC platform in 2008 as the fourth installment of the Devil May Cry video game series. DMC4 is a direct port from the PC platform to console versions, which operate at the native 720P game resolution with no other platform restrictions. Devil May Cry 4 uses the refined MT Framework game engine, which has been used for many popular Capcom game titles over the past several years.

MT Framework is an exclusive seventh-generation game engine built for games developed for the PlayStation 3 and Xbox 360, and for PC ports. MT stands for "Multi-Thread", "Meta Tools" and "Multi-Target". Capcom originally meant to use an outside engine, but none matched its specific requirements for performance and flexibility. Games using the MT Framework are originally developed on the PC and then ported to the other two console platforms.

The PC version features a special bonus called Turbo Mode, which gives the game a slightly faster speed, and a new difficulty called Legendary Dark Knight Mode. The PC version also has both DirectX 9 and DirectX 10 modes for the Windows XP, Vista, and Windows 7 operating systems.

It's always nice to be able to compare the results we receive here at Benchmark Reviews with the results you test for on your own computer system. Usually this isn't possible, since differences in settings and configurations make it difficult to match one system to the next; plus, you have to own the game or benchmark tool we used.

Devil May Cry 4 fixes this, and offers a free benchmark tool available for download. Because the DMC4 MT Framework game engine is rather low-demand for today's cutting edge video cards, Benchmark Reviews uses the 1920x1200 resolution to test with 8x AA (highest AA setting available to Radeon HD video cards) and 16x AF.

The Radeon HD5770 CrossFireX setup scaled extremely well in this application. Scene two got increases of 75% and 189% for two and three cards, respectively. Scene four racked up 81% and 183%. Those are very impressive results for a game that wasn't born yesterday; several times in the past, it has been clear that newer cards, and the drivers for those cards, were not optimized for this game. Not so here: when's the last time you saw FPS averages above 200 at a resolution of 1920x1200, with all the knobs turned up to the max? At all resolutions, I also saw something unusual: the FPS charts were, for the most part, straight horizontal lines. You don't see that very often, either!

Even though it carries NVIDIA's "The Way It's Meant to be Played" logo, the combination of ATI's latest micro-architecture and the Capcom MT Framework turned in absolutely insane frame rates, well beyond what is required for this game. Note to ATI: this combo beat out an HD5970 running on an i7 platform. That's not supposed to happen...

Our next benchmark of the series is for a very popular FPS game that rivals Crysis for world-class graphics in exotic locales.

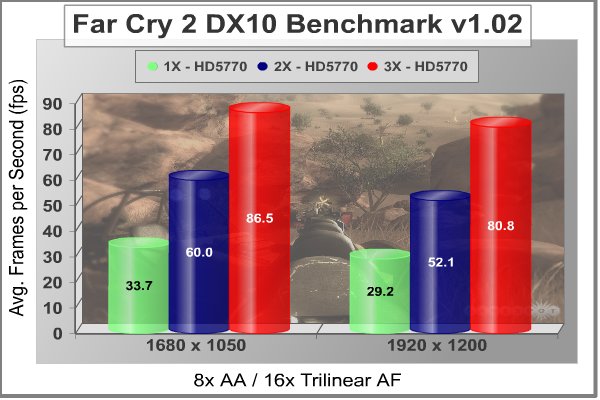

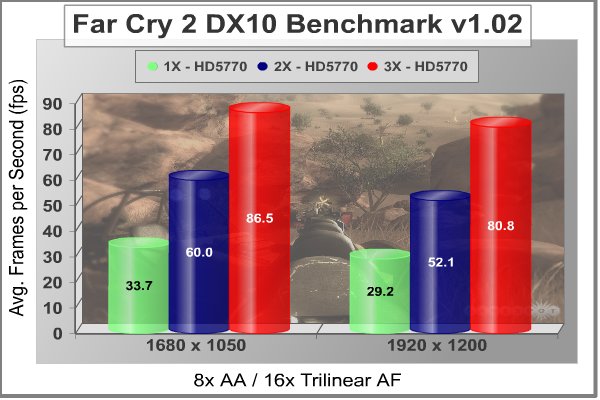

Far Cry 2 Benchmark Results

Ubisoft developed Far Cry 2 as a sequel to the original, but with a very different approach to game play and story line. Far Cry 2 features a vast world built on Ubisoft's new game engine called Dunia, meaning "world", "earth" or "living" in Farsi. Far Cry 2 is set in a fictional Central African landscape, in a modern-day timeline.

The Dunia engine was built specifically for Far Cry 2 by the Ubisoft Montreal development team. It delivers realistic semi-destructible environments, special effects such as dynamic fire propagation and storms, real-time day-and-night cycles with sunlight and moonlight, a dynamic music system, and non-scripted enemy A.I. actions.

The Dunia game engine takes advantage of multi-core processors as well as multiple processors, and supports DirectX 9 as well as DirectX 10. Only 2 or 3 percent of the original CryEngine code is re-used, according to Michiel Verheijdt, Senior Product Manager for Ubisoft Netherlands. Additionally, the engine is less hardware-demanding than CryEngine 2, the engine used in Crysis. However, it should be noted that Crysis delivers greater character and object texture detail, as well as more destructible elements within the environment. For example, trees break into many smaller pieces and buildings break down to their component panels. Far Cry 2 also supports the amBX technology from Philips. With the proper hardware, this adds effects like vibrations, ambient colored lights, and fans that generate wind effects.

There is a benchmark tool in the PC version of Far Cry 2, which offers an excellent array of settings for performance testing. Benchmark Reviews used the maximum settings allowed for our tests, with the resolution set to 1920x1200. The performance settings were all set to 'Very High', Render Quality was set to 'Ultra High' overall quality level, 8x anti-aliasing was applied, and HDR and Bloom were enabled. Of course DX10 was used exclusively for this series of tests.

Although the Dunia engine in Far Cry 2 is slightly less demanding than CryEngine 2 in Crysis, the strain appears to be extremely close. In Crysis we didn't dare to test AA above 4x, whereas we use 8x AA and 'Ultra High' settings in Far Cry 2. Here we also see the opposite effect when switching our testing to DirectX 10. Far Cry 2 seems to have been optimized, or at least written with a clear understanding of DX10 requirements.

Using the short 'Ranch Small' time demo (which yields the lowest FPS of the three tests available), we once again see some excellent performance scaling at both widescreen (16:10) resolutions. Particularly at 1920x1200, the 3x result of 80.8 FPS is 177% better than one card on its own.
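Readers checking the chart can work backward from a quoted gain to the single-card figure it implies. Here is a minimal sketch, assuming the 177% is computed as (3-card FPS / 1-card FPS - 1) x 100. Because the percentage is rounded, the result is an approximation and not a value read from the chart. `implied_baseline` is a hypothetical name.

```python
def implied_baseline(fps_multi: float, gain_pct: float) -> float:
    """Single-card FPS implied by a multi-card FPS and its quoted % gain."""
    return fps_multi / (1.0 + gain_pct / 100.0)


# Far Cry 2, Ranch Small, 1920x1200: three cards averaged 80.8 FPS, a 177% gain.
single = implied_baseline(80.8, 177.0)
print(f"implied single-card average: {single:.1f} FPS")
```

The implied single-card average comes out at roughly 29 FPS.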

So far, all the gaming applications are turning in some impressive results with CrossFireX, either in 2X or 3X format. It's no longer a case of, "Well, it does better, BUT...", we're seeing results that are close to the theoretical maximums here. Our next benchmark of the series puts our CrossFireX collection against some fresh new graphics in the newly released Resident Evil 5 benchmark.

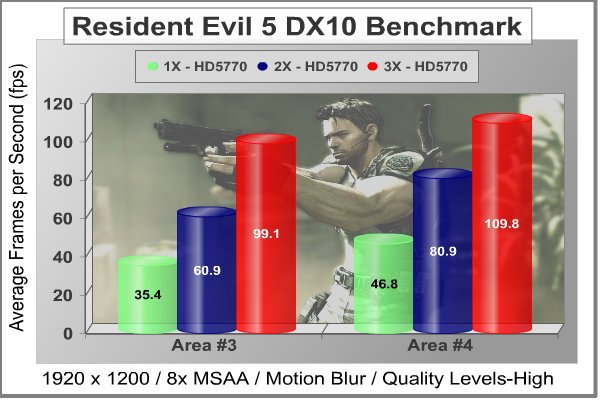

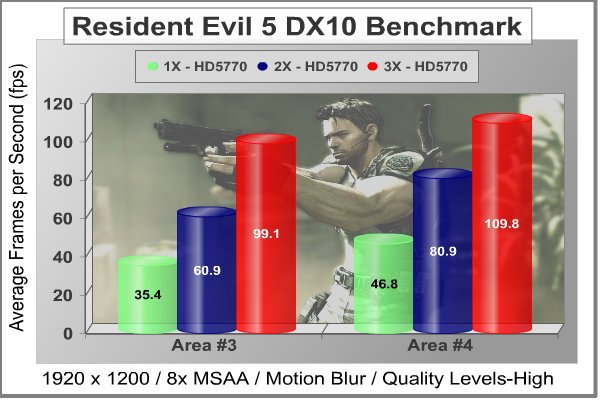

Resident Evil 5 Benchmark Results

PC gamers get the ultimate Resident Evil package in this new PC version with exclusive features including NVIDIA's new GeForce 3D Vision technology (wireless 3D Vision glasses sold separately), new costumes and a new mercenaries mode with more enemies on screen. Delivering an infinite level of detail, realism and control, Resident Evil 5 is certain to bring new fans to the series. Incredible changes to game play and the world of Resident Evil make it a must-have game for gamers across the globe.

Years after surviving the events in Raccoon City, Chris Redfield has been fighting the scourge of bio-organic weapons all over the world. Now a member of the Bioterrorism Security Assessment Alliance (BSAA), Chris is sent to Africa to investigate a biological agent that is transforming the populace into aggressive and disturbing creatures. New cooperatively-focused game play revolutionizes the way that Resident Evil is played. Chris and Sheva must work together to survive new challenges and fight dangerous hordes of enemies.

From a gaming performance perspective, Resident Evil 5 promises the "Next Generation of Fear": groundbreaking graphics that use an advanced version of Capcom's proprietary game engine, MT Framework, which powered the hit titles Devil May Cry 4, Lost Planet and Dead Rising. The game also uses a wider variety of lighting to enhance the challenge ("Fear Light as much as Shadow"): lighting effects provide a new level of suspense as players attempt to survive in both harsh sunlight and extreme darkness. As usual, we maxed out the graphics settings on the benchmark version of this popular game, to put the hardware through its paces. Much like Devil May Cry 4, it's relatively easy to get good frame rates in this game, so take the opportunity to turn up all the knobs and maximize the visual experience.

The Resident Evil 5 benchmark tool provides a graph of continuous frame rates and averages for each of four distinct scenes. In addition, it calculates an overall average for the four scenes. Scenes depicting Area #3 and Area #4 consistently turn in the lowest scores during our testing, and, as usual, we report on the most demanding and revealing tests.

Area #3 seemed to favor the 3X configuration and Area #4 seemed to favor the 2X approach to CrossFireX. Taking the best results from both tests, two cards achieved a 73% performance gain in Area #4, and three cards achieved a 180% gain in Area #3. Once again, either two or three HD5770s in CrossFireX clean house, with frame rates that are beyond reproach. I feel duty bound to mention that these results are in close competition with the HD5970, at least when both are running stock clocks.

Our next benchmark of the series features a strategy game with photorealistic, modern warfare graphics: World in Conflict.

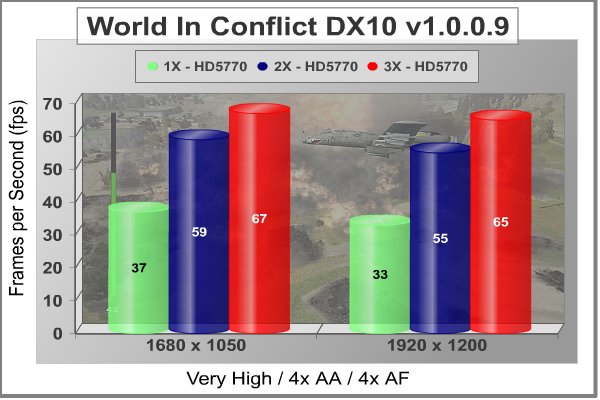

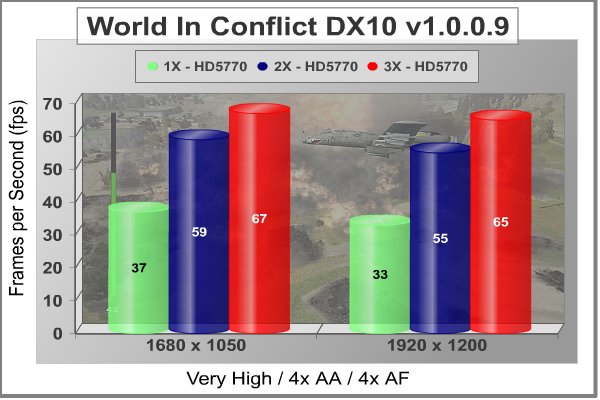

World in Conflict Benchmark Results

The latest version of Massive's proprietary MassTech engine uses DX10 technology, features advanced lighting and physics effects, and allows for a full 360-degree range of camera control. The MassTech engine scales down to accommodate a wide range of PC specifications; if you've played a modern PC game within the last two years, you'll be able to play World in Conflict.

World in Conflict's FPS-like control scheme and 360-degree camera make its action-strategy game play accessible to strategy fans and fans of other genres... if you love strategy, you'll love World in Conflict. If you've never played strategy, World in Conflict is the strategy game to try.

Based on the test results charted below, it's clear that WiC doesn't place a limit on the maximum frame rate (to prevent a waste of power), which is good for full-spectrum benchmarks like ours but bad for electricity bills. The average frame rate is shown for each resolution in the chart below. World in Conflict just begins to place demands on the graphics processor at the 1680x1050 resolution, so we'll skip the low-res testing.

OK, there was bound to be one game where the performance scaling wasn't everything it could be. This is it: World In Conflict. Two cards do pretty well, offering 59% and 67% improvements over a single GPU solution at the two most popular widescreen resolutions, but the results with three cards are quite disappointing. You don't need the numbers to figure this one out; the chart tells the story in graphic detail.

Our next-to-last benchmark of the series is the first one to bring DirectX 11 into the mix, a situation that only the current crop of ATI cards is capable of handling.

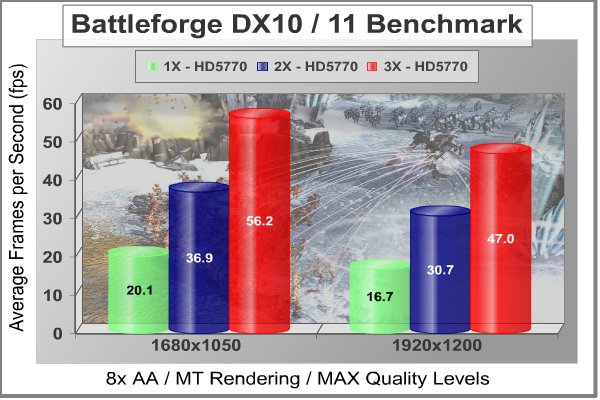

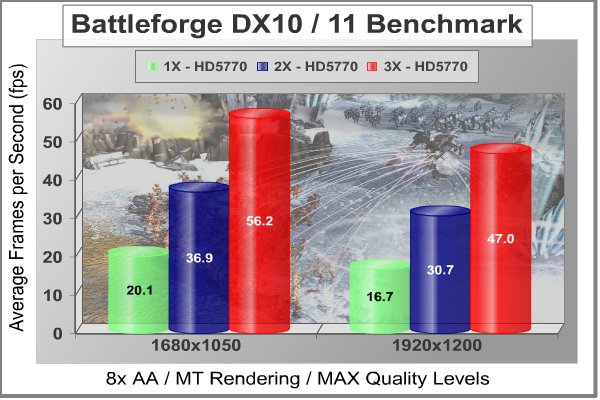

BattleForge - Renegade Benchmark Results

In anticipation of the release of DirectX 11 with Windows 7, and coinciding with the release of AMD's ATI HD 5870, BattleForge has been updated to run using DirectX 11 on supported hardware. Well, what does all of this actually mean, you may ask? It gives us a sip of water from the Holy Grail of game design and computing in general: greater efficiency! What does this mean for you? It means that the game will demonstrate a higher level of performance for the same processing power, which in turn allows more to be done with the game graphically. In layman's terms, the game will have a higher frame rate and new ways of creating graphical effects, such as shadows and lighting. The culmination of all of this is a game that both runs and looks better. The game is running on a completely new graphics engine that was built for BattleForge.

BattleForge is a next-gen real time strategy game, in which you fight epic battles against evil along with your friends. What makes BattleForge special is that you can assemble your army yourself: the units, buildings and spells in BattleForge are represented by collectible cards that you can trade with other players. BattleForge is developed by EA Phenomic. The studio was founded by Volker Wertich, father of the classic "The Settlers" and the SpellForce series. Phenomic has been an EA studio since August 2006.

BattleForge was released on Windows in March 2009. On May 26, 2009, BattleForge became a Play 4 Free branded game with only 32 of the 200 cards available. In order to get additional cards, players will now need to buy points on the BattleForge website. The retail version comes with all of the starter decks and 3,000 BattleForge points.

The BattleForge benchmark itself is a tough one once all the settings are maxed out. The graphics are suitably impressive, even though they were developed primarily on the DirectX 10.1 platform. In case you are wondering, these results are with SSAO "On" and set to the Very High setting. We've seen good results from ATI Radeon cards on this benchmark before, so I'm not surprised that CrossFireX works like it should on this game.

Just two HD5770 cards get the average frame rate above 30 FPS; three of them give you headroom to spare at the highest settings possible. Two GPUs scale up with an 84% performance increase, and three GPUs net you a 180% improvement over the single card. It's interesting that the scaling is almost identical at both the 1680x1050 and 1920x1200 resolutions. This hasn't typically been the case for the other benchmarks.

In our next section, we look at the newest DX11 benchmark, straight from Russia and the studios of Unigine. Their latest benchmark is called "Heaven", and it has some very interesting and non-typical graphics. So, let's take a peek at what Heaven looks like.

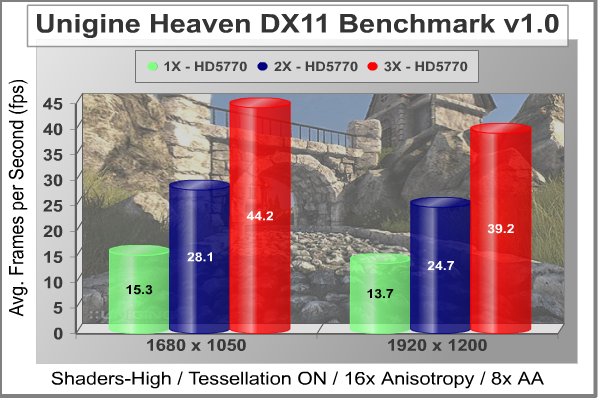

Unigine - Heaven Benchmark Results

Unigine Corp. released the first DirectX 11 benchmark, "Heaven", which is based on its proprietary Unigine engine. The company has already made a name among overclockers and gaming enthusiasts for uncovering the realm of true GPU capabilities with its previously released "Sanctuary" and "Tropics" demos. Their benchmarking capabilities are coupled with the striking visual integrity of the refined graphic art.

"Heaven" benchmark excels at providing the following key features:

- Native support of OpenGL, DirectX 9, DirectX 10 and DirectX 11
- Comprehensive use of tessellation technology
- Advanced SSAO (screen-space ambient occlusion)
- Volumetric cumulonimbus clouds generated by a physically accurate algorithm
- Dynamic simulation of changing environment with high physical fidelity
- Interactive experience with fly/walk-through modes
- ATI EyeFinity support

The distinguishing feature of the benchmark is hardware tessellation, a scalable technology for automatically subdividing polygons into smaller and finer pieces, so that developers can give their games a more detailed look almost free of charge in terms of performance. Thanks to this procedure, the detail of the rendered image finally approaches the boundary of realistic visual perception.
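The core idea of subdivision can be shown with a simplified CPU-side sketch of uniform midpoint subdivision, in which each triangle becomes four. This is a swapped-in illustration and not how Heaven or DirectX 11 actually do it. DX11 hardware tessellation runs in the hull shader, fixed-function tessellator, and domain shader stages with per-patch tessellation factors, and none of that is modeled here. All names are hypothetical.

```python
Point = tuple[float, float, float]
Triangle = tuple[Point, Point, Point]


def midpoint(a: Point, b: Point) -> Point:
    """Point halfway between two vertices."""
    return ((a[0] + b[0]) / 2, (a[1] + b[1]) / 2, (a[2] + b[2]) / 2)


def subdivide(tri: Triangle) -> list[Triangle]:
    """Split one triangle into four by connecting its edge midpoints."""
    a, b, c = tri
    ab, bc, ca = midpoint(a, b), midpoint(b, c), midpoint(c, a)
    return [(a, ab, ca), (ab, b, bc), (ca, bc, c), (ab, bc, ca)]


def tessellate(tri: Triangle, levels: int) -> list[Triangle]:
    """Apply uniform subdivision `levels` times, giving 4**levels triangles."""
    triangles = [tri]
    for _ in range(levels):
        triangles = [child for parent in triangles for child in subdivide(parent)]
    return triangles


base: Triangle = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
for level in range(4):
    print(level, len(tessellate(base, level)))
```

The triangle count grows as 4 to the power of the level. On their own, the new vertices lie flat on the original surface, so a real pipeline also displaces them (for example, from a height map) to add visible detail.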

Unigine Corp. is an international company focused on top-notch real-time 3D solutions. The development studio is located in Tomsk, Russia. The main activity of Unigine Corp. is the development of Unigine, a cross-platform engine for virtual 3D worlds. Since the project started in 2004, it has attracted the attention of different companies and groups of independent developers, because Unigine is always on the cutting edge of real-time 3D visualization and physics simulation technologies.

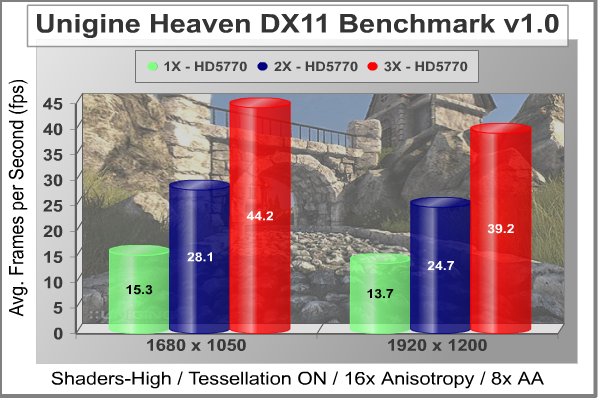

As we look at newer and newer benchmarks, from studios that have had an ongoing relationship with ATI, we are seeing better and better scaling factors. Once again, we turned on all the eye candy for this benchmark, because we didn't want to miss a trick. I have to say, the graphics were extremely impressive; the scenarios they scripted really showcase the new technologies available, particularly tessellation.

The CrossFireX scaling numbers are the best we've seen yet, and are really pushing the theoretical limit in this benchmark. At 1680x1050, the three card setup gives a 189% improvement over a single card. 200% is the limit, and we're within spitting distance of that, here. What's beyond belief is that this kind of performance is being realized with the first official driver release from ATI.
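The "200% is the limit" remark follows from perfect linear scaling, where each added card contributes one more card's worth of throughput. Here is a minimal sketch of two common ways to express how close a result comes to that limit, using gains quoted in this review. The helper names are hypothetical, and the two metrics are standard arithmetic rather than anything the benchmarks report themselves.

```python
def ideal_gain(cards: int) -> float:
    """Maximum % gain over one card if every added card contributed fully."""
    if cards < 2:
        raise ValueError("scaling needs at least two cards")
    return (cards - 1) * 100.0


def fraction_of_ideal(gain_pct: float, cards: int) -> float:
    """Measured gain as a fraction of the theoretical limit (189 / 200 for 3 cards)."""
    return gain_pct / ideal_gain(cards)


def per_card_efficiency(gain_pct: float, cards: int) -> float:
    """Total throughput relative to one card, divided by the number of cards."""
    if cards < 1:
        raise ValueError("cards must be positive")
    return (1.0 + gain_pct / 100.0) / cards


# Gains quoted in this review (percent over a single HD5770).
quoted = {
    "Heaven, 3 cards": (189.0, 3),
    "BattleForge, 2 cards": (84.0, 2),
    "BattleForge, 3 cards": (180.0, 3),
}
for label, (gain, cards) in quoted.items():
    print(f"{label}: {fraction_of_ideal(gain, cards):.1%} of ideal gain, "
          f"{per_card_efficiency(gain, cards):.1%} per-card efficiency")
```

By the first measure, the 189% Heaven result reaches 94.5% of the ideal gain.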

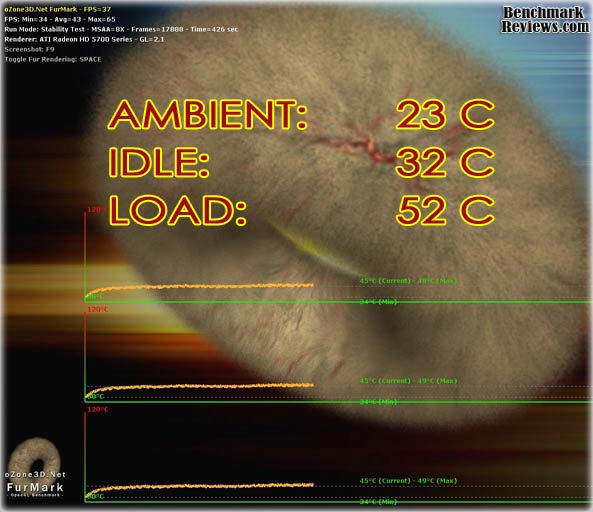

In our next section, we investigate the thermal performance of the Radeon HD5770, and see if that half-size 40nm GPU die still runs cool, when two and three cards are stacked one directly on top of one another. The GPU cooler for this card showed that it had lots of headroom in our earlier tests, so I don't anticipate the usual overheating problems one would typically see in a multi-GPU arrangement. But, that's why we test...just to make sure.

Radeon HD5770 CrossFireX Temperature

It's hard to know exactly when the first video card got overclocked, and by whom. What we do know is that it's hard to imagine a computer enthusiast or gamer today that doesn't overclock their hardware, or in this case, throw two or three of them together into one chassis. Of course, not every video card has the headroom. Some products run so hot that they can't suffer any higher temperatures than they generate straight from the factory. This is why we measure the operating temperature of the video card products we test.

We've already seen in our previous reviews of Juniper-based video cards that the half-pint chip runs impressively cool, paired with fairly basic cooling components. Now that we're dealing with an extraordinary situation, we need an extra margin of error in the cooling department. Normally, the stock blower runs at about 1200 RPM, and increases with higher loads to somewhere between 1600 and 1700 RPM, depending on how hard you push the card in its stock configuration. I wanted to see how cool I could keep the GPU, so I clicked on the "Enable Manual Fan Control" check box for each card in ATI Overdrive, and zoomed all the fans up to 100%, which I found out is 4000 RPM. Loud, yes? Effective, yes? Would I eventually end up trying to turn it down, until it made a difference in stability, yes? For now, I'm just happy that overkill settings are available in the factory driver package, and I don't have to worry at all about any of the GPUs overheating.

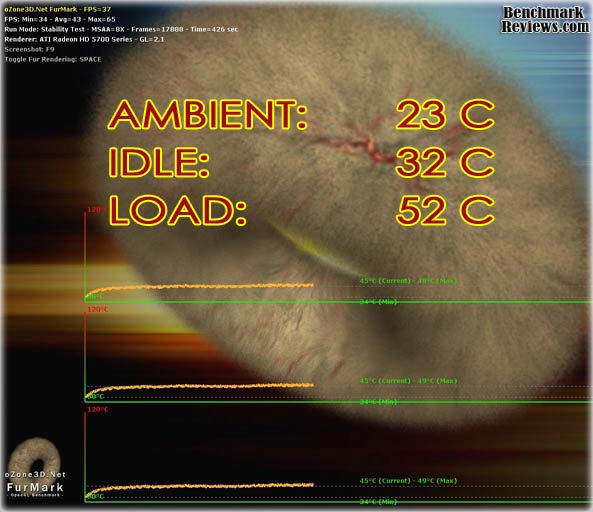

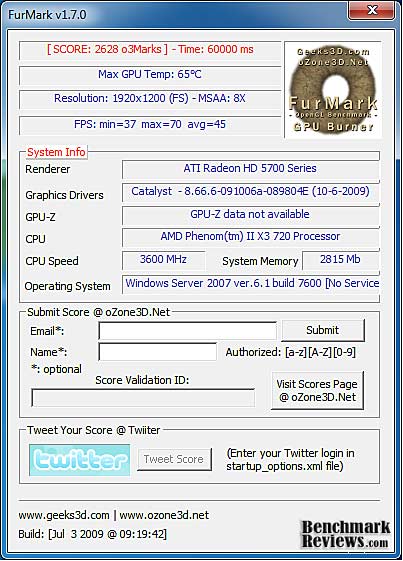

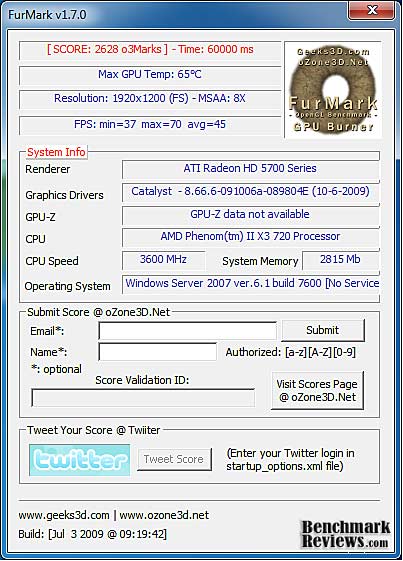

To begin testing, I use GPU-Z to measure the temperature at idle as reported by the GPU. Next I use FurMark 1.7.0 to generate maximum thermal load and record GPU temperatures at high-power 3D mode. The ambient room temperature remained stable at 23C throughout testing. All three ATI Radeon HD5770 video cards recorded 32C in idle 2D mode, and increased to 52C after 20 minutes of stability testing in full 3D mode, at 1920x1200 resolution and the maximum MSAA setting of 8X. I don't need to tell you that 52C is an astonishingly low temperature for a 3-GPU setup getting kicked around by FurMark. The cooling package on the Radeon HD5770 can take everything you can throw at it. I also did one run with all the fans set on AUTO, and the three GPUs only hit 65C, which is still a reasonable temperature, particularly for a multi-GPU system.

One thing about the HD5770 cards deserves mentioning: the reference cooler we all saw at launch is being replaced on some cards with a version more akin to the unit we saw on the HD5750. This arrangement forgoes the benefit of exhausting most of the heated air to the outside of the case, which is not ideal in a multi-GPU setup. Unfortunately, if the better-performing cooler remains available, it will probably command a price premium.

FurMark is an OpenGL benchmark that heavily stresses and overheats the graphics card with fur rendering. The benchmark offers several options allowing the user to tweak the rendering: fullscreen / windowed mode, MSAA selection, window size, duration. The benchmark also includes a GPU Burner mode (stability test). FurMark requires an OpenGL 2.0 compliant graphics card with a lot of GPU power! As an oZone3D.net partner, Benchmark Reviews offers a free download of FurMark to our visitors.

FurMark does two things extremely well: it drives the thermal output of any graphics processor higher than any other application or video game, and it does so consistently every time. While FurMark is not a true benchmark tool for comparing different video cards, it still works well for comparing one product against itself using different drivers or clock speeds, or for testing the stability of a GPU. But in the end, it's a rather limited tool.

In our next section, we discuss electrical power consumption and learn how well (or poorly) this type of video card setup will impact your utility bill...

VGA Power Consumption

Life is not as affordable as it used to be, and items such as gasoline, natural gas, and electricity all top the list of resources which have exploded in price over the past few years. Add to this the limit of non-renewable resources compared to current demands, and you can see that the prices are only going to get worse. Planet Earth needs our help, and needs it badly. With forests becoming barren of vegetation and snow-capped poles quickly turning brown, the technology industry has a new attitude towards suddenly becoming "green". I'll spare you the powerful marketing hype that I get from various manufacturers every day, and get right to the point: your computer hasn't been doing much to help save energy... at least up until now.

To measure isolated video card power consumption, Benchmark Reviews uses the Kill-A-Watt EZ (model P4460) power meter made by P3 International. A baseline test is taken without a video card installed inside our computer system, which is allowed to boot into Windows and rest idle at the login screen before power consumption is recorded. Once the baseline reading has been taken, the graphics card is installed and the system is again booted into Windows and left idle at the login screen. Our final loaded power consumption reading is taken with the video card running a stress test using FurMark. Below is a chart with the isolated video card power consumption (not system total) displayed in Watts for each specified test product:

Video Card Power Consumption by Benchmark Reviews

| VGA Product Description (sorted by combined total power) | Idle Power | Loaded Power |
|---|---|---|
| NVIDIA GeForce GTX 480 SLI Set | 82 W | 655 W |
| NVIDIA GeForce GTX 590 Reference Design | 53 W | 396 W |
| ATI Radeon HD 4870 X2 Reference Design | 100 W | 320 W |
| AMD Radeon HD 6990 Reference Design | 46 W | 350 W |
| NVIDIA GeForce GTX 295 Reference Design | 74 W | 302 W |
| ASUS GeForce GTX 480 Reference Design | 39 W | 315 W |
| ATI Radeon HD 5970 Reference Design | 48 W | 299 W |
| NVIDIA GeForce GTX 690 Reference Design | 25 W | 321 W |
| ATI Radeon HD 4850 CrossFireX Set | 123 W | 210 W |
| ATI Radeon HD 4890 Reference Design | 65 W | 268 W |
| AMD Radeon HD 7970 Reference Design | 21 W | 311 W |
| NVIDIA GeForce GTX 470 Reference Design | 42 W | 278 W |
| NVIDIA GeForce GTX 580 Reference Design | 31 W | 246 W |
| NVIDIA GeForce GTX 570 Reference Design | 31 W | 241 W |
| ATI Radeon HD 5870 Reference Design | 25 W | 240 W |
| ATI Radeon HD 6970 Reference Design | 24 W | 233 W |
| NVIDIA GeForce GTX 465 Reference Design | 36 W | 219 W |
| NVIDIA GeForce GTX 680 Reference Design | 14 W | 243 W |
| Sapphire Radeon HD 4850 X2 11139-00-40R | 73 W | 180 W |
| NVIDIA GeForce 9800 GX2 Reference Design | 85 W | 186 W |
| NVIDIA GeForce GTX 780 Reference Design | 10 W | 275 W |
| NVIDIA GeForce GTX 770 Reference Design | 9 W | 256 W |
| NVIDIA GeForce GTX 280 Reference Design | 35 W | 225 W |
| NVIDIA GeForce GTX 260 (216) Reference Design | 42 W | 203 W |
| ATI Radeon HD 4870 Reference Design | 58 W | 166 W |
| NVIDIA GeForce GTX 560 Ti Reference Design | 17 W | 199 W |
| NVIDIA GeForce GTX 460 Reference Design | 18 W | 167 W |
| AMD Radeon HD 6870 Reference Design | 20 W | 162 W |
| NVIDIA GeForce GTX 670 Reference Design | 14 W | 167 W |
| ATI Radeon HD 5850 Reference Design | 24 W | 157 W |
| NVIDIA GeForce GTX 650 Ti BOOST Reference Design | 8 W | 164 W |
| AMD Radeon HD 6850 Reference Design | 20 W | 139 W |
| NVIDIA GeForce 8800 GT Reference Design | 31 W | 133 W |
| ATI Radeon HD 4770 RV740 GDDR5 Reference Design | 37 W | 120 W |
| ATI Radeon HD 5770 Reference Design | 16 W | 122 W |
| NVIDIA GeForce GTS 450 Reference Design | 22 W | 115 W |
| NVIDIA GeForce GTX 650 Ti Reference Design | 12 W | 112 W |
| ATI Radeon HD 4670 Reference Design | 9 W | 70 W |

* Results are accurate to within +/- 5W.

The three Radeon HD5770 cards pulled 77 (196-119) watts at idle and 388 (507-119) watts when running full out, using the test method outlined above. That works out to 26 watts and 129 watts per card, which is a little above the factory numbers of 18W per card at idle and 108W per card under load. I attribute most of that to manually setting the fans to a constant 100%, and to the load that FurMark put on the CPU. As I mentioned before, these are very reasonable power numbers for a 3-GPU setup, and well within the range that most PSUs can provide. There's no need for a 1,000 watt power supply to run these at full power; I made do with my trusty single-rail 750W Corsair. I even had one PCI-E cable to spare.
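The per-card figures above follow directly from the baseline-subtraction method described earlier. Here is a minimal sketch that reproduces that arithmetic with the wall readings quoted in this section. The helper names are hypothetical, and splitting the total evenly across the three cards is an assumption, since the meter only sees the whole system.

```python
def isolated_power(system_watts: float, baseline_watts: float) -> float:
    """Video card draw: wall reading with cards installed minus the no-card baseline."""
    return system_watts - baseline_watts


def per_card(isolated_watts: float, cards: int) -> float:
    """Average draw per card, assuming the load is shared evenly."""
    return isolated_watts / cards


BASELINE_W = 119  # system with no video card installed (reading quoted above)
idle_total = isolated_power(196, BASELINE_W)  # three cards at idle
load_total = isolated_power(507, BASELINE_W)  # three cards under FurMark

print(f"idle: {idle_total} W total, {per_card(idle_total, 3):.0f} W per card")
print(f"load: {load_total} W total, {per_card(load_total, 3):.0f} W per card")
```

Each wall reading is accurate to within +/- 5 W, so in the worst case a difference of two readings can be off by as much as 10 W. Split three ways, that is a little over 3 W per card.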

Radeon HD5770 CrossFireX Final Thoughts

AMD is slowly working towards a future vision of graphics computing, as is their main competitor, Intel. They both believe that integrating graphics processing with the CPU provides benefits that can only be achieved by taking the hard road. For now, the only thing we can see is their belief; the roadmap is both sketchy and proprietary. One look at the size of the Juniper GPU die and it starts to look more like a possibility than a pipe dream, though.

AMD's latest wonder chip, named Llano, uses 32nm technology to incorporate 1 billion transistors on a single die. No one is saying yet what the split is between CPU and GPU, but 50-50 is probably close. Given that this review covers the performance of 3 billion transistors dedicated to graphics processing and connected together in CrossFireX, you might wonder what relevance there is to a discussion of AMD's Fusion architecture.

Won't enthusiasts always want the highest possible performance? Yes. The question then becomes how best to achieve that performance. The brute-force method is to throw as many transistors as you can muster at the GPU socket, and turn up the clocks. But what if it turns out that, to really harness the power of multiple GPUs working in tandem, you need a supervisor? Today, we talk about the CPU bottlenecking the system when we have an overabundance of GPU power. Maybe what is really happening is that the GPUs aren't working as effectively as they could be with a smarter and more energetic leader.

In order to lead a massive force, you need a command, control, and communication infrastructure. We've already seen how the integration of a PCI-E controller into the Intel Core i5 chip improved the graphics performance of the LGA 1156 platform. I'm not sure how to combine CPUs and GPUs in one package as an APU and still retain the ability to easily scale system performance like we can with CrossFireX, but I am sure that it is possible, and I hope to see it one day.

Radeon HD5770 CrossFireX Conclusion

The performance of one ATI HD5770 is adequate for most game titles. In stock form, or with a modest overclock, it can compete with an NVIDIA GTX260-216. However, these are mid-range GPUs with only half the horsepower of a Cypress chip. If you want more performance, you don't have to get rid of your HD5770; just add one or two more. In most of our tests, the scaling in CrossFireX has been exemplary. In more than one case, three HD5770 cards working together conspired to beat the massive new HD5970. Now that's performance! Sadly, on two benchmarks, World in Conflict and 3DMark Vantage, scaling was poor or even negative. I'm sure ATI is working on a fix for this, and Futuremark is working on a DX11 benchmarking suite, so I don't expect this situation to last.
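To put numbers on "scaling", one common convention (our assumption; the review does not spell out a formula) is speedup = frame rate with n cards divided by frame rate with one card, and efficiency = speedup divided by n, where 1.0 would be perfectly linear. Negative scaling shows up when adding a card lowers the frame rate. The sketch below is a minimal Python illustration; the function names are hypothetical, and the usage feeds it the single-card and CrossFire figures a reader reports in the comments at the end of this page (24.1 and 42.1 fps).

```python
def scaling(fps_by_cards: dict[int, float]) -> dict[int, tuple[float, float]]:
    """Return {card_count: (speedup, efficiency)} relative to the 1-card result.

    Assumed convention: speedup = fps(n) / fps(1); efficiency = speedup / n.
    """
    base = fps_by_cards[1]  # the single-card result is the reference point
    return {
        n: (fps / base, fps / base / n)
        for n, fps in sorted(fps_by_cards.items())
    }


def negative_steps(fps_by_cards: dict[int, float]) -> list[int]:
    """Card counts whose frame rate fell below the next-smaller configuration tested."""
    counts = sorted(fps_by_cards)
    return [cur for prev, cur in zip(counts, counts[1:])
            if fps_by_cards[cur] < fps_by_cards[prev]]


if __name__ == "__main__":
    reader_result = {1: 24.1, 2: 42.1}  # fps figures from the reader comment
    for n, (speedup, eff) in scaling(reader_result).items():
        print(f"{n} card(s): {speedup:.2f}x speedup, {eff:.0%} efficiency")
    # 1 card(s): 1.00x speedup, 100% efficiency
    # 2 card(s): 1.75x speedup, 87% efficiency
```

Feeding in one-, two-, and three-card results for a single benchmark would show at a glance whether the third card pays its way, and `negative_steps` would flag any configuration that went backwards.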

One performance disadvantage of CrossFireX™ is that only the memory of one card is effectively available: each card keeps its own copy of the working data, so the RAM on the other cards adds nothing to the usable total. So, in all of our tests, we were working with 1GB of GDDR5. At our maximum testing resolution of 1920x1200, that wasn't a problem. At 2560x1600, or with multiple monitors, it could have become a handicap. On the whole, we went looking for excellent performance scaling and we found it. We also found very reasonable power requirements and operating temperatures, both well-established traits of the entire HD5xxx series.

The appearance of the product is, unfortunately, not improved by anything close to a factor of three. However, there is a certain look of muscularity that you get when three of these black and red devices sit side by side. So, appearance scaling is maybe 1.75x...?

The construction of the Radeon HD5770 cards wasn't really an issue in this test, other than their ability to perform in close quarters. The fan shrouds lacked the tapered housing that was introduced on the second generation of NVIDIA cards. All the same, I saw no difference in temperature across the three cards at any time during my testing. As for the construction quality of the software that made this test possible, there were some dicey moments when first configuring three-way CrossFireX™. The Catalyst Control Center software caused the screen to flicker several times, and once the screen went dark for several seconds. I did some last-minute testing with the 9.11 version of CCC, and this behavior was much improved.

As I said before, I had a hard time thinking about features in the context of this review. I mean, CrossFireX IS a feature, so now I'm talking about the features of a feature. Well, this feature is all about performance, and I think we have a winner here. In CCC, you have the option of turning CrossFireX on or off, and when it is on, you can choose to use all three cards or only two of them. Maybe ATI is planning for a PhysX-style GPU physics implementation, and they needed the option of keeping one card out of the fire...

As of late November, Newegg is selling a few Radeon HD5770 cards at $164.99; three of them will set you back less than $500. Consider that this combination smokes all of the following cards: GTX285, HD5870, and GTX295, and beats the HD5970 in a couple of scenarios with the Far Cry 2 and Resident Evil 5 benchmarks. If that's not a bargain, I don't know what is. There is an additional cost in system complexity and partial incompatibility, however. You don't want to go this route if your favorite game is World in Conflict, at least until its performance is addressed in a driver update.
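The "less than $500" figure, and the buy-one-now upgrade path, are simple multiplication. As a minimal Python sketch, assuming the $164.99 per-card price quoted above and ignoring tax and shipping (the helper names are hypothetical), working in whole cents avoids floating-point rounding on currency:

```python
PRICE_CENTS = 16_499  # $164.99 per HD5770 (late-November Newegg price from the review)


def total_cost_cents(cards: int, unit_cents: int = PRICE_CENTS) -> int:
    """Pre-tax, pre-shipping cost of `cards` identical cards, in cents."""
    return cards * unit_cents


def dollars(cents: int) -> str:
    """Format an integer number of cents as a dollar string."""
    return f"${cents // 100}.{cents % 100:02d}"


for n in (1, 2, 3):
    print(f"{n} card(s): {dollars(total_cost_cents(n))}")
# 1 card(s): $164.99
# 2 card(s): $329.98
# 3 card(s): $494.97
```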

Radeon HD5770 CrossFireX™ earns a Gold Tachometer Award because it delivers an unprecedented level of performance at the $500 mark. Not only that, it's budget-friendly in another way: you don't have to come up with all the cash up front. You can buy one card now and have a guaranteed upgrade path. What makes this combination more worthy than past CrossFireX combinations is its scalability with first-generation drivers. This is the first time an entirely new architecture has come out of the gate with this level of stability and performance in a multi-GPU arrangement.

Pros:

+ Unmatched CrossFireX performance scaling

+ Mature performance levels at product launch

+ In some cases, outperforms an HD5970

+ Lots of cooling headroom available

+ Requires only one 6-pin power connector per card

+ Can be used with modest power supplies

+ Easy payment plan available

+ ATI Overdrive simple to use

+ Most heat is exhausted outside the case

Cons:

- Only 1GB of memory available, 3GB paid for

- Industry migrating towards a lower-cost cooler design

Ratings:

- Performance: 9.50

- Appearance: 9.00

- Construction: 9.00

- Functionality: 9.50

- Value: 9.50

Final Score: 9.30 out of 10.
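The published final score is consistent with a plain average of the five category ratings: (9.50 + 9.00 + 9.00 + 9.50 + 9.50) / 5 = 46.5 / 5 = 9.30. The review does not state its scoring formula, so treating it as an unweighted mean is our assumption; a minimal Python check:

```python
ratings = {
    "Performance": 9.50,
    "Appearance": 9.00,
    "Construction": 9.00,
    "Functionality": 9.50,
    "Value": 9.50,
}

# Assumption: the final score is the unweighted mean of the category ratings.
final_score = sum(ratings.values()) / len(ratings)
print(f"Final Score: {final_score:.2f} out of 10.")  # Final Score: 9.30 out of 10.
```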

Questions? Comments? Benchmark Reviews really wants your feedback. We invite you to leave your remarks in our Discussion Forum.


Comments

scores with the same set-up: at 1920x1200 with all settings maxed out and 8xAA, I got only 24.1 fps with a single card and 42.1 fps with the CrossFire setup.

Specs:

GPU: Sapphire ATI 5770 Vapor-X, CrossFire configuration with one CrossFire bridge connected

Processor: Thuban 1090T, H50 water-cooled

Motherboard: MSI 790GX-65G

HDD: Samsung 500GB 7200rpm 3.5in

Memory: OCZ Obsidian DDR3-1600, 4GB (2x2GB)

PSU: Thermaltake Litepower 600W

Monitor: Sony 32in Bravia LCD TV

Software: DirectX 11, Catalyst 10.11, Windows 7

Can somebody please help? Could one of my components be a bottleneck?