| The Fast-Enough Budget Computer: Built and Tested |

| Written by David Ramsey | |

| Thursday, 24 March 2011 | |

The Fast Enough Computer: Built and Tested

Recently, I wrote an op-ed piece here titled "The Fast Enough Computer". I argued that for gamers, low- to mid-range components provided the most bang for the buck and could readily play most modern games. The metric I used was "30 frames per second at 1680x1050". In this follow-up, I build a system based on the components I thought would be adequate and test the result with several modern games.

In the original piece, I also noted that improvements in frame rate beyond 30fps were generally imperceptible, and that frame rates in excess of 60fps were wasted because most monitors don't refresh the screen any faster. Well, those assertions did not go unchallenged! If you read the comments on that article, you'll see that while some agreed with me, there were also those who claimed that my hardware suggestions were far too modest for serious gaming, and that they could indeed discern a visual difference between 60 and 120fps.

It's folks like those who keep companies producing things like Radeon 6990 video cards. And they should feel completely free to keep on buying them, because my recommendations aren't for everyone: they're for those who either have a limited budget (not everyone can afford an NVIDIA GTX580) or just want to get the most bang for their buck. Well, that first article was all theoretical; this article is all about empiricism. Let's see how my Fast Enough Computer actually performs with real games.

The Goal

Just to be clear: I'm not trying to build the ultimate gaming box; rather, my goal is to build, for the least amount of money, a gaming system that will play most modern games at an average frame rate of 30fps or higher at a resolution of 1680x1050 pixels. A secondary goal is that the system should be easily upgradeable to increase its performance so that it can last at least a few years without requiring major expenditures.

The System

This is actually a less-than-optimal time to design such a system: Intel may have some lower-end Sandy Bridge parts coming out, and AMD's forthcoming "Bulldozer" processors may change everything. But I can only build with what you can actually buy right now. I'm going with AMD despite their CPU horsepower disadvantage relative to Intel for three main reasons:

So here are the main components of the system:

AMD's Black Edition Phenom II X2 processors are both inexpensive and highly overclockable; in fact, when the 560 Black Edition was introduced, AMD touted its relevance to "extreme overclockers" (i.e. the liquid-nitrogen guys) who could push things as far as they wanted and only risk smoking a relatively inexpensive processor!

The Radeon 6850 is a solid mid-range video card that offers performance that equals or exceeds the NVIDIA GTX460 cards in most games at a slightly lower price, and also offers the option of triple-monitor Eyefinity gaming. Benchmark Reviews has done enough tests to show that expensive, high-speed, low-latency memory has a minimal (if any) effect on your gaming experience, so I used generic DDR3-1333, and the optical and hard drives were whatever I had lying around.

The motherboard was an ASUS Crosshair III Formula, but any 790FX or 890FX motherboard would work. GX-series motherboards would provide the same base performance but have only 22 PCI-E lanes, which severely limits your upgrade potential since they're unsuitable for multiple video card setups.

The Fast Enough Computer runs Windows 7 Home Premium 64-bit, with AMD's Catalyst Software Suite 11.2 and the latest game profiles available as of the time of this test. So let's get to it...

Testing and Results

If you want to read detailed tests on the processor and video card used in the Fast Enough Computer, Benchmark Reviews tested the AMD Phenom II X2 560 Black Edition CPU here and the Sapphire Radeon 6850 video card here (the AMD 560BE CPU is identical to the AMD 565BE CPU I used, except that its stock clock speed is 3.3GHz rather than 3.4GHz). I wanted to see how overclocking and hardware upgrades affected performance, so I tested five different hardware configurations:

Both AMD CPUs were tested with the stock heat sink. I was able to easily overclock the 565BE to 3.9GHz by increasing the multiplier to 19.5x; no voltage tweaks were required. The processor would boot and run at 4.0GHz (20x multiplier) but would fail stress testing. Poking around the interwebs, I see that the maximum air-cooled overclock of the 560/565 seems to be about 4.1GHz, so I don't see much point in spending extra money for a third-party CPU cooler that would only enable another 200MHz or so of clock speed.

I increased the core clock on the Sapphire Radeon HD6850 from the stock 775MHz to 900MHz and the memory clock from 1000MHz to 1050MHz. These speeds seem to be reachable by any retail Radeon HD6850 video card, but dedicated overclockers might be able to squeeze out a bit more performance.

The games I benchmarked were Crysis Warhead, Street Fighter IV, Aliens vs. Predator, Battlefield: Bad Company 2, and BattleForge. I tested at 1680x1050, and in each case I set the game settings as high as I could consistent with my 30fps-average goal. Aliens vs. Predator and Street Fighter IV were tested with dedicated benchmarking utilities released by the game vendors; BattleForge was tested with its built-in benchmark tool, Crysis Warhead was tested with the HOC Crysis Warhead benchmark tool, and Battlefield: Bad Company 2 was tested using FRAPS. I didn't include synthetic benchmarks like Unigine Heaven or 3DMark Vantage since I was only interested in game performance.

DX10: Crysis Warhead

When the original Crysis debuted in late 2007, it quickly garnered a reputation for bringing the most powerful systems to their knees. Even today, its CryEngine game engine needs a lot of hardware thrown at it to get good frame rates at decent resolutions without having to turn off many of the visual effects.
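Before getting to the results, the overclocking figures quoted earlier in this section can be sanity-checked with some simple arithmetic. The sketch below is only an illustration: it assumes the usual 200MHz reference clock for Phenom II processors (the article doesn't state it, though it is consistent with a 19.5x multiplier giving 3.9GHz), and the function names are made up for this example.

```python
# Assumption: 200MHz reference clock, which is consistent with the
# article's figures (19.5x -> 3.9GHz, 20x -> 4.0GHz).
REFERENCE_CLOCK_MHZ = 200.0


def cpu_clock_mhz(multiplier, reference_mhz=REFERENCE_CLOCK_MHZ):
    """Effective CPU clock in MHz: multiplier times reference clock."""
    return multiplier * reference_mhz


def percent_increase(stock, overclocked):
    """Percentage gain of an overclocked value over its stock value."""
    return (overclocked - stock) / stock * 100.0


if __name__ == "__main__":
    # CPU: stock 565BE (3.4GHz) versus the stable and unstable overclocks.
    for multiplier in (17.0, 19.5, 20.0):
        print(f"{multiplier}x -> {cpu_clock_mhz(multiplier):.0f}MHz")

    # Relative gains for the CPU and for the Radeon HD6850 clocks.
    print(f"CPU:        {percent_increase(3400, 3900):.1f}%")
    print(f"GPU core:   {percent_increase(775, 900):.1f}%")
    print(f"GPU memory: {percent_increase(1000, 1050):.1f}%")
```

Seen this way, the CPU and GPU core overclocks are both in the mid-teens percentage-wise, while the memory overclock is only about 5%, which is worth keeping in mind when reading the overclocked results.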

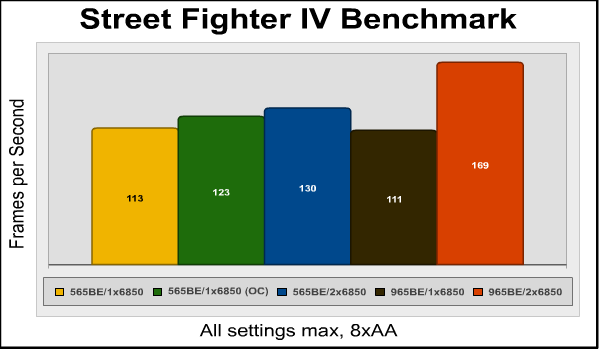

The Fast Enough Computer returned a solid 37 frames per second average in its base configuration. However, in the latter part of the "Airfield" demo, where Sykes emerges from the crashed cargo plane and jets are attacking the Exosuit, frame rates drop below 20fps (the minimum was 13fps, the maximum, 45fps), which made the visuals rather stuttery. Actually playing the game revealed that while it's broadly "playable", you'll frequently see the frame rate drop low enough to be irritating.

Turning off anti-aliasing increased the maximum frame rate recorded in the benchmark from 45 to 51fps, but surprisingly did not affect the minimum or average frame rates. The overclocked configuration increased the average frame rate a little over 10%, but surprisingly, there was almost no benefit from adding another 6850 in CrossFireX. Switching out the 565BE for a quad-core Phenom II 965 Black Edition gained a mere one frame per second, but adding CrossFireX into this mix cranked things up another nine frames per second, although the minimum frame rate was still only 15 frames per second.

In general, CryEngine games seem to "like" NVIDIA video cards better. Sadly, the 890FX chipset does not support SLI, so although you could use a single NVIDIA video card in the Fast Enough Computer, your only upgrade option would be to replace it with a faster card. Overall, I'd have to say that Crysis Warhead is a marginal proposition on the Fast Enough Computer, which just isn't Fast Enough for this game.

DX10: Street Fighter IV

Street Fighter IV is the latest in a series of games that began back in 1987. It uses a proprietary Capcom graphics engine, and despite its high-speed visuals, it just doesn't require much graphics horsepower...your netbook would probably make a perfectly adequate Street Fighter IV platform. Capcom makes a dedicated Street Fighter IV benchmark available so you can test your system, and that's what I ran.

With all visual settings at their maximums, the Street Fighter IV benchmark returned 113 frames per second. Overclocking and CrossFireX added a few percentage points, and going to a four-core processor actually slowed things down fractionally. However, CrossFireX proved synergistic with the Phenom II 975 processor, and frame rates shot up by over 50%. This phenomenon is something I'd see repeated in other tests.
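The benchmark utilities used in these tests report minimum, average, and maximum frame rates, and as the Crysis Warhead run showed, a healthy average can hide some ugly dips. Here is a minimal sketch of how such figures can be derived, assuming a log of per-frame render times in milliseconds. The function names and the sample data are hypothetical, and real tools may compute minimums over one-second windows rather than per frame as done here.

```python
def summarize_frame_times(frame_times_ms):
    """Return (min_fps, avg_fps, max_fps) from per-frame times in ms.

    The average is total frames divided by total time; the minimum and
    maximum are taken from the slowest and fastest individual frames.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    total_seconds = sum(frame_times_ms) / 1000.0
    avg_fps = len(frame_times_ms) / total_seconds
    min_fps = 1000.0 / max(frame_times_ms)  # slowest frame
    max_fps = 1000.0 / min(frame_times_ms)  # fastest frame
    return min_fps, avg_fps, max_fps


def meets_target(avg_fps, target_fps=30.0):
    """True if the average frame rate meets the 30fps goal used here."""
    return avg_fps >= target_fps


if __name__ == "__main__":
    # Hypothetical run: eight quick frames (20ms) and two slow ones (50ms).
    sample = [20.0] * 8 + [50.0] * 2
    min_fps, avg_fps, max_fps = summarize_frame_times(sample)
    print(f"min {min_fps:.1f}  avg {avg_fps:.1f}  max {max_fps:.1f}")
    print("meets 30fps average goal:", meets_target(avg_fps))
```

In this made-up sample the average comfortably clears 30fps even though the slowest frames run at only 20fps, which is the same pattern that made Crysis Warhead feel stuttery despite its 37fps average.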

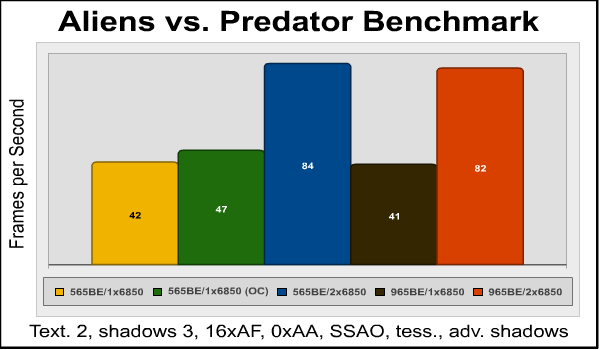

Testing and Results, Continued

We've gotten some pretty good DirectX 10 results, but how will the Fast Enough Computer handle DX11 games? Let's start by looking at the results from Aliens vs. Predator.

DX11: Aliens vs. Predator

This game uses the in-house Asura game engine from its developer, Rebellion. Rebellion provides a stand-alone benchmarking tool for this game (I love stand-alone benchmarking tools) which I used for testing. So how does our test system handle it?

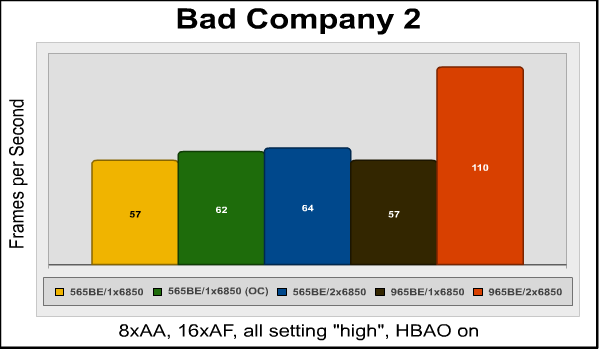

And the answer is "Pretty well!" The only thing I had to compromise on to get decent frame rates was anti-aliasing; turning it on dropped the frame rates for the stock, single-card configuration below 30fps average. Other advanced visual features like screen-space ambient occlusion (SSAO) and tessellation add to the visual appeal of the game with a minimal effect on the frame rate, and so I left them on.

Overclocking the CPU and video card brought frame rates up about 12 percent, to 47fps, but adding a couple more processor cores actually dropped the frame rate very slightly. While AvP doesn't make any real use of additional processor cores, it absolutely loves CrossFireX, with perfect 100% scaling (a doubling of frame rates) when another Radeon 6850 is added to the system. In a CrossFireX system, turning on 4xAA still drops the average frame rates significantly, to 57-58 frames per second, but that's still plenty fast enough for a very smooth visual experience.

DX11: Battlefield: Bad Company 2

I am not a big fan of FPS war games, but Battlefield: Bad Company 2 is good enough to suck me into its world. Although not a "pure" DX11 title (the main rendering is performed in DX10, but some DX11 visual features are added), it still offers highly detailed environments that contain a lot of destructible elements, which adds a lot to a battle game. Throw in a variety of combat locations and scenarios and decent A.I. for your squad mates and you have something that's become one of my favorite FPS games.

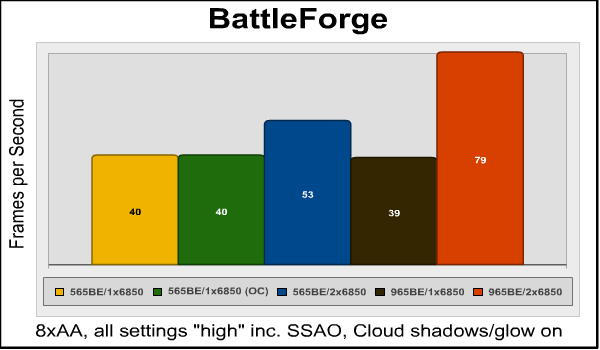

I expected this game to be problematic, at least on the base configuration, but a solid 57 frames per second average with 8xAA, horizon-based ambient occlusion (HBAO), and all settings on "high" proved me wrong. What's interesting here is that overclocking the CPU and video card didn't help much, nor did CrossFireX on the dual-core system, or a quad-core processor with a single Radeon 6850. But the combination of quad-core and two HD6850s? Wow! Frame rates virtually doubled. Apparently, the dual-core Phenom II 565BE can't process enough data to keep a CrossFireX system fed in this game. Granted, frame rates still increased a touch over 12% (57 to 64fps) on the dual-core system when moving to CrossFireX, but it's obvious that this game needs multiple cores and multiple video cards for best performance.

DX11: BattleForge

BattleForge has a handy built-in benchmark test so you can discover the best settings for your system. With 8x anti-aliasing and all other settings set to "high", the average frame rate was 40fps on the base system. BattleForge has an "auto multithreading" option that I left turned on, but as you can see it made no difference in frame rates, at least in this test.

This was the only game that was completely unaffected by overclocking; note that the frame rates are identical between the stock and overclocked systems. Although just doubling the number of processor cores has no effect on BattleForge performance, the extra cores do let the game make more effective use of multiple video cards in CrossFireX. With the dual-core 565 Black Edition CPU, adding another HD6850 video card increases frame rates by just over 32%, but when paired with a quad-core processor (which by itself has no effect on performance), frame rates double, duplicating the 100% scaling we saw previously with the Aliens vs. Predator benchmark.
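The scaling percentages quoted throughout these results are simple before-and-after arithmetic. As a rough sketch (the function names are mine, not from any benchmark tool), here is how the Battlefield: Bad Company 2 figure of "a touch over 12%" falls out of the 57 and 64fps averages, alongside what "100% scaling" means for a second video card. The 40-to-80fps case is an illustrative doubling, not a measured result.

```python
def scaling_percent(before_fps, after_fps):
    """Percentage change in frame rate after an upgrade."""
    return (after_fps - before_fps) / before_fps * 100.0


def crossfire_efficiency(single_fps, dual_fps):
    """Fraction of the ideal 2x speedup achieved by a second card.

    1.0 means frame rates doubled; 0.5 means the second card added nothing.
    """
    return dual_fps / (2.0 * single_fps)


if __name__ == "__main__":
    # Bad Company 2, dual-core CPU: 57fps with one card, 64fps with two.
    print(f"BC2 dual-core CrossFireX gain: {scaling_percent(57, 64):.1f}%")
    print(f"BC2 dual-core efficiency: {crossfire_efficiency(57, 64):.2f}")

    # Illustrative doubling (hypothetical numbers), i.e. 100% scaling.
    print(f"Doubling: {scaling_percent(40, 80):.0f}%")
    print(f"Doubling efficiency: {crossfire_efficiency(40, 80):.2f}")
```

Expressing CrossFireX results as a fraction of the ideal doubling makes it easier to compare games: a second card that yields only a 12% gain is doing barely more than half of what two cards could in theory deliver.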

Final Thoughts

I think I've proved my premise: you can build a pretty decent gaming machine with a lot of headroom for expansion without spending a lot of money. With the exception of Crysis Warhead, all games produced smooth, playable frame rates even on the base, non-overclocked system.

When I wrote the original "Fast Enough Computer" piece, the Steam hardware survey reported that the most common gaming resolution (for Steam games, anyway) was 1680x1050. At the conclusion of the testing I performed for this follow-up article, though, I noticed that 1680x1050 has been edged out by 1920x1080 as the most common resolution: 1920x1080 screens represent 18.86% of the total as compared to 18.25% for 1680x1050...so I may want to revisit this system and test with the higher resolution at some point. Looking at the test results, a few things stand out:

Building and testing this system was a real learning experience, and we hope you've found this article useful. We invite you to leave your remarks in our Discussion Forum.

Comments

Honestly though, I can't believe you wanted 3.4GHz x2 more than 3.0-3.2GHz x4. You even brought up in your review that the AMDs max out around ~4GHz! All their iterations with .1GHz stepping are pretty redundant once you get close enough to start nearing that barrier while overclocking.

Hmm... Actually I'm starting to like the gambler aspect of your original cpu choice. Ha!

Athlon II x2's are much rarer to unlock because a good portion of them are 'true' dual cores and there is nothing to unlock. Every once in a while though some people still get quads that have been disabled to create an x2.

Athlon II x3's also have a good unlock rate because they are all quads with a disabled 4th core.

1. It's cheaper, and this article is all about building cheap!

2. The extra cores do add extra performance, and I think that would be most visible in the Crysis test.

On top of that there are a couple of sub $50 quality PSUs that will handle this rig just as well, shaving quite a bit off the total cost.

As for the system settings for the evaluation I think adding a second graphics card is way off topic. It would be much more interesting to see if the 6850 really is the cheapest option at the chosen resolution. A comparison to a slightly cheaper GTX 550Ti and a considerably cheaper 5770 would show if you got the right pick. (I think the cheaper cards are sufficient for 1680x1050.)

The evaluation method of looking at average framerates in benchmarks really doesn't cut it at this level. The only useful method is to actually play the games and see what graphic quality settings can be used to provide the best experience with each system.

The 5770 is a nice card, but the 6850 is significantly faster, and even it was marginal in Crysis Warhead. Of course, everyone will have their own balance of price and performance.

I disagree, sort of. It all boils down to the definition of "later". Buying a second 6850 two years from now will probably provide less of a performance raise than buying a same cost latest generation card at that time.

Even if I had the option last summer I didn't spend USD 250 on a second GeForce7800GT to improve my graphics, but went for a USD 200 HD5770 instead.

Secondly one can argue that ability to upgrade isn't a defined part of the objective, but rather simply getting sufficient performance right now at the least possible starting cost.

Still, it's my personal opinion that adding a second graphics card is rarely the most cost effective upgrade path, unless it's done in a very short time span. (Then the extra card should really be added to the system cost. Not buying it directly is simply a form of credit.)

With the HD6790 hitting the shelves in a week I expect 5770 to become a rarity in a few months, so buying myself a second card once the single card becomes insufficient (in a year or so) might become problematic unless I go for a used card.

My main complaint about using CF in the test though is that it doesn't show the single 6850 to be the ultimate option for the initial build.

1.) The GeForce 7800GT is a 4 or 5 year old card, not a 2 year old card so your 2-year comparison is invalid.

2.) Just last summer I added an HD 4870 1GB to the one I already had. It only cost me $100 from newegg. With the 2 HD 4870s underfoot, I now have performance in HD 5870 territory because 2 separate HD 4870s are more powerful than a single HD 4870x2.

There was approximately a 2 year gap between GPU purchases. Do you honestly think that I could have gotten a better deal than $100 to completely catch up? I don't think so and believe me, I don't care about DX11 AT ALL at this point because it's still a rarity. Most games are still DX10 and most (if not all) online games are still DX9. The horsepower of these cards means that I CAN play Crysis Warhead at 1920x1080 at max settings (full AA, full AF) and have a buttery-smooth experience all the way through. ATi just keeps making CrossfireX better so the results with the HD 6xxx series should be even better than mine.

The 2-year limit is my arbitrary minimum expected usage span of a graphics solution before upgrading. In this case I felt no need to upgrade the graphics until considerably later because, as you note, DX9 kept up as the ultimate technical requirement. In the end I just needed a faster card, with some added features that I will be able to make use of once I also upgrade the OS.

"2. ... Do you honestly think that I could have gotten a better deal than $100 to completely catch up? I don't think so and believe me, I don't care about DX11 AT ALL ..."

Just as I was lucky in the development of games not needing any upgrade at all you're lucky that DX11 hasn't caught on as fast as DX9 did over DX8.1. The two reasons being that many gamers stick to Windows XP and consoles don't support DX10/11.

I, for one, don't expect this sluggish development to last.

"... the results with the HD 6xxx series should be even better than mine."

With the 7000-series expected to hit the shelves in time for X-mas I wonder how long one can wait before finding 6850 at reasonable prices out of stock.

I think my main point is that if you need to upgrade the graphics soon you either picked a too slow card to start with or there's a new feature around that isn't supported by the card you have. If you need to upgrade later it's a bit of a gamble whether a second similar card is a good option or not.

Result(1): Minimum= 28 FPS Average= 36 FPS Max= 61 FPS

and there yee go

Me, I'd be stuck with the $800 because my computer is ancient with IDE and AGP :P

You could have used the Phenom II 840 for a little less money and gotten the same results at least plus been using a quad core.

The crossfire upgrade is a solid option but your test proves a point that people overlook: only some games get a true benefit from multi-card video. Moving up to a bigger single card can cost nearly the same and will give across the board increases.

With that in mind the budget for the system could be further reduced by using a single card GPU and using an 870 based board. The performance is the same and the cost is lower.

Finally on the PSU, you can buy solid units for under $100 like the Antec Green series.

Overall a solid article with a premise that has been true for some time, luxury parts are not needed for a great gaming experience.

Shouldn't it have a fast enough for the other side of the fence too?

I couldn't care much because I'm way above minimum.

But in the matter of justice, this sounds like a paid article, propaganda for one brand, a one sided eye, what happened to the other eye?

But then you wake up, and realize that writers here at Benchmark Reviews are not paid at all, and write these articles for the love of their hobby and for the benefit of our readership. Well, that is, everyone except for ignorant helmet-wearing trolls like you who make us wish we had never spent the time to help give you an edge over the marketing hype.

Crawl back into the cave you came out of somewhere in Rio De Janeiro, Brazil.

Like Olin said, we do this for the fun of it, and none of us give a rat's sss about whether the Green Team or the Red team has the faster product this month. We just report what we find....

One thing I'd like to throw out there is that I have a perfectly good Nvidia 9800 GTX+ card from BFG (too bad they're gone). Understanding the GPU is considered a bottleneck to the entire gaming experience, would this card still be considered sufficient or should I just pony up the cash and make the move to the Radeon as suggested in the article?

Cheers!

Not the ideal situation but I can live with low-med until finances are better.

That little system ran just about every game I owned at the time in 1080p. Even with Crysis being the decisive heavyweight, it managed to crank an average of 40FPS in Crysis with my minimums being @27FPS. V-SYNC limited my highs to 60FPS. I don't know if my getting such better frame rates was an issue of the extra core, overclocking the mainboard to get the CPU up to 3.4 GHz, the way my XFX card handled it, or (most likely) some combination of them all.

Crossfire ran no problem with that motherboard, and it's taken a Bulldozer chip without issues. The system worked out so well that I had even built some similar builds for some friends who wanted budget builds at the time.

Your build idea certainly had merit, as proven by us both, but I think you overly focused on the Black Edition's unlocked multiplier and should have focused on cores. You could have bought an Athlon II X4 at the same prices you paid for the Black Edition Phenom II X2.