AMD Radeon HD6850 & HD6950 CrossFire Performance

Written by Steven Iglesias-Hearst

Monday, 27 June 2011

AMD Radeon HD 6850/6950 CrossFire Performance

To run today's PC games at high resolutions with all the settings maxed out, you need a high-end video card, or two mid/high-end video cards in CrossFire/SLI. The best thing about CrossFire/SLI is that you don't need to buy both cards at once, so you can spread out the cost of a system build, or simply wait until prices drop before you make your second purchase. Dual video card scaling has come a long way since its inception; in the early days CrossFire performance wasn't always what it was made out to be. However, our setups show near 90% scaling in most of our tests. This is great news for us budget enthusiasts, as not all of us can afford to fork out $600 up front for the highest-end equipment. In this article Benchmark Reviews provides a performance and cost analysis of an HD6850 CrossFire setup and an HD6950 CrossFire setup.

For this review we will be comparing single HD6850, HD6870 and HD6950 video cards to the CrossFire pairs in a mixture of DX10 / DX11 synthetic benchmarks and current games, to get a good idea of the benefit of running dual video cards. We will also look at other factors you need to consider when running a CrossFire setup, such as temperatures and power consumption. So without further delay, let's move on and get stuck in.

Full Disclosure: The product samples used in this article were provided by HIS and MSI.

The Cards: HIS HD6850 IceQ X Turbo

In this section we will have a good tour of the HIS HD6850 IceQ X Turbo video card and discuss its main features.

The HIS HD6850 IceQ X Turbo is packed in a relatively small package, about the size and shape of a shoe box. In the box you get a fairly standard bundle that includes an installation package (driver disk and manual), one molex to 6-pin power cable converter, a DVI to VGA adapter and a CrossFireX bridge.

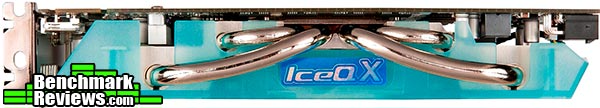

The HIS HD6850 IceQ X Turbo video card is fairly big, measuring 13.5 cm tall x 23.5 cm long, and is also a dual-slot design. Bang smack in the middle is a 92mm fan that effectively cools the overclocked HD6850 GPU while still remaining fairly quiet. The big, in-your-face, aqua-colored shroud that covers the IceQ X cooler is a little bit loud for my liking; its design is more of a metaphor, but it is quite functional at the same time. When installed in your system you only see the side view, which actually looks quite nice, as you will see below.

The HIS HD6850 IceQ X Turbo video card requires one 6-pin PCI-E power connector from your PSU. While HIS supply a Molex to 6-pin converter, it is strongly recommended that you use a PSU that actually has a 6-pin connector present to power this card. HIS also recommends using a 500W or greater PSU.

For output we have one DisplayPort connector, an HDMI port and two DVI-I connectors (top is single link and bottom is dual link). Bundled with the card you get a DVI to D-SUB adapter, so as far as connectors go HIS have really covered all the bases here. The top half of the PCI bracket has a small vent cut-out, but the design of the cooler exhausts the hot air inside the case rather than out here.

If you buy this video card, you will most likely have a side window in your PC case of choice. Until recently most video cards' aesthetic features were on the front only; that is no longer the case, as this side view demonstrates. The semi-transparent aqua shroud is quite pleasing on the eye when viewed from the side, where the visual metaphor really works.

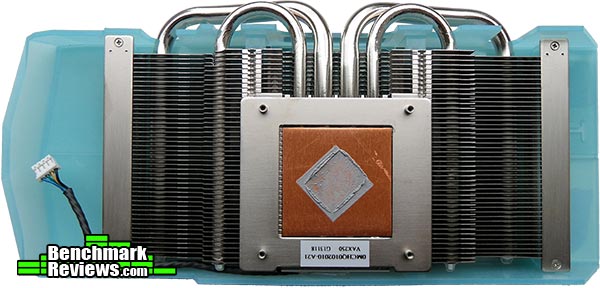

The IceQ X cooler is somewhat smaller than I had first suspected. A pair of 8mm and a pair of 6mm heatpipes take the heat from the GPU into the aluminum fin array, where it is met with cool air from the 92mm fan seen earlier. The shroud makes sure that the cool air is evenly distributed and not wasted. It's nice to see that HIS have not overdone it with the thermal interface, leaving not much room for improvement. The HIS HD6850 IceQ X Turbo 1GB video card article can be found here.

The Cards: HIS HD6870 IceQ X Turbo X

In this section we will have a good tour of the HIS HD6870 IceQ X Turbo X video card and discuss its main features.

The HIS HD6870 IceQ X Turbo X is packed in a relatively small package, about the size and shape of a shoe box. In the box you get a fairly standard bundle that includes an installation package (driver disk and manual), two molex to 6-pin power cable converters, a DVI to VGA adapter and a CrossFireX bridge.

The HIS HD6870 IceQ X Turbo X video card is fairly big, measuring 14.2 cm tall x 26 cm long, and is also a dual-slot design. Bang smack in the middle is a 92mm fan that effectively cools the overclocked HD6870 GPU while still remaining fairly quiet. The big, in-your-face, aqua-colored shroud that covers the IceQ X cooler is a little bit loud for my liking; its design is more of a metaphor, but it is quite functional at the same time. When installed in your system you only see the side view, which actually looks quite nice, as you will see below.

If you buy this video card, you will most likely have a side window in your PC case of choice. Until recently most video cards' aesthetic features were on the front only; that is no longer the case, as this side view demonstrates. The semi-transparent aqua shroud is quite pleasing on the eye when viewed from the side, and here the visual metaphor really works.

The HIS HD6870 IceQ X Turbo X video card requires two 6-pin PCI-E power connectors from your PSU. While HIS supply two Molex to 6-pin converters, it is strongly recommended that you use a PSU that actually has two 6-pin connectors present to power this card. HIS also recommends using a 500W or greater PSU.

For output we have two Mini DisplayPort connectors, an HDMI port and two DVI-I connectors (top is single link and bottom is dual link). Bundled with the card you get a DVI to D-SUB adapter, so as far as connectors go HIS have really covered all the bases here. The top half of the PCI bracket has a small vent cut-out, but the design of the cooler exhausts the hot air inside the case rather than out here.

The IceQ X cooler is somewhat smaller than I had first suspected. A pair of 8mm and a pair of 6mm heatpipes take the heat from the GPU into the aluminum fin array, where it is met with cool air from the 92mm fan seen earlier. The shroud makes sure that the cool air is evenly distributed and not wasted. It's nice to see that HIS have not overdone it with the thermal interface, leaving not much room for improvement.

With the IceQ cooler removed, a further two heatsinks are visible: in the middle of the card is a large memory heatsink, and off to the left is a smaller VRM heatsink. These two are in great locations to get some second-hand air directly through the aluminum fins of the main heatsink. Cooling the memory is not as essential as it used to be, but the benefit of such cooling is always welcome. The HIS HD6870 IceQ X Turbo X has only one CrossFireX connector, limiting it to a 2-way CrossFireX configuration. The HIS HD6870 IceQ X Turbo X 1GB video card article can be found here.

The Cards: HIS HD6950 IceQ X Turbo X

In this section we will have a good tour of the HIS HD6950 IceQ X Turbo X video card and discuss its main features.

The HIS HD6950 IceQ X Turbo X is packed in a relatively small package, about the size and shape of a shoe box. In the box you get a fairly standard bundle that includes an installation package (driver disk and manual), two molex to 6-pin power cable converters, a DVI to VGA adapter and a CrossFireX bridge.

The HIS HD6950 IceQ X Turbo X video card is fairly big, measuring 14.2 cm tall x 26 cm long and is also a dual slot design. Bang smack in the middle is a 92mm fan that effectively cools the overclocked HD6950 GPU while still remaining fairly quiet. The big, in your face, aqua colored shroud that covers the IceQ X cooler is a little bit loud for my liking, its design is more of a metaphor but it is quite functional at the same time. When installed in your system you only see the side view which actually looks quite nice, as you will see below.

For output we have two mini display port connectors, a HDMI port and two DVI-I connectors (top is single link and bottom is dual link). Bundled with the card you get a DVI to D-SUB adapter, so as far as connectors go HIS have really covered all the bases here. The top half of the PCI bracket has a small vent cut out, but the design of the cooler exhausts the hot air inside the case rather than out here.

It is likely going to be the case that if you buy this video card then you will most certainly have a side window in your PC case of choice. Until recently most video cards aesthetic features were on the front only, this is no longer the case here as this side view demonstrates. The semi transparent aqua shroud is quite pleasing on the eye when viewed from the side and here the visual metaphor really works. The HIS HD6950 IceQ X Turbo X video card requires two 6-pin PCI-E power connectors from your PSU, while HIS supply two molex to 6-pin converters it is strongly recommended that you use a PSU that actually has two 6-pin connectors present to power this card. HIS also recommends using a 500W or greater PSU.

The IceQ X cooler is somewhat smaller than I had first suspected. A pair of 8mm and a pair of 6mm heatpipes take the heat from the GPU and into the aluminum fin array where it is met with cool air from the 92mm fan seen earlier. The shroud makes sure that the cool air is evenly distributed and not wasted. It's nice to see that HIS have not over done it with the thermal interface leaving not much room for improvement.

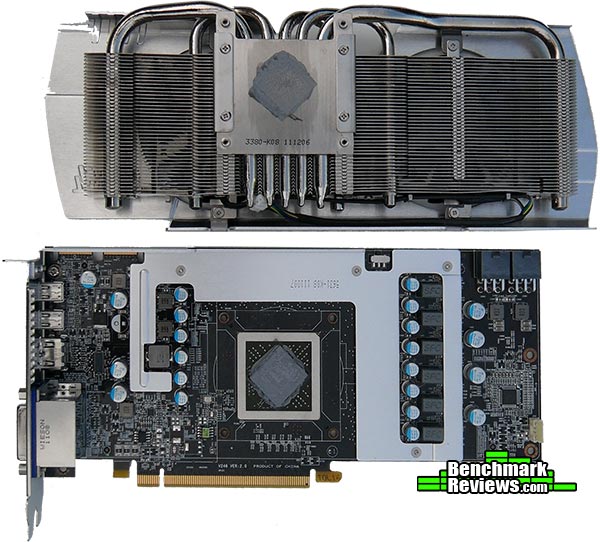

With the IceQ cooler removed a further two heatsinks are visible, in the middle of the card is a large memory heatsink and off to the left is a smaller VRM heatsink. These two are in great locations to get some second hand air directly through the aluminum fins of the main heatsink, cooling the memory is not as essential as it used to be but the benefit of such cooling is always welcome. The HIS HD6950 IceQ X Turbo X has two CrossFireX connectors opening up the possibilities for Tri/Quad CrossFireX configurations. The HIS HD6950 IceQ X Turbo X 2GB video card article can be found here. The Cards: MSI R6950 Twin Frozr III PE/OCLet's take a good look at the MSI R6950 Twin Frozr III PE/OC's exterior and discuss its main features. The image below shows a very professional looking video card, it measures 27cm long x 11.6cm tall and is a true dual slot design.

The guts of the MSI Twin Frozr III cooler are well hidden by the gun-metal color aluminum shroud. This shroud improves over the previous Twin Frozr II design and looks much less hideous and much more functional. Twin 80mm 'Propeller Blade' fans dominate the front face of the MSI R6950 Twin Frozr III PE/OC and complete the aesthetics.

The MSI R6950 Twin Frozr III PE/OC requires two 6-pin PCI-E power connectors from your PSU. While MSI includes two Molex to 6-pin adapter cables, it is highly recommended to use a PSU that already has these connectors present.

For connectivity we have 2 x mini display ports, a full size HDMI port and 2 x Dual Link DVI-I connectors. There is a little space left over for ventilation but the design of the cooler expels hot air inside your case rather than out here.

This side view of the MSI R6950 Twin Frozr III PE/OC video card shows how it will look installed inside your PC. The PCB is somewhat reinforced by the Memory/VRM heatsink, but not to the same extent as we have seen previously on the MSI GTX 560Ti Hawk, which seems somewhat strange considering this card is the longer of the two.

Removing the cooler assembly reveals yet another heatsink. MSI have dubbed this the 'Form-in-one' heatsink, and it covers all of the memory ICs and the power circuitry. There is a slight bit of overkill with the thermal paste here, but nothing that can't be remedied; the temperature recordings are good, so only us perfectionists need worry about cleaning and refining here. A little cut-out at the top of the formed heatsink reveals a fan profile switch that allows you to choose between 'Performance' (higher noise) and 'Silent' (higher temps). Needless to say, I left it on Performance.

The Twin Frozr III cooler on this card varies slightly from the Twin Frozr seen on MSI GTX 560Ti Hawk in that it has an extra 6mm heatpipe and it is slightly longer too. There are three 6mm heatpipes and two 8mm heatpipes to carry the heat away from the GPU and into the aluminum fin array.

The Twin Frozr III design incorporates two 80mm propeller blade PWM fans introduced by MSI on their Cyclone II coolers. These fans have a proven track record for cooling ability, but they are not the quietest when running at full speed. Thankfully you won't need to ramp them up to 100% to get optimum performance, as they cool very effectively on their auto cycle with minimal noise disruption. The MSI R6950 Twin Frozr III PE/OC 2GB video card article can be found here.


| Graphics Card | HIS HD6850 IceQ X Turbo 1GB | HIS HD6870 IceQ X Turbo X 1GB | HIS HD6850 / HIS HD6870 CrossFire | HIS HD6950 IceQ X TurboX 2GB | MSI R6950 Twin Frozr III PE/OC 2GB | MSI R6950 TF III / |
|---|---|---|---|---|---|---|
| GPU Cores | 960 | 1120 | 960 x 2 | 1408 | 1408 | 1408 x 2 |
| Core Clock (MHz) | 820 | 975 | 850 | 880 | 850 | 850 |
| Shader Clock (MHz) | N/A | N/A | N/A | N/A | N/A | N/A |
| Memory Clock (MHz) | 1100 | 1150 | 1100 | 1300 | 1300 | 1300 |
| Memory Amount | 1024MB GDDR5 | 1024MB GDDR5 | 1024MB GDDR5 | 2048MB GDDR5 | 2048MB GDDR5 | 2048MB GDDR5 |
| Memory Interface | 256-bit | 256-bit | 256-bit | 256-bit | 256-bit | 256-bit |

- AMD HIS Radeon HD6850 IceQ X Turbo (820 MHz GPU/1100 MHz vRAM - AMD Catalyst Driver 11.6)

- AMD HIS Radeon HD6870 IceQ X Turbo X (975 MHz GPU/1150 MHz vRAM - AMD Catalyst Driver 11.6)

- AMD HIS Radeon HD6850 IceQ X Turbo / HD6870 IceQ X Turbo X CrossFire (850 MHz GPU/1100 MHz vRAM - AMD Catalyst Driver 11.6)

- AMD HIS Radeon HD6950 IceQ X Turbo X (880 MHz GPU/1300 MHz vRAM - AMD Catalyst Driver 11.6)

- AMD MSI Radeon HD6950 Twin Frozr III PE/OC (850 MHz GPU/1300 MHz vRAM - AMD Catalyst Driver 11.6)

- AMD HIS HD 6950 IceQ X Turbo X / MSI HD6950 Twin Frozr III PE/OC CrossFire (850 MHz GPU/1300 MHz vRAM - AMD Catalyst Driver 11.6)

DX10: 3DMark Vantage

3DMark Vantage is a PC benchmark suite designed to test DirectX10 graphics card performance. FutureMark 3DMark Vantage is the latest addition to the 3DMark benchmark series built by FutureMark corporation. Although 3DMark Vantage requires NVIDIA PhysX to be installed for program operation, only the CPU/Physics test relies on this technology.

3DMark Vantage offers benchmark tests focusing on GPU, CPU, and Physics performance. Benchmark Reviews uses the two GPU-specific tests for grading video card performance: Jane Nash and New Calico. These tests isolate graphical performance, and remove processor dependence from the benchmark results.

- 3DMark Vantage v1.02

- Extreme Settings: (Extreme Quality, 8x Multisample Anti-Aliasing, 16x Anisotropic Filtering, 1:2 Scale)

3DMark Vantage GPU Test: Jane Nash

Of the two GPU tests 3DMark Vantage offers, the Jane Nash performance benchmark is slightly less demanding. In a short video scene the special agent escapes a secret lair by water, nearly losing her shirt in the process. Benchmark Reviews tests this DirectX-10 scene at 1680x1050 and 1920x1080 resolutions, and uses Extreme quality settings with 8x anti-aliasing and 16x anisotropic filtering. The 1:2 scale is utilized, and is the highest this test allows. By maximizing the processing levels of this test, the scene creates the highest level of graphical demand possible and sorts the strong from the weak.

Cost Analysis: Jane Nash (1680x1050)

Test Summary: In the Jane Nash benchmark the HD6850 CrossFire configuration gives an average 41% performance increase over the single card, which equates to 82% scaling at 1920x1080. The HD6950 CrossFire configuration gives an average 43% extra performance over its single-card counterpart, which equates to 86% scaling at 1920x1080. The CrossFire scaling is all well and good, but the cost to performance aspect isn't. It seems that running a CrossFire setup may be more expensive than it is worth, and you would be better off with a more powerful single card.
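The summary pairs a gain figure with a scaling figure (41% with 82%, 43% with 86%) without spelling out the arithmetic. One reading that reproduces those pairs, and it is only an assumption, is that the gain is measured as the share of the CrossFire result contributed by the second card, so a perfect doubling gives a 50% gain and 100% scaling. The Python sketch below works through that reading with made-up frame rates; the function names and fps values are illustrative and do not come from the charts.

```python
def gain_share_pct(single_fps: float, crossfire_fps: float) -> float:
    """Extra performance as a share of the CrossFire result (assumed reading)."""
    return 100.0 * (crossfire_fps - single_fps) / crossfire_fps


def scaling_pct(single_fps: float, crossfire_fps: float) -> float:
    """Scaling relative to a perfect doubling, where a 50% gain share is 100%."""
    return 2.0 * gain_share_pct(single_fps, crossfire_fps)


def conventional_increase_pct(single_fps: float, crossfire_fps: float) -> float:
    """The more common definition: increase relative to the single card."""
    return 100.0 * (crossfire_fps - single_fps) / single_fps


if __name__ == "__main__":
    # Hypothetical frame rates, not measured data from this review.
    single, crossfire = 59.0, 100.0
    print(f"gain share: {gain_share_pct(single, crossfire):.1f}%")
    print(f"scaling:    {scaling_pct(single, crossfire):.1f}%")
    print(f"increase over single card: "
          f"{conventional_increase_pct(single, crossfire):.1f}%")
```

With these placeholder numbers the gain share is 41% and the scaling 82%, while the conventional increase over the single card works out to about 69.5%, which is worth keeping in mind when comparing these figures with reviews that quote gains relative to the single card.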

3DMark Vantage GPU Test: New Calico

New Calico is the second GPU test in the 3DMark Vantage test suite. Of the two GPU tests, New Calico is the more demanding. In a short video scene featuring a galactic battleground, there is a massive display of busy objects across the screen. Benchmark Reviews tests this DirectX-10 scene at 1680x1050 and 1920x1080 resolutions, and uses Extreme quality settings with 8x anti-aliasing and 16x anisotropic filtering. The 1:2 scale is utilized, and is the highest this test allows. Using the highest graphics processing level available allows our test products to separate themselves and stand out (if possible).

Cost Analysis: New Calico (1680x1050)

Test Summary: The New Calico benchmark has a slightly different effect on our CrossFire sets. The HD6850 CrossFire configuration gives an average 35% performance increase over a single card, which equates to 70% scaling at 1920x1080. The HD6950 CrossFire setup gives on average 46% extra performance over its single-card contenders; this equates to 92% scaling at 1920x1080, which is an excellent result. In our cost analysis you will see that the cost per frame rating of the HD6850 CrossFire setup is very high compared with a single card, but the HD6950 CrossFire setup's cost per frame is very close to that of its single-card counterpart.
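Cost per frame is conventionally the purchase price divided by the average frame rate. The article does not state its exact formula, so treat the following Python sketch as an assumption rather than the method behind the charts. The prices and frame rates are placeholders, and the helper names are hypothetical. It also illustrates why a pair of identical cards only matches the single card's cost per frame when the frame rate fully doubles.

```python
def cost_per_frame(price_usd: float, avg_fps: float) -> float:
    """Dollars paid per frame per second of average performance."""
    if avg_fps <= 0:
        raise ValueError("avg_fps must be positive")
    return price_usd / avg_fps


def crossfire_cost_ratio(speedup: float, cards: int = 2) -> float:
    """CrossFire cost per frame divided by single-card cost per frame.

    Assumes every card costs the same; speedup is crossfire_fps / single_fps.
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return cards / speedup


if __name__ == "__main__":
    card_price = 200.0     # hypothetical price per card
    single_fps = 40.0      # hypothetical single-card result
    crossfire_fps = 72.0   # hypothetical two-card result (1.8x)

    single = cost_per_frame(card_price, single_fps)
    pair = cost_per_frame(2 * card_price, crossfire_fps)
    ratio = crossfire_cost_ratio(crossfire_fps / single_fps)
    print(f"single card: ${single:.2f} per frame")
    print(f"CrossFire:   ${pair:.2f} per frame")
    print(f"ratio:       {ratio:.2f}x")
```

Under these assumptions a ratio of 1.0 means the pair costs the same per frame as one card, and anything less than a full doubling of the frame rate pushes the ratio above 1.0.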


DX10: Street Fighter IV

Capcom's Street Fighter IV is part of the now-famous Street Fighter series that began in 1987. The 2D Street Fighter II was one of the most popular fighting games of the 1990s, and now gets a 3D face-lift to become Street Fighter 4. The Street Fighter 4 benchmark utility was released as a novel way to test your system's ability to run the game. It uses a few dressed-up fight scenes where combatants fight against each other using various martial arts disciplines. Feet, fists and magic fill the screen with a flurry of activity. Due to the rapid pace, varied lighting and the use of music this is one of the more enjoyable benchmarks. Street Fighter IV uses a proprietary Capcom SF4 game engine, which is enhanced over previous versions of the game.

Using the highest quality DirectX-10 settings with 8x AA and 16x AF, a mid to high end card will ace this test, but it will still weed out the slower cards out there.

- Street Fighter IV Benchmark

- Extreme Settings: (Very High Quality, 8x AA, 16x AF, Parallel rendering On, Shadows High)

Cost Analysis: Street Fighter IV (1680x1050)

Test Summary: The Street Fighter IV benchmark doesn't need dual video cards to produce high frame rates, but it does demonstrate the scaling effect of our CrossFire sets very well. The HD6850 CrossFire configuration gives an average 41% performance increase over a single card, which equates to 82% scaling at 1920x1080. The HD6950 CrossFire setup gives on average 39% extra performance over its single-card contenders; this equates to 78% scaling at 1920x1080. In our cost analysis you will see that the cost per frame rating of the HD6950 CrossFire setup is very high compared with a single card, but the HD6850 CrossFire setup's cost per frame is very close to that of its single-card counterpart.


DX11: Aliens vs Predator

Aliens vs. Predator is a science fiction first-person shooter video game, developed by Rebellion, and published by Sega for Microsoft Windows, Sony PlayStation 3, and Microsoft Xbox 360. Aliens vs. Predator utilizes Rebellion's proprietary Asura game engine, which had previously found its way into Call of Duty: World at War and Rogue Warrior. The self-contained benchmark tool is used for our DirectX-11 tests, which push the Asura game engine to its limit.

In our benchmark tests, Aliens vs. Predator was configured to use the highest quality settings with 4x AA and 16x AF. DirectX-11 features such as Screen Space Ambient Occlusion (SSAO) and tessellation have also been included, along with advanced shadows.

- Aliens vs Predator

- Extreme Settings: (Very High Quality, 4x AA, 16x AF, SSAO, Tessellation, Advanced Shadows)

Cost Analysis: Aliens vs Predator (1680x1050)

Test Summary: In the Aliens vs. Predator benchmark we see great potential from our CrossFire sets. The HD6850 CrossFire configuration gives an average 45% performance increase over a single card, which equates to 90% scaling at 1920x1080. The HD6950 CrossFire setup gives on average 49% extra performance over its single-card contenders; this equates to 98% scaling at 1920x1080, which is an excellent result indeed. In our cost analysis you will see that the cost per frame rating of the HD6850 CrossFire setup is higher compared with a single card, but the HD6950 CrossFire setup's cost per frame is so close to that of its single-card counterpart that it is hard to believe. This is a great result for CrossFire scaling.


DX11: Battlefield Bad Company 2

The Battlefield franchise has been known to demand a lot from PC graphics hardware. For Battlefield: Bad Company 2, DICE (Digital Illusions CE) has paired its Frostbite-1.5 game engine with the Destruction-2.0 feature set. Battlefield: Bad Company 2 features destructible environments using Frostbite Destruction-2.0, and adds gravitational bullet drop effects for projectiles shot from weapons at a long distance. The Frostbite-1.5 game engine used in Battlefield: Bad Company 2 consists of DirectX-10 primary graphics, with improved performance and softened dynamic shadows added for DirectX-11 users.

At the time Battlefield: Bad Company 2 was published, DICE was also working on the Frostbite-2.0 game engine. This upcoming engine will include native support for DirectX-10.1 and DirectX-11, as well as parallelized processing support for 2-8 parallel threads. This will improve performance for users with an Intel Core-i7 processor. Unfortunately, the Extreme Edition Intel Core i7-980X six-core CPU with twelve threads will not see full utilization.

In our benchmark tests of Battlefield: Bad Company 2, the first three minutes of action in the single-player raft night scene are captured with FRAPS. Relative to the online multiplayer action, these frame rate results are nearly identical to daytime maps with the same video settings. The Frostbite-1.5 game engine in Battlefield: Bad Company 2 appears to equalize our test set of video cards, and despite AMD's sponsorship of the game it still plays well using any brand of graphics card.

- Battlefield: Bad Company 2

- Extreme Settings: (Highest Quality, HBAO, 8x AA, 16x AF, 180s Fraps Single-Player Intro Scene)

Cost Analysis: Battlefield: Bad Company 2 (1680x1050)

Test Summary: The Battlefield: Bad Company 2 benchmark is not the most demanding; you really don't need to run CrossFire in this game. The HD6850 CrossFire configuration gives an average 45% performance increase over a single card, which equates to 90% scaling at 1920x1080. The HD6950 CrossFire setup gives on average 40% extra performance over its single-card contenders; this equates to 80% scaling at 1920x1080, which is an excellent result. In our cost analysis you will see that you can save a lot of money by going with a lower-end card or CrossFire setup if this is your main game. The good news is that you can rest assured that your video card won't be the cause of your lag in BF: BC2.


DX11: BattleForge

BattleForge is a free Massively Multiplayer Online Role Playing Game (MMORPG) developed by EA Phenomic with DirectX-11 graphics capability. Combining strategic cooperative battles, the community of MMO games, and trading card gameplay, BattleForge leaves players free to put their creatures, spells and buildings into whatever combinations they see fit. These units are represented in the form of digital cards from which you build your own unique army. With minimal resources and a custom tech tree to manage, the gameplay is unbelievably accessible and action-packed.

Benchmark Reviews uses the built-in graphics benchmark to measure performance in BattleForge, using Very High quality settings (detail) and 8x anti-aliasing with auto multi-threading enabled. BattleForge is one of the first titles to take advantage of DirectX-11 in Windows 7, and offers a very robust color range throughout the busy battleground landscape. The charted results illustrate how performance measures up between video cards when Screen Space Ambient Occlusion (SSAO) is enabled.

- BattleForge v1.2

- Extreme Settings: (Very High Quality, 8x Anti-Aliasing, Auto Multi-Thread)

Cost Analysis: BattleForge (1680x1050)

Test Summary: The BattleForge benchmark with all the settings cranked up looks very nice indeed, and also makes very good use of the CrossFire sets. The HD6850 CrossFire configuration gives an average 48% performance increase over a single card, which equates to 96% scaling at 1920x1080. The HD6950 CrossFire setup gives on average 51% extra performance over its single-card contenders; this equates to 102% scaling at 1920x1080, which is an excellent result indeed. In our cost analysis you will see that the cost per frame ratings of the HD6850 CrossFire setup and the HD6950 CrossFire setup compare very well with those of their single-card counterparts. This is great news and shows what is possible with the right optimizations.


DX11: Lost Planet 2

Lost Planet 2 is the second instalment in the saga of the planet E.D.N. III, ten years after the story of Lost Planet: Extreme Condition. The snow has melted and the lush jungle life of the planet has emerged with angry and luscious flora and fauna. With the new environment comes the addition of DirectX-11 technology to the game.

Lost Planet 2 takes advantage of DX11 features including tessellation and displacement mapping on water, level bosses, and player characters. In addition, soft body compute shaders are used on 'Boss' characters, and wave simulation is performed using DirectCompute. These cutting edge features make for an excellent benchmark for top-of-the-line consumer GPUs.

The Lost Planet 2 benchmark offers two different tests, which serve different purposes. This article uses tests conducted on benchmark B, which is designed to be a deterministic and effective benchmark tool featuring DirectX 11 elements.

- Lost Planet 2 Benchmark 1.0

- Moderate Settings: (2x AA, Low Shadow Detail, High Texture, High Render, High DirectX 11 Features)

Cost Analysis: Lost Planet 2 (1680x1050)

Test Summary: The Lost Planet 2 benchmark is a tough cookie for a single video card to crack; in our tests we had to use relatively moderate settings just to get some acceptable numbers. This is one game that really benefits from a CrossFire setup, which enables you to increase the visual settings. The HD6850 CrossFire configuration gives an average 36% performance increase over a single card, which equates to 72% scaling at 1920x1080. The HD6950 CrossFire setup gives on average 37% extra performance over its single card contenders; this equates to 74% scaling at 1920x1080. In our cost analysis you will see that the cost per frame rating of the HD6850 CrossFire setup and the HD6950 CrossFire setup is very high compared with the single card results.


DX11: Tom Clancy's HAWX 2

Tom Clancy's H.A.W.X.2 has been optimized for DX11 enabled GPUs and has a number of enhancements to not only improve performance with DX11 enabled GPUs, but also greatly improve the visual experience while taking to the skies. The game uses a hardware terrain tessellation method that allows a high number of detailed triangles to be rendered entirely on the GPU when near the terrain in question. This allows for a very low memory footprint and relies on the GPU power alone to expand the low resolution data to highly realistic detail.

The Tom Clancy's HAWX2 benchmark uses normal game content in the same conditions a player will find in the game, and allows users to evaluate the enhanced visuals that DirectX-11 tessellation adds into the game. The Tom Clancy's HAWX2 benchmark is built from exactly the same source code that's included with the retail version of the game. HAWX2's tessellation scheme uses a metric based on the length in pixels of the triangle edges. This value is currently set to 6 pixels per triangle edge, which provides an average triangle size of 18 pixels.

The end result is perhaps the best tessellation implementation seen in a game yet, providing a dramatic improvement in image quality over the non-tessellated case, and running at playable frame rates across a wide range of graphics hardware.
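As a rough sanity check on the tessellation figures quoted above, a right triangle whose two legs are each 6 pixels long covers 18 pixels, which matches the stated average. This reading is an assumption: the benchmark description does not spell out how the 18-pixel figure is derived, and the function name below is purely illustrative.

```python
def right_triangle_area(leg_px: float) -> float:
    """Area in pixels of a right triangle with two equal legs (assumed shape)."""
    return 0.5 * leg_px * leg_px


# Target edge length quoted for the HAWX 2 tessellation metric.
EDGE_TARGET_PX = 6

if __name__ == "__main__":
    area = right_triangle_area(EDGE_TARGET_PX)
    print(f"Approximate triangle size: {area} pixels")
```

An equilateral triangle with 6-pixel edges would cover slightly less area, so the exact triangle shape behind the quoted figure remains a guess.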

- Tom Clancy's HAWX 2 Benchmark 1.0.4

- Extreme Settings: (Maximum Quality, 8x AA, 16x AF, DX11 Terrain Tessellation)

Cost Analysis: HAWX 2 (1680x1050)

Test Summary: HAWX 2 is a strange game in that you need to look very closely to see the difference in quality settings. The main difference is in the terrain, but this is easily overlooked as you are busy fighting with the controls just to fly in a straight line. This is another game that really doesn't need a CrossFire setup; you get very good performance with a single HD6850. The HD6850 CrossFire configuration gives an average 46% performance increase over a single card, which equates to 92% scaling at 1920x1080. The HD6950 CrossFire setup gives on average 47% extra performance over its single card contenders; this equates to 94% scaling at 1920x1080. In our cost analysis you will see that the cost per frame rating of the HD6850 CrossFire setup and the HD6950 CrossFire setup is very close to the single card results.


DX11: Metro 2033

Metro 2033 is an action-oriented video game with a combination of survival horror and first-person shooter elements. The game is based on the novel Metro 2033 by Russian author Dmitry Glukhovsky. It was developed by 4A Games in Ukraine and released in March 2010 for Microsoft Windows. Metro 2033 uses the 4A game engine, developed by 4A Games. The 4A Engine supports DirectX-9, 10, and 11, along with NVIDIA PhysX and GeForce 3D Vision.

The 4A engine is multi-threaded such that only PhysX has a dedicated thread, and it uses a task model without any pre-conditioning or pre/post-synchronizing, allowing tasks to be done in parallel. The 4A game engine can utilize a deferred shading pipeline and uses tessellation for greater performance. It also has HDR (complete with blue shift), real-time reflections, color correction, film grain and noise, and supports multi-core rendering.

Metro 2033 features superior volumetric fog, double PhysX precision, object blur, sub-surface scattering for skin shaders, parallax mapping on all surfaces, and greater geometric detail with less aggressive LODs. Using PhysX, the engine supports features such as destructible environments, cloth and water simulations, and particles that can be fully affected by environmental factors.

NVIDIA has been diligently working to promote Metro 2033, and for good reason: it's one of the most demanding PC video games we've ever tested. When their flagship GeForce GTX 480 struggles to produce 27 FPS with DirectX-11 anti-aliasing turned to its lowest setting, you know that only the strongest graphics processors will generate playable frame rates. All of our tests enable Advanced Depth of Field and Tessellation effects, but disable advanced PhysX options.

- Metro 2033

- Moderate Settings: (Very-High Quality, AAA, 16x AF, Advanced DoF, Tessellation, 180s Fraps Chase Scene)

Cost Analysis: Metro 2033 (1680x1050)

Test Summary: Metro 2033 is a tough cookie for a single video card to crack; in our tests the single cards only just manage acceptable numbers. This is another game that really benefits from a CrossFire setup, which enables you to increase the visual settings without worrying about FPS. The HD6850 CrossFire configuration gives an average 45% performance increase over a single card, which equates to 90% scaling at 1920x1080. The HD6950 CrossFire setup gives on average 49% extra performance over its single card contenders; this equates to 98% scaling at 1920x1080. In our cost analysis you will see that the cost per frame rating of the HD6850 CrossFire setup and the HD6950 CrossFire setup is very high compared with the single card results.


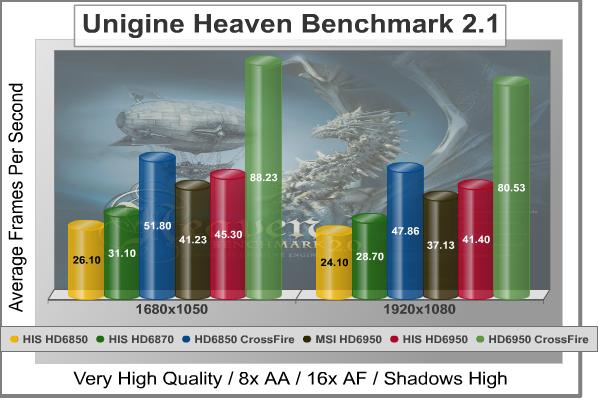

DX11: Unigine Heaven 2.1

The Unigine Heaven 2.1 benchmark is a free publicly available tool that grants the power to unleash the graphics capabilities in DirectX-11 for Windows 7 or updated Vista Operating Systems. It reveals the enchanting magic of floating islands with a tiny village hidden in the cloudy skies. With the interactive mode, emerging experience of exploring the intricate world is within reach. Through its advanced renderer, Unigine is one of the first to set precedence in showcasing the art assets with tessellation, bringing compelling visual finesse, utilizing the technology to the full extent and exhibiting the possibilities of enriching 3D gaming.

The distinguishing feature in the Unigine Heaven benchmark is a hardware tessellation that is a scalable technology aimed for automatic subdivision of polygons into smaller and finer pieces, so that developers can gain a more detailed look of their games almost free of charge in terms of performance. Thanks to this procedure, the elaboration of the rendered image finally approaches the boundary of veridical visual perception: the virtual reality transcends conjured by your hand.

Although Heaven-2.1 was recently released and used for our DirectX-11 tests, the benchmark results were extremely close to those obtained with Heaven-1.0 testing. Since only DX11-compliant video cards will properly test on the Heaven benchmark, only those products that meet the requirements have been included.

- Unigine Heaven Benchmark 2.1

- Extreme Settings: (High Quality, Normal Tessellation, 16x AF, 4x AA)

Cost Analysis: Unigine Heaven (1680x1050)

Test Summary: Unigine Heaven takes no prisoners. Often referred to as "Tessellation mark" behind the scenes, this synthetic benchmark was designed to test DX11 hardware to its limit. The HD6850 CrossFire configuration gives an average 44% performance increase over a single card, which equates to 88% scaling at 1920x1080. The HD6950 CrossFire setup gives on average 51% extra performance over its single card contenders; this equates to 102% scaling at 1920x1080. In our cost analysis you will see that the cost per frame rating of the HD6850 CrossFire setup is high compared with the single card results, while the HD6950 CrossFire setup offers the same value as its single card contenders.

In the following sections we will report our findings on power consumption and temperatures.


AMD Radeon HD6850 and HD6950 CrossFire Temperatures

Benchmark tests are always nice, so long as you care about comparing one product to another. But when you're an overclocker, gamer, or merely a PC hardware enthusiast who likes to tweak things on occasion, there's no substitute for good information. Benchmark Reviews has a very popular guide written on Overclocking Video Cards, which gives detailed instruction on how to tweak a graphics card for better performance. Of course, not every video card has overclocking head room. Some products run so hot that they can't suffer any higher temperatures than they already do. This is why we measure the operating temperature of the video card products we test.

To begin my testing, I use GPU-Z to measure the temperature at idle as reported by the GPU. Next I use FurMark's "Torture Test" to generate maximum thermal load and record GPU temperatures at high-power 3D mode. The ambient room temperature remained at a stable 27°C throughout testing. FurMark does two things extremely well: it drives the thermal output of any graphics processor higher than applications or video games realistically could, and it does so with consistency every time. FurMark works great for testing the stability of a GPU as the temperature rises to the highest possible output. The temperatures discussed below are absolute maximum values, and not representative of real-world performance.

HD6850 CrossFire Setup

As previously stated, my ambient temperature remained at a stable 27°C throughout the testing procedure. The top card in a CrossFire setup (GPU 1) will always be warmer than the bottom card (GPU 2). The coolers on both of the cards in this setup are more than capable but have a very relaxed fan profile, resulting in higher temperatures. GPU 1 (HIS HD6850 IceQ X Turbo) had an idle temperature of 54°C while GPU 2 (HIS HD6870 IceQ X Turbo X) had an idle temperature of 42°C. Creating a load with FurMark saw the temperatures rise to worrying levels: GPU 1 was in the red zone at 89°C (fan speed 67%) while GPU 2 was happily chugging along at 67°C (fan speed 56%). Changing the fan speed of GPU 1 to 100% saw the temperature drop to 84°C, which is still quite high but much better. The noise level at max speed is honestly still quite bearable, and we have a very nice 5°C improvement in load temperature.

When installing the HD6950 CrossFire setup I had initially placed the MSI HD6950 in the top position, but because its fan is much louder than the HIS HD6950's fan I ended up swapping them around. Because the top card always gets hotter, the MSI HD6950's fan would ramp up and create a racket, while the HIS HD6950's fan is much quieter even at 100%, so the swap just made sense.

HD6950 CrossFire Setup

GPU 1 (HIS HD6950 IceQ X Turbo X) had an idle temperature of 48°C while GPU 2 (MSI HD6950 Twin Frozr III PE/OC) had an idle temperature of 39°C. Creating a load with FurMark saw the temperatures rise to worrying levels once again: GPU 1 was in the red zone at 90°C (fan speed 60%) while GPU 2 was happily chugging along at 68°C (fan speed 62%). Changing the fan speed of GPU 1 to 100% saw the temperature drop to 79°C, which is still quite high but much better. The HIS HD6950 IceQ X Turbo X's noise level at max speed is honestly still quite bearable, and we have a very nice 11°C improvement in load temperature.

VGA Power Consumption

Life is not as affordable as it used to be, and items such as gasoline, natural gas, and electricity all top the list of resources which have exploded in price over the past few years. Add to this the limit of non-renewable resources compared to current demands, and you can see that the prices are only going to get worse. Planet Earth needs our help, and needs it badly. With forests becoming barren of vegetation and snow capped poles quickly turning brown, the technology industry has a new attitude towards turning "green". I'll spare you the powerful marketing hype that gets sent from various manufacturers every day, and get right to the point: your computer hasn't been doing much to help save energy... at least up until now.

For power consumption tests, Benchmark Reviews utilizes an 80-Plus Gold rated Corsair HX750w (model: CMPSU-750HX). This power supply unit has been tested to provide over 90% typical efficiency by Ecos Plug Load Solutions. To measure isolated video card power consumption, I used the energenie ENER007 power meter made by Sandal Plc (UK).

A baseline test is taken without a video card installed inside our test computer system, which is allowed to boot into Windows-7 and rest idle at the login screen before power consumption is recorded. Once the baseline reading has been taken, the graphics cards are installed and the system is again booted into Windows and left idle at the login screen. Our final loaded power consumption reading is taken with the video card running a stress test using FurMark. Below is a chart with the isolated video card power consumption (not system total) displayed in Watts for each specified test product:

| VGA Product Description (sorted by combined total power) | Idle Power | Loaded Power |
|---|---|---|
| NVIDIA GeForce GTX 480 SLI Set | 82 W | 655 W |
| NVIDIA GeForce GTX 590 Reference Design | 53 W | 396 W |
| ATI Radeon HD 4870 X2 Reference Design | 100 W | 320 W |
| AMD Radeon HD 6990 Reference Design | 46 W | 350 W |
| NVIDIA GeForce GTX 295 Reference Design | 74 W | 302 W |
| ASUS GeForce GTX 480 Reference Design | 39 W | 315 W |
| ATI Radeon HD 5970 Reference Design | 48 W | 299 W |
| NVIDIA GeForce GTX 690 Reference Design | 25 W | 321 W |
| ATI Radeon HD 4850 CrossFireX Set | 123 W | 210 W |
| ATI Radeon HD 4890 Reference Design | 65 W | 268 W |
| AMD Radeon HD 7970 Reference Design | 21 W | 311 W |
| NVIDIA GeForce GTX 470 Reference Design | 42 W | 278 W |
| NVIDIA GeForce GTX 580 Reference Design | 31 W | 246 W |
| NVIDIA GeForce GTX 570 Reference Design | 31 W | 241 W |
| ATI Radeon HD 5870 Reference Design | 25 W | 240 W |
| ATI Radeon HD 6970 Reference Design | 24 W | 233 W |
| NVIDIA GeForce GTX 465 Reference Design | 36 W | 219 W |
| NVIDIA GeForce GTX 680 Reference Design | 14 W | 243 W |
| Sapphire Radeon HD 4850 X2 11139-00-40R | 73 W | 180 W |
| NVIDIA GeForce 9800 GX2 Reference Design | 85 W | 186 W |
| NVIDIA GeForce GTX 780 Reference Design | 10 W | 275 W |
| NVIDIA GeForce GTX 770 Reference Design | 9 W | 256 W |
| NVIDIA GeForce GTX 280 Reference Design | 35 W | 225 W |
| NVIDIA GeForce GTX 260 (216) Reference Design | 42 W | 203 W |
| ATI Radeon HD 4870 Reference Design | 58 W | 166 W |
| NVIDIA GeForce GTX 560 Ti Reference Design | 17 W | 199 W |
| NVIDIA GeForce GTX 460 Reference Design | 18 W | 167 W |
| AMD Radeon HD 6870 Reference Design | 20 W | 162 W |
| NVIDIA GeForce GTX 670 Reference Design | 14 W | 167 W |
| ATI Radeon HD 5850 Reference Design | 24 W | 157 W |
| NVIDIA GeForce GTX 650 Ti BOOST Reference Design | 8 W | 164 W |
| AMD Radeon HD 6850 Reference Design | 20 W | 139 W |
| NVIDIA GeForce 8800 GT Reference Design | 31 W | 133 W |
| ATI Radeon HD 4770 RV740 GDDR5 Reference Design | 37 W | 120 W |
| ATI Radeon HD 5770 Reference Design | 16 W | 122 W |
| NVIDIA GeForce GTS 450 Reference Design | 22 W | 115 W |
| NVIDIA GeForce GTX 650 Ti Reference Design | 12 W | 112 W |
| ATI Radeon HD 4670 Reference Design | 9 W | 70 W |

The table below shows readings for each of the CrossFire setups. Power consumption is a major factor to consider when buying a power supply and when deciding which video card setup you want to run, and it should not be overlooked. We can't make you care, but we will provide you with the facts and hope that you take note and do your bit to care for our planet.

| CrossFire Setup | System Idle (No Video Card) (W) | CrossFire Idle (-119) (W) | CrossFire Load (-119) (W) |
|---|---|---|---|
| HIS HD6850 IceQ X Turbo / HIS HD6870 IceQ X Turbo X | 119 | 51 | 317 |
| HIS HD6950 IceQ X Turbo X / | 119 | 59 | 386 |
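The baseline subtraction behind the CrossFire figures can be sketched in a few lines. This is a minimal illustration, assuming the "(-119)" in the column headers means the 119 W no-card baseline has already been subtracted from the wall reading; the function names are hypothetical and the wall readings are reconstructed from the table rather than quoted from it.

```python
BASELINE_W = 119  # system idle at the login screen with no video card installed


def isolated_power(wall_reading_w: int, baseline_w: int = BASELINE_W) -> int:
    """Video card power draw: wall reading minus the no-card baseline."""
    return wall_reading_w - baseline_w


def wall_reading(isolated_w: int, baseline_w: int = BASELINE_W) -> int:
    """Inverse step: the wall reading implied by a tabulated isolated figure."""
    return isolated_w + baseline_w


# Isolated idle and load figures (watts) for the HD6850 / HD6870 pair.
ISOLATED_IDLE_W = 51
ISOLATED_LOAD_W = 317

if __name__ == "__main__":
    for label, watts in (("idle", ISOLATED_IDLE_W), ("load", ISOLATED_LOAD_W)):
        at_wall = wall_reading(watts)
        print(f"{label}: {at_wall} W at the wall, {isolated_power(at_wall)} W isolated")
```

Under that assumption the whole system with the HD6850 / HD6870 pair would have drawn roughly 170 W idle and 436 W under FurMark load at the wall.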

Power consumption and PSU efficiency are two different things altogether. In this example the power meter tells us how many watts the PSU is pulling from the wall; a less efficient PSU will pull more power from the wall to feed the system's power needs. This is why it is important to buy a Bronze, Silver, Gold or even Platinum certified Power Supply Unit so you are not wasting energy that needn't be wasted.
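To put a number on the efficiency point, here is a small sketch of how wall draw grows as PSU efficiency falls. The 400 W load and the two efficiency values are hypothetical figures chosen for illustration only; they are not measurements from this review, and real efficiency also varies with load.

```python
def wall_draw(dc_load_w: float, efficiency: float) -> float:
    """Power pulled from the wall for a given DC load and PSU efficiency (0 to 1)."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be between 0 (exclusive) and 1 (inclusive)")
    return dc_load_w / efficiency


# Hypothetical values, for illustration only.
DC_LOAD_W = 400.0
EFFICIENCIES = {"90% efficient PSU": 0.90, "80% efficient PSU": 0.80}

if __name__ == "__main__":
    for name, eff in EFFICIENCIES.items():
        print(f"{name}: {wall_draw(DC_LOAD_W, eff):.0f} W from the wall")
```

The difference between the two results is energy turned into heat inside the PSU rather than delivered to the components.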

AMD Radeon HD6850 and HD6950 CrossFire Final Thoughts

CrossFire is more flexible than SLI, and this has its advantages when it comes to cost. With NVIDIA SLI the video cards don't need to be identical, but the GPU of each card does. With AMD CrossFire you have more freedom in choosing a pairing; for instance, in our tests we paired an HD6850 video card with an HD6870 video card, which resulted in the HD6870 scaling down to HD6850 performance. With CrossFire you can pair any card in the same series, e.g. any HD65XX video card with any other HD65XX video card, and so on up the series. What you can't do, though, is pair cards from different generations or series, e.g. an HD68XX video card with an HD69XX or HD58XX video card would not work. There are other benefits to a CrossFire setup that this article hasn't gone into due to hardware constraints, and these are along the lines of multiple monitor setups at high resolutions with and without 3D. Sure, a single AMD Radeon video card can drive three monitors simultaneously, but you won't get top-notch triple monitor performance with a single Radeon HD6850.

There are many factors you need to take into account before you even consider setting up a CrossFire configuration. Firstly, you need a motherboard that is not only capable, but also has the available lanes after you have installed all of your storage devices and other PCI-E lane consuming peripherals. As well as having a capable motherboard, you will want to think about the expansion card slot layout on the motherboard, because video cards in close proximity to each other tend to get very hot very quickly. This brings me to temperatures: you will need a case with excellent airflow to keep your CrossFire configuration as cool as possible, as high GPU temperatures for extended periods will shorten the life of your video cards.

Next you will want a Power Supply Unit that is not only capable of providing enough power, but also has the special 6-pin or 8-pin PCI-E power connectors. Efficiency is another important factor when buying a PSU for a CrossFire setup, as power consumption will be high already and even higher than it needs to be with an inefficient PSU. Noise can also be a problem: the fan on at least the top video card will be working much harder and creating more noise, so you may have to make a compromise here, as not all quiet fans perform well. If your video cards have the headroom you may also choose to overclock them. Overclocking a CrossFire setup is not as straightforward as overclocking a single card, but it is still possible. You will need to learn the capabilities of each card separately and apply the settings using AMD Overdrive. Remember that increasing clocks means increasing heat, so be careful how far you push when using air cooling.

AMD Radeon HD6850 and HD6950 CrossFire Conclusion

Important: In this section I am going to write a brief summary on the Performance and Price aspects of running a CrossFire configuration. The views expressed are my own and are based on my experience while writing this article and testing the products herein. Instead of the usual point rating system we use at Benchmark Reviews, I have decided to end the article with my thoughts and encourage you to make your own conclusions. I would strongly urge you to read the entire review, if you have not already, so that you can make an educated decision for yourself.

Throughout the ten tests conducted I have been pleasantly surprised by today's level of scaling with two video cards in CrossFire. It has been well documented in the past that dual video card configurations from both AMD and NVIDIA do not always scale as you might expect. This has mainly been due to driver support and/or game optimisation (or lack thereof, as the case may be) for the pair of cards in question.

On average the HIS HD6850 IceQ X Turbo / HIS HD6870 IceQ X Turbo X CrossFire set performed 42.5% better than the single cards which equates to 85% scaling. The HIS HD6950 IceQ X Turbo X / MSI HD6950 Twin Frozr III PE/OC CrossFire set performed 45.2% better than the single cards which equates to 90.4% scaling.
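The scaling percentages quoted throughout this article are consistently twice the average performance gain (42.5% becomes 85%, 45.2% becomes 90.4%). The sketch below applies that relationship to the five test summaries above (BattleForge, Lost Planet 2, HAWX 2, Metro 2033 and Unigine Heaven). The doubling rule is inferred from the published figures rather than stated as a formula, and the function name is illustrative only.

```python
from statistics import mean

# Average CrossFire gains (%) quoted in the five test summaries above,
# in order: BattleForge, Lost Planet 2, HAWX 2, Metro 2033, Unigine Heaven.
GAINS_PCT = {
    "HD6850 CrossFire": [48, 36, 46, 45, 44],
    "HD6950 CrossFire": [51, 37, 47, 49, 51],
}


def scaling_from_gain(gain_pct: float) -> float:
    """Inferred convention: scaling % is twice the average gain %."""
    return 2 * gain_pct


if __name__ == "__main__":
    for setup, gains in GAINS_PCT.items():
        avg_gain = mean(gains)
        print(f"{setup}: {avg_gain:.1f}% average gain, "
              f"{scaling_from_gain(avg_gain):.1f}% scaling")
```

These five-test averages differ slightly from the overall figures above because the overall figures cover all ten tests in the review.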

The HIS HD6950 IceQ X Turbo X / MSI R6950 Twin Frozr III PE/OC CrossFire setup will cost you two cents short of $600 if you were to buy them today. If you were to buy just one and hold out for 3-6 months, I'm sure the price would have dropped by then, allowing you to get a great performing pair at a lower price. Our tests have shown that the AMD Radeon HD6950 GPU scales very well in CrossFire, and this links directly to our cost analysis: the HD6950 CrossFire set has only a slightly higher cost per FPS rating than the single card rating, and in most cases it is the same or less than $0.50 different.

The HIS HD6850 IceQ X Turbo / HIS HD6870 IceQ X Turbo X CrossFire setup will cost you two cents short of $415 if you were to buy them today. Without the hardware to back up my theory it's hard to say for sure, but at this price point you might well be better off buying an overclocked Radeon HD6970 video card for under $400. Our tests have shown that the AMD Radeon HD6850/HD6870 GPU combo scales quite well in CrossFire, but not as much as I would have hoped, and this links directly to our cost analysis: the HD6850 CrossFire set has a consistently higher cost per FPS rating than the single cards, costing anywhere between $0.22 and $1.94 more per FPS.

If I were on a budget looking for a good 1080p gaming experience, I would likely buy a single HD6870 video card now, wait until prices drop down the line, and then pick up another HD6870 or HD6850 depending on available funds. If I were looking to build a performance-oriented triple monitor setup, I would definitely buy the AMD Radeon HD6950 CrossFire pair. Sure, $600 is a lot to fork out in one go, but when you are looking for top performance you can't afford to compromise.
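For readers who want to run their own cost comparison, the sketch below assumes the cost per FPS rating is simply the purchase price divided by the average frame rate; the article does not spell out the formula, so treat that as an assumption. The pair prices come from the text above, while the frame rate is a hypothetical placeholder to be replaced with a figure from the charts.

```python
def cost_per_fps(price_usd: float, avg_fps: float) -> float:
    """Dollars paid per average frame per second (assumed definition)."""
    if avg_fps <= 0:
        raise ValueError("avg_fps must be positive")
    return price_usd / avg_fps


# Pair prices quoted in the article ("two cents short of" $415 and $600).
PAIR_PRICES_USD = {
    "HD6850 / HD6870 CrossFire": 414.98,
    "HD6950 CrossFire": 599.98,
}

# Hypothetical frame rate, for illustration only; not a result from this review.
PLACEHOLDER_FPS = 100.0

if __name__ == "__main__":
    for setup, price in PAIR_PRICES_USD.items():
        print(f"{setup}: ${cost_per_fps(price, PLACEHOLDER_FPS):.2f} per FPS "
              f"at {PLACEHOLDER_FPS:.0f} FPS")
```

Running the same function for a single card's price and frame rate gives the figure to compare against.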

Pros:

+ CrossFire enables you to run games at their highest quality settings

+ CrossFire allows you to run a triple monitor setup at high resolutions

+ HD6950 CrossFire scales to 90% on average

+ Temperatures were still manageable on the factory overclocked video cards

+ HIS video cards remained very quiet throughout testing

+ No driver issues were experienced

Cons:

- Hot air from GPUs exhausted into case

- Top GPU gets hot quickly

- Fan profiles are too relaxed on HIS video cards

- Loud fans on the MSI HD6950 when in the top position

- High power consumption

- Need a powerful PSU

Questions? Comments? Benchmark Reviews really wants your feedback. We invite you to leave your remarks in our Discussion Forum.


Comments

1- I do really wish (for your graphs), you'd use a background that was uniform, to make them easier to see.

2- Nice evaluation as always, but I wish you would have included at least 2 or 3 nVidia based cards, for a broader range of comparisons.

But like I said, very nicely done (as always), & much thanks to BenchMark Reviews!

2) This article was meant only to show benefits of CrossFire.... No need for NVIDIA results.

Thanks for the positive comments.

Also, can we expect a 6870 Crossfire review in the future by any chance? I'd love to see how two identical 6870 perform.

Any further spin off of this review will depend on its popularity, if there is enough interest then we will.

It looks like multi-GPU setups are out of the question for me unless I spend more on air conditioning. :P

As for the top card's temperatures, any mid-end P67/Z67 motherboard has 3 slots open for each card and that should leave enough room for heat to dissipate from the bottom card. Right???

Is it really worth the, added heat build up/extra power needed/price of 2 high end GPU's?

Take my advice & stick to one nice GPU, it's still going to play near enough everything.

Now, a Radeon 6970 might have equalled or beat the CrossFireX 6850s, but it costs about twice as much as one, which matters if you're building on a budget and starting with a single card.

First off, you are comparing one card to (double the price)two cards. Where as I was comparing one card with the same "value" as two cards.

Secondly, I see you don't mention the extra heat they give out & power draw they take, do you not consider this a added cost?

Re power and heat: honestly, no, I don't consider those costs worth worrying about. If your electrical budget is that tight, you've bigger problems than the power your video card draws. Of course, if you need to upgrade your power supply, that's another issue. But presumably if you're planning for CrossFireX in the future, your original power supply was chosen with this criteria in mind.

Would be interesting to see a survey done on people with sli/crossfire, to see if they will stick with it, come their next build, or go back to just using the one gpu.

My guess is a lot would return to the one gpu set-up, realising sli/crossfire just isn't worth the expense/trouble.

2 months ago I bought 2 Asus 6850 DirectCU and I can assure you that it was worth every euro cent I have spent (2 x 119euros)

Here GTX580 costs 420 euros and 6970 is 320 euros, so you can see the price difference against performance...

with good choice of motherboard, case and fans the heat can be reduced.

In my particular case I have CM690 fully populated with Scythe Slip Streams, my motherboard is Gigabyte P45-UD3P that has 3 slots between PCIe x16 connectors, so when CF is used you practically have one slot of air combined with side fan blowing inside that area :)

Overall I'm quite happy with this build (although the CPU/motherboard/RAM combination is bit old, despite 4+ GHz overclock).

Next time when I upgrade (probably Ivy Bridge based) I'll retain the GPU setup. Of course until there is a game on the market that will choke it :) But until than, I intend to enjoy it as long as I can :)

Please realise, no matter which "good choice of motherboard/Case/fans" you eventually decide to go with. You can't get away from the fact, one gpu will run cooler & cheaper then two in that same system.

And remember, both amd/nvidia are trying to show sli/crossfire in a good light, their bound to, it sells them twice as much of their product.

But the thing is that one GPU will for sure run cooler but I don't agree that always be a cheaper variant. Did you saw the prices I mentioned?

As of temperatures in case of 1 or 2 GPU - yes, 2 GPU will run hotter but as I said, with good setup the temperature can still be in the safe zone...

But does it really work out cheaper after you have added the more expensive PSU you will need, plus the added running cost over the years, not to mention driver/heat related issues?

And I have to admit, with latest drivers AMD has done a very good job with their CF scaling and driver support. Actually that was one of the main triggers for me to jump into CF wagon (I was happy user of GTX280 before this setup). The other was that I was locked to CF since I have older motherboard.

lets not get into an argument, lets just agree to disagree.

My overall point is only to enlighten people to the fact, that there is more to running sli/crossfire then "just" the cost of the extra card, & in "my opinion" it's not worth it.

as to Pigbristle and to Steven :) I think we are all correct from our standing points :)

so here is my closing thought on this subject:

I had the possibility to easily upgrade (performance wise) from single GPU to CF for very little cost and I did it. Simple as that.

As Luay put it: "Poor Man's High-end Rig" :) I think that is in essence what I'm saying...

P.S.

I guess that I'm looking at this matter more like a PC enthusiast/hobbyist...

That would be the reason to consider a high-end GPU or even multi-GPU, to overclock my CPU, to buy a good & powerful PSU (that will allow some breathing room), to buy a VelociRaptor as the main HDD, to constantly follow web sites like this one for reviews and for sharing thoughts and advice... ;)

Otherwise I would just go to the nearby computer shop, buy the PC that suits my budget and call it a day... :)

I used my diagnosis as my nick name :-P

hehe :) If I cannot joke with myself, then with whom can I?! :)

I know about OEM PCs... that is why I always build my PCs (at least the last 3)...

Another thing: do you think an MSI 6870 Hawk is just as good as the IceQ 6870? I'm going to get a single 6850/6870 since I am on a budget, then get another by next year.

I'm not sure what you mean about pairing 6870 with 6850. So far as I know, CrossFire won't work this way. Please explain what you mean.

The MSI 6870 Hawk will give you the same framerates as the IceQ 6870 if they both have the same clock speeds; their differences will be A) noise level, B) cooling capability, C) power design, D) overclock capability. Since I don't have the MSI 6870 Hawk I can't really answer your question truthfully.

/index.php?option=com_content&task=view&id=718&Itemid=72

It is also about 15-20 dollars cheaper right now. Clock speed is about 45 MHz less.

It's awesome that I can SLI together a 5850 and a 5950 and the 5950 works at 90% and the 5850 works at 90% which is something like 160% of the power of the 5950.

Sure, I could just buy a top end card every time, but I would end up spending (2x)-x where x is the number of upgrades of the money.

Example. 10 upgrades.

9 * 600 = 5,400

10 * 250 = 2,500

I took 1 off the count of 600 dollar cards because I figure you only need 9 of them to equal the performance of 10 of the cheaper cards (if used in pairs).
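
For anyone who wants to check the sums, here is a minimal sketch of the comparison in Python. It only multiplies out the figures quoted above (9 cards at 600 dollars against 10 cards at 250 dollars); the variable and function names are made up for illustration, and it ignores PSU, motherboard and running costs.

```python
# Figures taken from the example in this comment; names are illustrative only.
HIGH_END_PRICE = 600    # dollars per top-end card
MID_RANGE_PRICE = 250   # dollars per cheaper card
UPGRADES = 10           # number of upgrade cycles in the example


def total_cost(price_per_card: int, cards_bought: int) -> int:
    """Total spend on cards alone (no PSU, motherboard or power costs)."""
    return price_per_card * cards_bought


# One fewer high-end card, following the assumption stated in the comment.
high_end_total = total_cost(HIGH_END_PRICE, UPGRADES - 1)
mid_range_total = total_cost(MID_RANGE_PRICE, UPGRADES)
difference = high_end_total - mid_range_total

print(f"High-end route:  ${high_end_total:,}")
print(f"Mid-range route: ${mid_range_total:,}")
print(f"Difference:      ${difference:,}")
```

Changing the two prices or the upgrade count at the top shows how sensitive the gap is to the assumptions.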

I never buy cheap motherboards anyway; even if they have a single PCI-E x16 slot they will be over $100.

I also never buy a cheap PSU. Even w/o using SLI my PSU is a 600 watt unit from a high profile brand, because PSU failures are very, very costly, and if you're on a budget then that can cause problems.